OpenAI recently released GPT 5.2, their self-proclaimed "most advanced model." The announcement came with impressive benchmarks, promising significant advancements in coding, reasoning, and vision. On paper, it looks incredible.

However, since its release to users, the reception has been a mixed bag. There appears to be a gap between the lab tests and what people are experiencing in day-to-day use. So, what's the real story?

This post will provide a balanced look at what GPT 5.2 is, what it promises, where it seems to be falling short, and what it all means for businesses looking for AI solutions.

What is GPT 5.2? A family of new models

First, GPT 5.2 isn't just one model. It's the latest series of large language models from OpenAI, built for complex, professional tasks. It is a family of options, with each one tailored for different needs and price points.

According to the official OpenAI announcement, here’s how the family breaks down:

- GPT-5.2 Instant: This is the speedy version designed for everyday tasks and learning within ChatGPT. It’s built for quick answers.
- GPT-5.2 Thinking: This is the core model for deeper, more complex work like coding and analysis. If you're using the API, this is the one you'll call with `gpt-5.2`.
- GPT-5.2 Pro: The most powerful and precise option in the lineup. It's for difficult questions where getting the best possible answer is the top priority.

Alongside these, OpenAI also rolled out GPT-5 mini as a smaller, more efficient option and a specialized GPT-5.2-Codex for developers. It’s clear they're trying to cover all their bases by offering a range of models.

The official story: New features and claimed improvements

Based on OpenAI's launch materials, the model shows significant gains over previous versions. Let's break down the key areas where GPT 5.2 is supposed to perform well, based on their official documentation.

Enhanced performance on professional tasks

One of the promises of GPT 5.2 is how it handles professional knowledge work and unlocks more economic value. OpenAI claims it’s much better at creating spreadsheets, building presentations, and writing sophisticated code than its predecessors.

To back this up, they point to the GDPval benchmark, where the model supposedly outperforms industry professionals on over 70% of tasks. The coding improvements are particularly notable, with the model hitting new state-of-the-art scores on tough benchmarks like SWE-Bench Pro (55.6%). The idea is that it can now handle more complex software engineering problems, moving beyond just generating simple scripts.

Stronger reasoning, vision, and long-context capabilities

OpenAI also highlights the model's ability to reason through complex information, especially over long documents. OpenAI's "needle in a haystack" test (OpenAI MRCRv2), which checks if a model can find specific information in a large body of text, showed near-perfect accuracy. This suggests you could provide it a lengthy report and trust it to extract the details you need.
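To make the idea of a "needle in a haystack" check concrete, here is a minimal sketch in Python. It is not OpenAI's MRCRv2 harness: the filler sentences, the access code, and `stub_model` (a hypothetical stand-in that simply searches the prompt) are all assumptions for illustration. In a real test, `stub_model` would be replaced by a call to the model under evaluation.

```python
import random

def build_haystack(filler_sentences, needle, depth, total_sentences, seed=0):
    """Return a long text with `needle` inserted at a relative depth (0.0 to 1.0)."""
    rng = random.Random(seed)
    sentences = [rng.choice(filler_sentences) for _ in range(total_sentences)]
    position = int(depth * total_sentences)
    sentences.insert(position, needle)
    return " ".join(sentences)

def stub_model(prompt):
    """Hypothetical stand-in for a real model call: scans the prompt for the code."""
    marker = "The access code is "
    start = prompt.find(marker)
    if start == -1:
        return "I could not find it."
    end = prompt.find(".", start)
    return prompt[start + len(marker):end]

def run_needle_test(model, depths, total_sentences=2000):
    """Hide one fact at several depths and record whether the model retrieves it."""
    filler = [
        "The quarterly report was filed on time.",
        "Shipping volumes rose slightly in the spring.",
        "The committee met to review the budget.",
    ]
    secret = "7421"  # made-up value, used only for this sketch
    needle = f"The access code is {secret}."
    results = {}
    for depth in depths:
        haystack = build_haystack(filler, needle, depth, total_sentences)
        prompt = haystack + "\n\nQuestion: What is the access code?"
        results[depth] = secret in model(prompt)
    return results

scores = run_needle_test(stub_model, depths=[0.0, 0.25, 0.5, 0.75, 1.0])
accuracy = sum(scores.values()) / len(scores)
print(scores, accuracy)
```

Note how clean this setup is: one fact, one question, uniform filler. That is part of why strong scores on tests like this do not automatically carry over to messy real documents.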

Its vision capabilities have also been upgraded, with error rates on tasks like chart reasoning and understanding software interfaces cut roughly in half. In theory, you can show it a complex dashboard or a motherboard diagram, and it will have a much better spatial understanding of what it’s seeing. It also scored top marks on science and math benchmarks like GPQA Diamond (92.4%) and FrontierMath (40.3%), pushing what AI can do in highly technical fields.

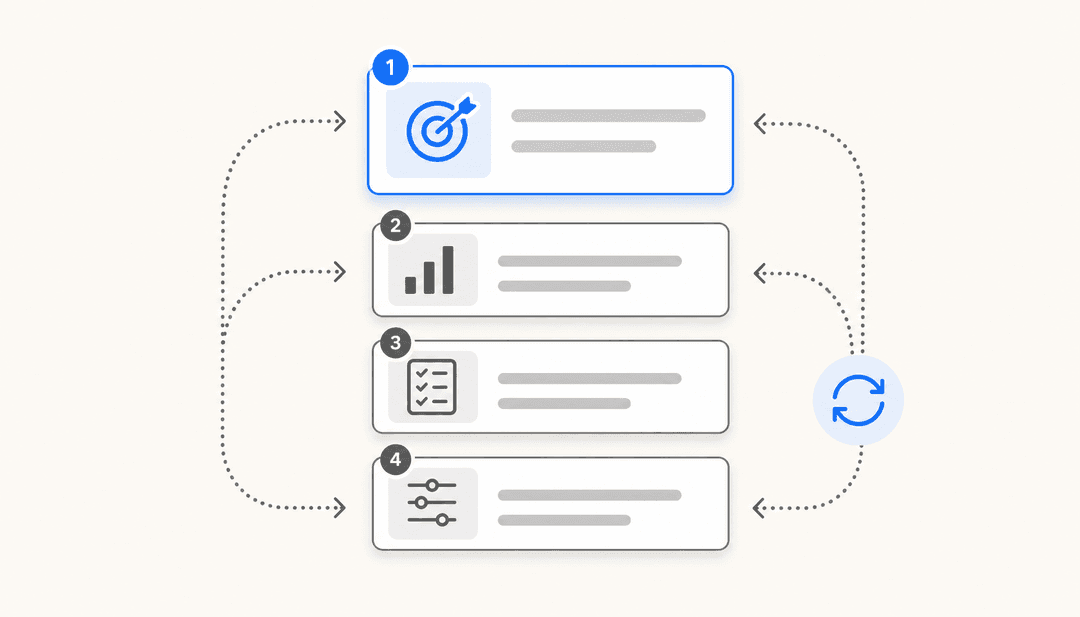

More reliable tool calling for agentic workflows

This is a more technical feature, but it’s important for building practical AI applications. "Tool calling" is how an AI model interacts with other software to perform actions, like looking up an order in Shopify or creating a ticket in Jira. GPT 5.2 is designed to be much better at executing these complex, multi-step tasks from start to finish.

It scored an impressive 98.7% on the Tau2-bench Telecom benchmark, a test designed to simulate multi-turn customer support tasks. In practice, this means you can build more reliable AI agents that can handle entire workflows, like resolving a customer's shipping issue or analyzing sales data, with fewer errors.
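For readers who have not built one, the application side of tool calling can be sketched roughly as follows. This is a simplified illustration, not OpenAI's API: the tool functions, the order data, and the shape of the simulated `model_calls` list (a tool name plus a JSON string of arguments) are assumptions made for the example.

```python
import json

# Hypothetical tools; a real integration would call Shopify, Jira, and so on.
def lookup_order(order_id):
    orders = {"A100": {"status": "delayed", "carrier": "ExampleShip"}}
    return orders.get(order_id, {"error": "order not found"})

def create_ticket(summary):
    return {"ticket_id": "TICKET-1", "summary": summary}

TOOLS = {"lookup_order": lookup_order, "create_ticket": create_ticket}

def execute_tool_call(call):
    """Run one tool call shaped like {"name": ..., "arguments": "<JSON string>"}."""
    name = call.get("name")
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    try:
        args = json.loads(call.get("arguments", "{}"))
        return TOOLS[name](**args)
    except (json.JSONDecodeError, TypeError) as exc:
        # Malformed or mismatched arguments are reported back instead of crashing
        return {"error": str(exc)}

def run_workflow(calls):
    """Execute a sequence of tool calls and collect (name, result) pairs."""
    return [(call.get("name"), execute_tool_call(call)) for call in calls]

# Simulated model output for a two-step shipping workflow
model_calls = [
    {"name": "lookup_order", "arguments": '{"order_id": "A100"}'},
    {"name": "create_ticket", "arguments": '{"summary": "Order A100 delayed"}'},
]
results = run_workflow(model_calls)
for name, result in results:
    print(name, result)
```

The error branches are the part that matters for reliability: every unknown tool name or malformed argument string is a failed step in the workflow, so a model that produces fewer of them needs fewer retries and fallbacks.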

Introducing GPT-5.2-Codex for advanced coding

For developers, OpenAI also released GPT-5.2-Codex, a version of the model specifically optimized for "software engineering and cybersecurity." This isn't just for writing a few lines of code; it's built to handle large codebases and assist with long, complex tasks like code migrations and refactors.

It also comes with enhanced security awareness. For now, it’s available in Codex surfaces for paid ChatGPT users, with API access planned to roll out later. This indicates OpenAI's focus on making its models essential tools for software development teams.

Real-world experiences: A mixed bag of user feedback

Benchmarks are one thing, but the real test is how a model performs for actual people in their daily work. Since GPT 5.2’s release, the feedback on developer forums and in publications like Medium has been varied.

Many users are running into issues that benchmarks simply don't capture, creating a gap between the advertised performance and day-to-day reality.

Concerns about tone and increased refusals

One of the common complaints is about the model's personality. Many users describe its tone as "flatter and over-sanitized," as if it’s been trained to be so cautious that it's less able to improvise or show creativity.

"I don't want a Yes Man, but I also don't want a suspicious, paranoid assistant who thinks I'm jailbreaking every request, even borderline ones. We were talking about online scams, I asked him to explain this scam in a simple way and he said 'I can't encourage scams'... Damn, but I asked him to explain it simply, not to scam!!!"

This ties into another major issue: increased refusals. Users report that the model is now overly cautious and will sometimes refuse to discuss topics or complete tasks that previous versions handled. For anyone relying on the model for creative writing, brainstorming, or exploring nuanced topics, this can present challenges. It feels like a step back in usability for some users, even if the underlying technology is more powerful.

Inconsistent performance and context issues

Even more concerning are the reports of inconsistent performance. Some users feel that for their specific tasks, the model seems less effective than GPT-4. It might perform well on a benchmark test but then struggle with a common-sense problem.

"Most annoying thing I’ve noticed is that I ask it something and it replies. I then ask it something else and it answers the first question along with the second question."

Another frequent complaint is that the model loses context or contradicts itself during long conversations. This is unexpected, given OpenAI's claims about its performance on long-context benchmarks. As one reviewer put it, "benchmarks are clean. Real documents are not." Real-world use cases are messy, and it seems the model can still get tripped up. This inconsistency makes it difficult for businesses to build reliable workflows on top of the raw model. It may require more careful prompt engineering to get the desired output.

For business applications like AI for customer service, predictability is important. Specialized platforms like eesel AI are designed to provide consistent, on-brand performance. These platforms learn directly from a company's own data and past successful conversations to deliver tailored responses.

Understanding the pricing and accessibility

Putting performance aside, the cost and accessibility of a new model are huge factors for any business. GPT 5.2 comes with a new pricing structure that positions it as a premium, enterprise-grade model. Let’s take a look at the numbers from OpenAI's official pricing page.

API pricing structure

The standard API pricing for the new models is significantly higher than previous versions, signaling that this power comes at a cost. For businesses that can handle asynchronous tasks (meaning they don't need an instant response), OpenAI also offers a Batch API that provides a 50% discount, which is an incentive for non-urgent workloads.

Here’s a breakdown of the standard costs per million tokens for input and output. The table highlights the significant cost difference between the models.

| Model | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
| gpt-5.2-pro | $21.00 | $168.00 |
| gpt-5.2 | $1.75 | $14.00 |
| gpt-5-mini | $0.25 | $2.00 |
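To turn the table into a budget figure, a small estimator like the one below can help. The prices are copied from the table above; the workload (10,000 requests at 2,000 input and 500 output tokens each) is a made-up example, and the sketch assumes the 50% Batch API discount applies equally to input and output tokens.

```python
# Prices in USD per 1M tokens (input, output), copied from the table above
PRICES = {
    "gpt-5.2-pro": (21.00, 168.00),
    "gpt-5.2": (1.75, 14.00),
    "gpt-5-mini": (0.25, 2.00),
}
BATCH_DISCOUNT = 0.5  # assumption: applied uniformly to input and output

def estimate_cost(model, input_tokens, output_tokens, batch=False):
    """Estimate the USD cost of a workload from its total token counts."""
    input_price, output_price = PRICES[model]
    cost = (input_tokens * input_price + output_tokens * output_price) / 1_000_000
    return cost * (1 - BATCH_DISCOUNT) if batch else cost

# Hypothetical workload: 10,000 requests, 2,000 input and 500 output tokens each
requests = 10_000
total_input = requests * 2_000
total_output = requests * 500

for model in PRICES:
    standard = estimate_cost(model, total_input, total_output)
    batched = estimate_cost(model, total_input, total_output, batch=True)
    print(f"{model}: ${standard:,.2f} standard, ${batched:,.2f} batch")
```

For this hypothetical workload, the standard estimate comes to $105 for gpt-5.2, versus $1,260 for gpt-5.2-pro and $15 for gpt-5-mini.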

What this pricing means for businesses

So, what does this pricing really mean? GPT 5.2 is powerful, but it is a raw material. The variable, token-based cost can make budgeting for AI challenging, especially if model inconsistencies mean you have to run the same prompt multiple times to get a usable result. Every attempt costs money.
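The effect of retries on a budget can be approximated with some simple arithmetic. The sketch below assumes each attempt independently produces a usable result with a fixed probability, in which case the expected number of attempts is one divided by that probability. The token counts and success rates are illustrative assumptions, not measurements of GPT 5.2; the prices are the `gpt-5.2` rates of $1.75 and $14.00 per million tokens.

```python
def cost_per_attempt(input_tokens, output_tokens, input_price, output_price):
    """Cost in USD of one call, with prices given per 1M tokens."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000

def expected_cost_per_usable_result(attempt_cost, success_rate):
    """Expected spend until the first usable result, assuming independent retries."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return attempt_cost / success_rate

# Hypothetical request: 2,000 input tokens and 500 output tokens on gpt-5.2
attempt = cost_per_attempt(2_000, 500, 1.75, 14.00)

for rate in (1.0, 0.8, 0.5):
    expected = expected_cost_per_usable_result(attempt, rate)
    print(f"usable rate {rate:.0%}: ${expected:.4f} per usable result")
```

Under this simple model, a prompt that only yields a usable answer half the time effectively doubles the per-result cost, before counting the engineering time spent checking outputs.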

The higher price of gpt-5.2-pro, especially for its output, means it is likely best suited for very specific, high-value tasks where the cost can be justified. For many day-to-day business needs, it may not be necessary.

Businesses seeking predictable AI costs may consider different models. For example, some services like eesel AI offer interaction-based pricing. With this model, costs are tied to outcomes (like a resolved ticket) rather than token computation. This approach can simplify cost forecasting and ROI measurement.

Is GPT 5.2 a powerful tool that requires careful handling?

So, what's the final takeaway on GPT 5.2? On one hand, it sets new records on technical benchmarks and represents an incredible feat of engineering. On the other, it also presents some challenges with consistency and usability in real-world applications, with some early adopters reporting a gap between benchmark performance and their experience.

For technical teams with the time, budget, and expertise to experiment, fine-tune prompts, and build extensive guardrails, it offers a ton of power.

However, many businesses are not looking to work with a raw AI model directly. They're looking to solve specific problems, like reducing support tickets or accessing internal knowledge. The goal is the outcome, not the process.

An alternative to building from scratch is using a specialized solution. eesel AI offers products designed for specific business needs. It learns from company data to provide responses tailored to the organization's context. See how an AI Agent can start resolving your support tickets today.

To see some of these real-world tests in action, this video from Fireship offers a quick and insightful breakdown of GPT 5.2's performance, highlighting both its strengths and where it seems to fall short of the hype.

Frequently asked questions

GPT 5.2 is OpenAI's latest series of language models, designed for complex professional work. It's not a single model but a family (Instant, Thinking, Pro, and mini) that promises big improvements in coding, reasoning, and understanding long documents compared to older versions like GPT-4.

According to OpenAI's benchmarks, yes. They released a specialized model, GPT-5.2-Codex, which scored higher on difficult software engineering tests. However, some developers in the real world have reported mixed results, so your experience may vary.

This seems to come down to a few things. Users have pointed out that the tone of GPT 5.2 can feel "flat" or overly cautious, leading it to refuse certain tasks. Others have found it struggles with consistency and can lose context in long conversations, which isn't always captured in clean benchmark tests.

The pricing for GPT 5.2 varies by model. The standard `gpt-5.2` model costs $1.75 for input and $14.00 for output per million tokens. The most powerful version, `gpt-5.2-pro`, is much more expensive at $21.00 for input and $168.00 for output per million tokens.

While possible, the model's performance variability and token-based costs are factors to consider for critical functions like customer support. Businesses often use specialized platforms, such as eesel AI, which are trained on company-specific data to provide consistent responses for these use cases.


Article by

Stevia Putri

Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.