I tested 5 Claude Opus 4.5 alternatives: Here's the best AI for coding in 2026

Kenneth Pangan

Katelin Teen

Last edited January 6, 2026

Claude Opus 4.5 is a powerful model for heavy-duty coding and complex, agent-like tasks. However, its capabilities come at a significant cost. With API costs at $5 per million input tokens and $25 per million for output, the expenses can accumulate quickly. For solo developers, small teams, or budget-conscious companies, that cost can be a roadblock.
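To see how quickly this adds up, here is a worked example using the per-token prices above. The monthly volume of 10 million input tokens ($T_{\text{in}}$) and 2 million output tokens ($T_{\text{out}}$) is a hypothetical workload chosen for illustration, not a measured figure; $p_{\text{in}}$ and $p_{\text{out}}$ are the prices per million tokens.

```latex
\begin{aligned}
\text{cost} &= \frac{T_{\text{in}}}{10^{6}}\,p_{\text{in}} + \frac{T_{\text{out}}}{10^{6}}\,p_{\text{out}} \\
\text{cost}_{\text{Opus 4.5}} &= 10 \times \$5 + 2 \times \$25 = \$50 + \$50 = \$100
\end{aligned}
```

Output tokens make up only a sixth of this workload yet account for half the bill, which is why output-heavy agentic runs get expensive fastest.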

Opus is too expensive now, it really should have been 1.6x Oh well was fun while it lasted

This has led to an exploration of practical alternatives that offer a balance of performance and cost. This article evaluates five leading contenders on real-world coding challenges, from simple functions to complex, multi-file software projects.

Here, we will break down GPT-5.2 Codex, Gemini 3 Pro, Devstral 2, GLM-4.7, and MiniMax M2.1. This comparison aims to help you select the right AI partner for your workflow and budget.

What is Claude Opus 4.5 and why consider alternatives?

Opus 4.5 is considered a top-tier model, especially for professional developers. It performs well on coding benchmarks, scoring an impressive 80.9% on SWE-bench Verified, and its massive 200K context window allows it to handle long, complex tasks without performance degradation. Its new hybrid reasoning capabilities are also a huge help for particularly tricky problems.

This brings us to the primary reason for considering alternatives: cost. The API pricing is higher than that of many competitors, and while the model is powerful, these costs can escalate quickly if you run agentic workflows or other high-volume tasks. This infographic illustrates the trade-off between its high-end capabilities and its premium cost, a key reason for exploring Claude Opus 4.5 alternatives.

Criteria for evaluating Claude Opus 4.5 alternatives

Choosing a suitable alternative involves more than just selecting the model with the highest performance metrics. It requires a balance of key factors that impact daily development workflows. Here’s what I looked at:

- Coding & reasoning performance: How well does it actually code? I'm talking about complex, multi-file software engineering tasks. To keep things objective, I'm referencing scores from well-respected benchmarks like SWE-bench Verified and Terminal-Bench 2.0.

- Workflow integration: How does it actually fit into your setup? Is it a smooth integration into an IDE like VS Code, or is it more of a command-line tool? The less friction, the better.

- Cost-effectiveness: I compared the cost per million tokens to assess overall value and identify cost-effective solutions.

- Flexibility & control: Are open weights available, so you can run the model locally for better privacy, customization, and control?

A comparison of the top Claude Opus 4.5 alternatives

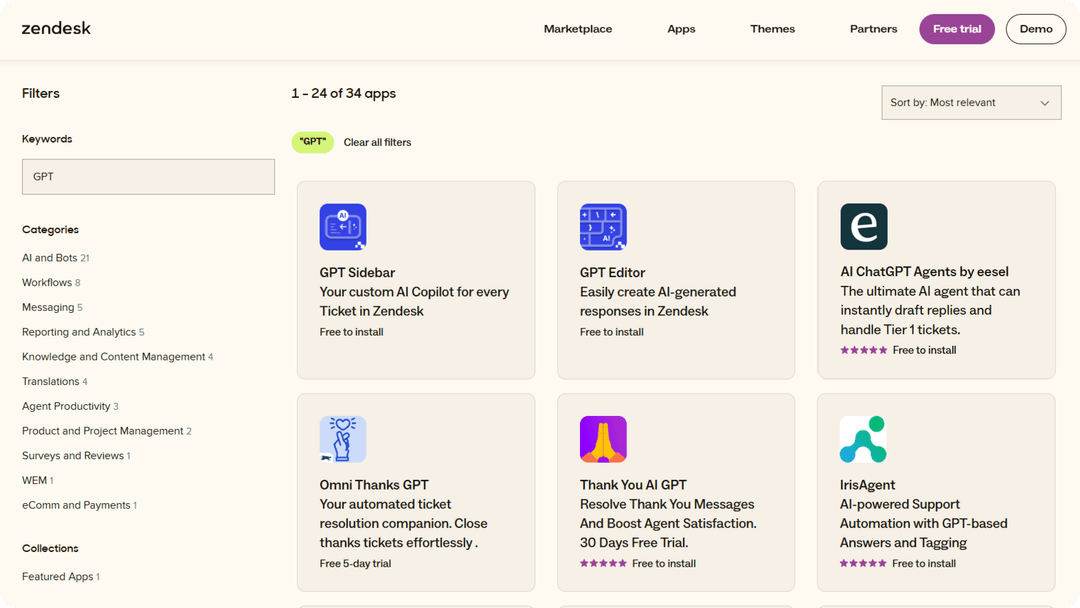

This table provides a high-level comparison of the key specifications for each model.

| Model | Key Strength | SWE-bench Verified | Context Window | Cost (Input / Output, USD per 1M Tokens) |
|---|---|---|---|---|
| GPT-5.2 Codex | Agentic coding & cybersecurity | 80.0% | 128K | $1.75 / $14.00 |
| Gemini 3 Pro | Multimodal & long context | 74.2% | Up to 2M | Via Google AI Studio |
| Devstral 2 | Open-weight & agentic focus | 53.8% | 32K | Via Mistral Platform |
| GLM-4.7 | "Deep Thinking" & cost-efficiency | 73.8% | 205K | $0.60 / $2.20 |
| MiniMax M2.1 | Multi-language & UI generation | 61.0% (M2) | ~200K | $0.30 / $1.20 |
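To turn the per-token prices into something closer to a monthly bill, the sketch below prices one workload across every model that has a listed per-token rate. It assumes the same hypothetical workload for each model (10M input and 2M output tokens); in practice, models can use different numbers of tokens for the same task. Gemini 3 Pro and Devstral 2 are left out because the table gives no per-token price for them.

```python
# Per-million-token prices in USD (input, output), copied from the table above
# plus the Opus 4.5 prices quoted in the introduction. Gemini 3 Pro and
# Devstral 2 are omitted because no per-token prices are listed for them.
PRICES_PER_MILLION: dict[str, tuple[float, float]] = {
    "Claude Opus 4.5": (5.00, 25.00),
    "GPT-5.2 Codex": (1.75, 14.00),
    "GLM-4.7": (0.60, 2.20),
    "MiniMax M2.1": (0.30, 1.20),
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a workload at the model's listed API prices."""
    price_in, price_out = PRICES_PER_MILLION[model]
    return input_tokens / 1_000_000 * price_in + output_tokens / 1_000_000 * price_out


def compare(input_tokens: int, output_tokens: int) -> list[tuple[str, float]]:
    """Return (model, cost) pairs sorted from cheapest to most expensive."""
    costs = [(m, workload_cost(m, input_tokens, output_tokens)) for m in PRICES_PER_MILLION]
    return sorted(costs, key=lambda pair: pair[1])


if __name__ == "__main__":
    # Hypothetical workload: 10M input tokens and 2M output tokens per month.
    for model, cost in compare(10_000_000, 2_000_000):
        print(f"{model:<16} ${cost:,.2f}")
```

For this workload the ranking runs MiniMax M2.1 ($5.40), GLM-4.7 ($10.40), GPT-5.2 Codex ($45.50), and Claude Opus 4.5 ($100.00).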

The 5 best alternatives to Claude Opus 4.5 in 2026

Each of these models has unique strengths, and the ideal choice depends on your specific project requirements and priorities.

1. GPT-5.2 Codex

GPT-5.2 Codex is OpenAI's model designed specifically for professional software engineering. It is suitable for demanding tasks like large-scale refactors, complex migrations, and defensive cybersecurity tasks.

It performs extremely well, hitting an impressive 80.0% on SWE-bench Verified and 64.0% on Terminal-Bench 2.0. Its vision capabilities are also excellent, meaning it can interpret UI designs and technical diagrams with ease. Its primary advantage is its strong reasoning and reliability for extended professional workflows. As a proprietary model, it cannot be customized or run locally. While more affordable than Opus 4.5, it remains a premium-priced option.

Gpt4o seems drunk and will ignore important details and just spew out some code. I'd correct it again, then again, then again, then we might have a solution.

Pricing-wise, it's available through an API at $1.75 per million input tokens and $14.00 for output. This makes it a more cost-effective option than Claude for comparable tasks.
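Taking the listed prices at face value, the per-token saving over Opus 4.5 works out as follows. The ratios compare prices only. The saving on a real task also depends on how many tokens each model consumes to finish it, which these numbers do not capture.

```latex
\begin{aligned}
\frac{p_{\text{in}}^{\text{GPT-5.2 Codex}}}{p_{\text{in}}^{\text{Opus 4.5}}} &= \frac{1.75}{5.00} = 0.35 \\
\frac{p_{\text{out}}^{\text{GPT-5.2 Codex}}}{p_{\text{out}}^{\text{Opus 4.5}}} &= \frac{14.00}{25.00} = 0.56
\end{aligned}
```

That is 65% cheaper per input token and 44% cheaper per output token, so the advantage shrinks for output-heavy work.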

Best for: Professional developers and enterprise teams working on large, mission-critical codebases who need a reliable and intelligent coding partner.

2. Gemini 3 Pro

Gemini 3 Pro is Google's entry in the AI coding space, and its standout features are its native multimodal capabilities and large context window.

This model can understand video and images right alongside your code, which is a unique and helpful feature for certain types of development. It scored a very competitive 74.2% on SWE-bench Verified, and some analyses suggest it's especially good at tasks that require the entire project to be held in context. Its main strengths are speed and visual understanding, making it a fantastic choice for UI/UX work or analyzing visual assets. Its API is billed through Google's cloud platforms, so its pricing may be structured differently from the standalone per-token pricing of other providers.
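Before relying on a long context window to hold an entire project, it helps to estimate how many tokens the project actually takes up. The sketch below makes a rough estimate, assuming about 4 characters per token. That ratio is a common rule of thumb, not any model's real tokenizer. The context sizes come from the comparison table, and the 80% headroom reserved for the prompt and the reply is likewise an arbitrary assumption.

```python
from pathlib import Path

# Rough heuristic (an assumption, not a real tokenizer): ~4 characters per token.
CHARS_PER_TOKEN = 4

# Context windows in tokens, taken from the comparison table above
# (Gemini 3 Pro is listed there as "up to 2M").
CONTEXT_WINDOWS = {
    "Gemini 3 Pro": 2_000_000,
    "GLM-4.7": 205_000,
    "GPT-5.2 Codex": 128_000,
}


def estimate_tokens(root: str, suffixes: tuple[str, ...] = (".py", ".ts", ".md")) -> int:
    """Estimate the token count of every file under root with a matching suffix."""
    total_chars = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total_chars += len(path.read_text(encoding="utf-8", errors="ignore"))
    return total_chars // CHARS_PER_TOKEN


def models_that_fit(estimated_tokens: int, headroom: float = 0.8) -> list[str]:
    """Return models whose window holds the project while leaving room for the prompt and reply."""
    return [name for name, window in CONTEXT_WINDOWS.items() if estimated_tokens <= window * headroom]


if __name__ == "__main__":
    tokens = estimate_tokens(".")
    print(f"~{tokens:,} tokens; fits in: {models_that_fit(tokens)}")
```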

You can get access through Google AI Studio and Vertex AI. For individual use, it's available via the Gemini Advanced plan.

Best for: Developers working on multimedia apps, UI/UX designers, or anyone who needs to process visual inputs alongside code at high speed.

3. Devstral 2

Devstral 2 from Mistral AI is a frontier model designed specifically for agentic software engineering, with a focus on open-source principles.

It was built for coding agents and performs well for an open-weight model, scoring 53.8% on SWE-bench Verified. It also integrates with popular IDEs like VS Code and JetBrains, so it fits right into existing workflows. A key advantage is its open, customizable nature: you have more control and can even self-host it for privacy or performance reasons. As a specialized tool, its ecosystem is less mature than those of larger providers, and its benchmark scores are currently lower than those of some top proprietary models.

It's available as an open-weight model to self-host or you can access it through Mistral's platform and partners like AWS, Azure, and Google Cloud.

Best for: Developers who love the command line, want an open-source AI agent, or need to run a powerful model locally for privacy and control.

4. GLM-4.7

GLM-4.7 from Zhipu AI can be described as a "thinking" model. It has a feature that allows it to reason through a problem before it generates a solution, which can lead to more robust and logical code.

Its "Deep Thinking" mode can be toggled in the API to improve stability on long-horizon tasks. It puts up strong numbers, scoring 73.8% on SWE-bench Verified, and comes with a generous 205K context window. It also has features specifically optimized for generating clean, modern UIs. The key advantage is that explicit reasoning step, which helps in complex, multi-step workflows. The extra step can add latency, but the reasoning can be streamed to reduce the perceived wait time.
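Here is a sketch of what toggling the reasoning step might look like at the request level. It assumes an OpenAI-style chat-completions endpoint with a `thinking` field, and treats the URL, the model ID `glm-4.7`, and the exact field shape as assumptions to check against the current Z.ai documentation. The code only builds the request, using Python's standard `urllib.request.Request`, and never sends it.

```python
import json
import urllib.request

# Assumptions: endpoint, model ID and the "thinking" field follow Zhipu's
# OpenAI-style chat API as we understand it; verify before use.
API_URL = "https://api.z.ai/api/paas/v4/chat/completions"
MODEL_ID = "glm-4.7"


def build_glm_request(api_key: str, prompt: str, deep_thinking: bool = True,
                      stream: bool = True) -> urllib.request.Request:
    """Build (but do not send) a chat request with the reasoning step on or off."""
    body = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        # Assumed switch for the "Deep Thinking" mode.
        "thinking": {"type": "enabled" if deep_thinking else "disabled"},
        # Streaming returns tokens as they are generated, so the reasoning
        # appears incrementally instead of after one long pause.
        "stream": stream,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_glm_request("YOUR_API_KEY", "Split this module into smaller functions.")
    print(req.full_url)
    print(req.data.decode("utf-8"))
```

Turning `deep_thinking` off for quick edits and on for multi-step refactors is one way to trade latency against robustness per task.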

It is also very cost-effective, at just $0.60 per million input tokens and $2.20 for output.

Best for: Teams building complex AI agents and developers working on tricky, multi-step coding problems where logical coherence is more important than raw speed.

5. MiniMax M2.1

MiniMax M2.1 is a highly efficient model. It excels at multi-language programming and UI/UX generation while being one of the most affordable options out there.

It has enhanced support for languages beyond Python, including Rust, Java, Go, and C++. Its "Vibe Coding" feature is useful for generating high-quality UIs, and it offers open-source weights for local deployment. It even offers an Anthropic-compatible API format to simplify migration. Its main advantages are its performance-to-cost ratio and strong multi-language support. As an efficient MoE (Mixture of Experts) model, it is very fast, though it may not match the reasoning power of larger models on very deep, single-topic problems. On SWE-bench Verified, the table above lists a solid 61.0%, a figure reported for its predecessor, M2.
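An Anthropic-compatible API means that moving from Claude can be as simple as changing the base URL, the key, and the model name, while the request body keeps the same shape. The sketch below builds, without sending, two requests in the Anthropic Messages format using Python's standard `urllib.request`. The MiniMax base URL and the model IDs `MiniMax-M2.1` and `claude-opus-4-5` are assumptions to confirm against each provider's documentation.

```python
import json
import urllib.request

ANTHROPIC_BASE_URL = "https://api.anthropic.com"
# Assumed MiniMax endpoint for its Anthropic-compatible API; confirm in MiniMax's docs.
MINIMAX_BASE_URL = "https://api.minimax.io/anthropic"


def build_messages_request(base_url: str, api_key: str, model: str, prompt: str,
                           max_tokens: int = 1024) -> urllib.request.Request:
    """Build (but do not send) a POST to an Anthropic-style /v1/messages endpoint."""
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


# Same prompt, same body shape; only the base URL, key and (assumed) model ID change.
prompt = "Write a Go HTTP server with graceful shutdown."
opus_req = build_messages_request(ANTHROPIC_BASE_URL, "ANTHROPIC_KEY", "claude-opus-4-5", prompt)
minimax_req = build_messages_request(MINIMAX_BASE_URL, "MINIMAX_KEY", "MiniMax-M2.1", prompt)
```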

The pricing is very competitive, at just $0.30 per million input tokens and $1.20 for output, providing significant capabilities at a lower cost than premium models.

Best for: Full-stack developers working in multiple programming languages, frontend engineers focused on UI/UX, and any team looking for a powerful, cost-effective, and self-hostable solution.

For those looking to see these models in action, this video offers a practical comparison and discusses which alternatives might be best depending on your specific coding needs.

How to choose the right AI assistant

There is no single "best" model for all use cases. The right choice depends on the specific task. For enterprise-grade complexity, GPT-5.2 is a suitable option. For speed and visual workflows, Gemini is a strong choice. For users who value control and open-source models, Devstral is a viable option, and for budget-conscious users, GLM-4.7 and MiniMax M2.1 offer excellent value.

A recommended practice is to use the right tool for the job. These general-purpose coding assistants are effective for writing code. However, a developer's work extends beyond coding. You also have to write documentation, create blog posts to announce new features, and answer questions from your community. For content-related tasks, a specialized tool can be more effective.

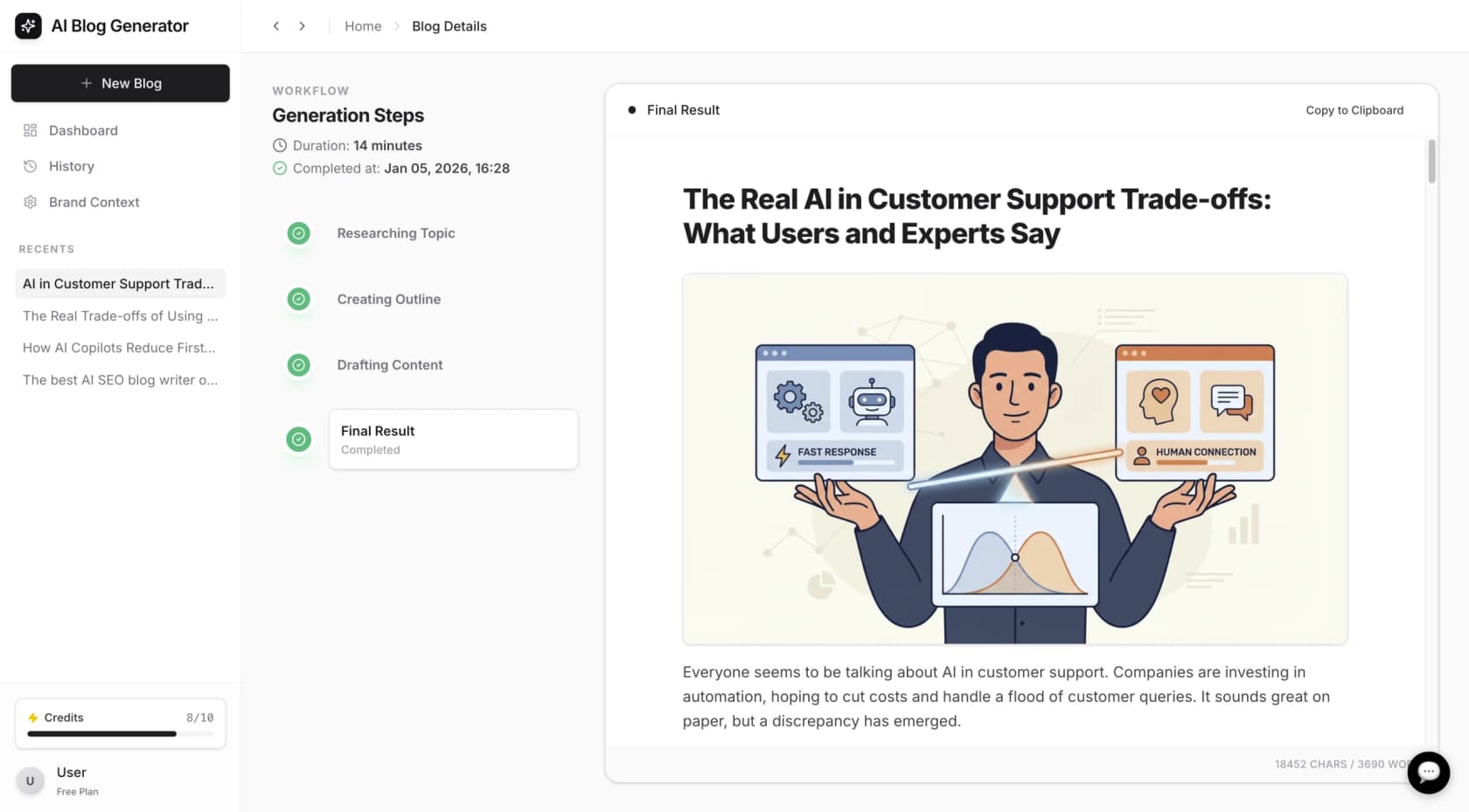

For example, while an AI coding assistant is used to build an application, a tool like the eesel AI Blog Writer can be used to generate launch announcements and technical documentation from a single keyword. Such tools are designed for creating SEO-optimized content, allowing coding assistants to be used for their primary purpose: writing code.

Final thoughts: A hybrid approach to AI coding assistants

The AI coding landscape in 2026 is rich and diverse. Relying on a single, high-cost model like Claude Opus 4.5 may not be necessary or cost-effective for all users.

An effective workflow is often a hybrid one. This involves using a "model chain" or a multi-tool approach. Use a powerful model like GPT-5.2 for architectural planning, a fast one like Gemini for frontend iteration, and a cost-effective one like MiniMax for routine tasks.
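A model chain can start as nothing more than a routing table that sends each kind of task to the model suggested for it. The sketch below encodes the split described above. The task categories and the `pick_model`/`plan_chain` helpers are hypothetical, and the model names are display labels rather than exact API model IDs.

```python
from enum import Enum


class Task(Enum):
    ARCHITECTURE = "architecture"
    FRONTEND = "frontend"
    ROUTINE = "routine"


# Hypothetical routing table mirroring the split suggested above.
ROUTES = {
    Task.ARCHITECTURE: "GPT-5.2 Codex",
    Task.FRONTEND: "Gemini 3 Pro",
    Task.ROUTINE: "MiniMax M2.1",
}


def pick_model(task: Task) -> str:
    """Return the model label assigned to a task category."""
    return ROUTES[task]


def plan_chain(steps: list[tuple[str, Task]]) -> list[tuple[str, str]]:
    """Pair each step of a job with the model it should be sent to."""
    return [(description, pick_model(task)) for description, task in steps]


if __name__ == "__main__":
    job = [
        ("Design the service boundaries", Task.ARCHITECTURE),
        ("Iterate on the settings page", Task.FRONTEND),
        ("Write unit tests for the parser", Task.ROUTINE),
    ]
    for step, model in plan_chain(job):
        print(f"{model:<14} <- {step}")
```

Keeping the routing in a single table makes it cheap to swap a model later, for example moving routine work to GLM-4.7, without touching the rest of the workflow.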

I’ll often take a response from Opus or Gemini Advanced and then feed it into GPT 4 and ask it to evaluate the accuracy of the other AI. Then it will fix the code or whatever, and I’ll take it back to Claude and it will often apologise and treat me like I’m some sort of genius because ‘I’ found a better way to do it.

Building software requires both coding and communication. While these AI assistants can enhance development, other tools like eesel AI can assist with communication needs. From answering internal questions with our AI Internal Chat to creating all your customer-facing content with our AI Blog Writer, you can cover all your bases.

Frequently asked questions

For startups on a budget, GLM-4.7 and MiniMax M2.1 are excellent choices. They offer impressive performance at a fraction of the cost of premium models, with API pricing as low as $0.30 per million input tokens.

Absolutely. Devstral 2 is a powerful open-weight model designed for this purpose. You can run it on your own infrastructure, giving you full control over your data and privacy. MiniMax M2.1 also offers open-source weights for local deployment.

If performance on complex, enterprise-level tasks is your top priority, GPT-5.2 Codex is the strongest choice in this comparison. It scores nearly as high as Opus 4.5 on benchmarks like SWE-bench Verified and is built for heavy-duty software engineering.

Yes, Gemini 3 Pro is the standout here. Its native multimodal capabilities allow it to process video and images alongside code, making it perfect for UI/UX work or any development that involves visual assets.

The best approach is to match the tool to the task. Consider a hybrid model: use a high-performance model like GPT-5.2 for complex architecture, a speedy one like Gemini 3 Pro for frontend, and a cost-effective one like MiniMax M2.1 for routine coding.

MiniMax M2.1 is a great option if you work with multiple languages. It has enhanced support for languages like Rust, Java, Go, and C++, making it a versatile choice for full-stack developers.


Article by

Kenneth Pangan

Writer and marketer for over ten years, Kenneth Pangan splits his time between history, politics, and art with plenty of interruptions from his dogs demanding attention.