On November 19, 2025, OpenAI introduced GPT-5.1-Codex-Max, its new coding model, which the company positions as a substantial advancement in AI-assisted coding.

It’s purpose-built for long, complicated software engineering jobs. A key feature is "compaction," which helps the model keep working coherently across millions of tokens without losing track of the task.

In this post, we'll get into what GPT-5.1-Codex-Max is, look at its new features, see how it compares to competitors like Google's Gemini 3 Pro and Anthropic's Claude Opus 4.5, and consider what this type of AI means for businesses outside of coding.

What is GPT 5.1 Codex Max?

GPT-5.1-Codex-Max differs from general-purpose models like ChatGPT. It is a highly specialized AI agent built on an updated foundational reasoning model. It’s been trained specifically for agentic tasks in software engineering, math, and research. Think of it less as a chatbot and more like a junior developer you can pair program with.

It’s designed to live inside developer environments like the Codex CLI, IDE extensions, cloud services, and code review tools. This means it works where developers spend their time, helping with the detailed aspects of building software.

It is designed to handle long, detailed projects that can be challenging for other AI models. These tasks include project-wide code refactoring, deep debugging sessions, and building entire features from scratch. It’s meant to be an autonomous partner, not just a tool that autocompletes a line of code. As the new default model in all Codex surfaces, it offers increased speed and token-efficiency compared to its predecessor, GPT-5.1-Codex.

The key features of GPT 5.1 Codex Max

The release of GPT-5.1-Codex-Max centers on three changes to how the model handles complex, multi-step tasks: stronger agentic coding, compaction for long-running work, and better speed and cost-efficiency.

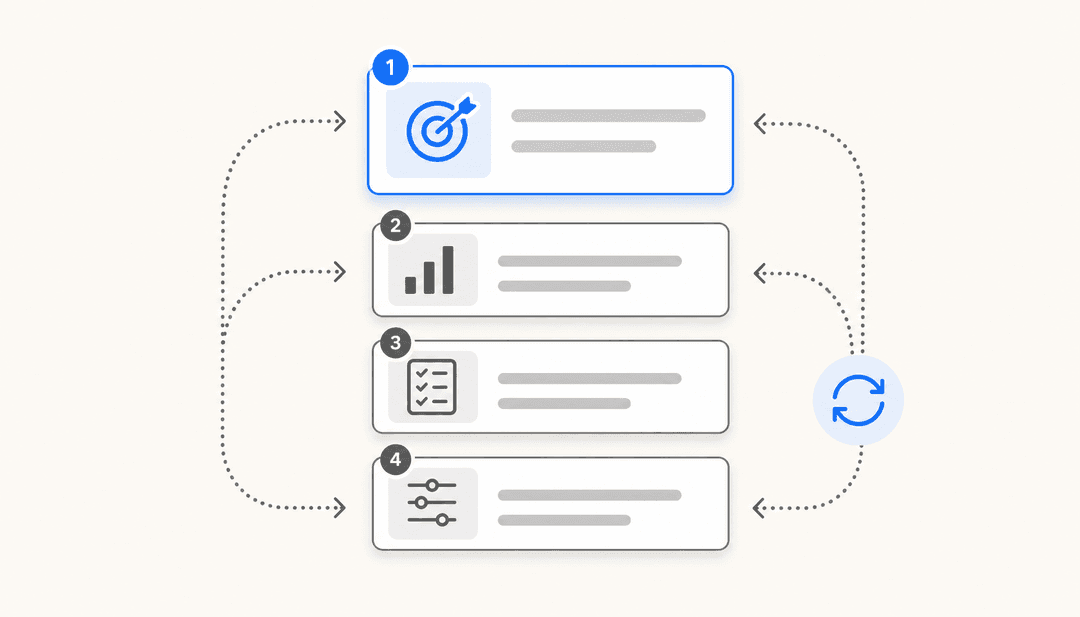

Agentic coding capabilities

What does "agentic coding" mean? It’s the AI's ability to plan, write, test, and fix code on its own, with minimal human guidance. Instead of only responding to specific prompts, it can take a broad goal and independently determine the necessary steps to achieve it.
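The plan, write, test, fix cycle described above can be sketched as a small loop. This is only an illustration of the control flow, not how Codex works internally: `run_agent`, `AgentRun`, and the `toy_*` helpers are hypothetical names, and the "fix" step here is a hard-coded string replacement standing in for a model call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentRun:
    goal: str
    log: list[str] = field(default_factory=list)


def run_agent(
    goal: str,
    plan: Callable[[str], list[str]],
    write: Callable[[list[str]], str],
    test: Callable[[str], list[str]],
    fix: Callable[[str, list[str]], str],
    max_attempts: int = 5,
) -> tuple[str, AgentRun]:
    """Plan once, write a first draft, then alternate test and fix."""
    run = AgentRun(goal)
    steps = plan(goal)
    run.log.append(f"plan: {steps}")
    code = write(steps)
    for attempt in range(1, max_attempts + 1):
        failures = test(code)
        if not failures:
            run.log.append(f"attempt {attempt}: tests pass")
            return code, run
        run.log.append(f"attempt {attempt}: {len(failures)} failing")
        code = fix(code, failures)
    raise RuntimeError(f"gave up on {goal!r} after {max_attempts} attempts")


# Toy stand-ins (hypothetical): a real agent would call a model at each step.
def toy_plan(goal: str) -> list[str]:
    return ["define add(a, b)", "check add(2, 3) == 5"]


def toy_write(steps: list[str]) -> str:
    # Deliberately buggy first draft so the loop has something to fix.
    return "def add(a, b):\n    return a - b\n"


def toy_test(code: str) -> list[str]:
    namespace: dict = {}
    exec(code, namespace)  # acceptable for a toy; never exec untrusted code
    return [] if namespace["add"](2, 3) == 5 else ["add(2, 3) != 5"]


def toy_fix(code: str, failures: list[str]) -> str:
    return code.replace("a - b", "a + b")


final_code, run = run_agent("implement add", toy_plan, toy_write, toy_test, toy_fix)
for line in run.log:
    print(line)
```

The point of the sketch is that the human supplies only the goal; the loop decides when to retest and when to stop.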

The performance numbers illustrate this capability. On industry benchmarks, it achieves high scores, as shared in OpenAI's official announcement:

- SWE-bench Verified: 77.9%
- SWE-Lancer IC SWE: 79.9%
- Terminal-Bench 2.0: 58.1%

These benchmarks are not purely theoretical. SWE-bench, for example, checks the model's skill at solving real software engineering problems taken from actual GitHub issues, so a high score reflects work that resembles a developer's day-to-day tasks.

Another significant update is its training for Windows environments, making it the first OpenAI model with this capability. This is a notable improvement for the large community of developers who use Windows.

Long-running tasks with compaction

A common challenge with large language models is the limitation of the context window. It's like a short-term memory; once it's full, the AI starts forgetting what you talked about at the beginning. This can be a significant limitation for coding tasks that span several hours.

GPT-5.1-Codex-Max addresses this with a feature called "compaction." It is a process where the model continuously refines its operational history, retaining the most relevant context while discarding extraneous information. This lets it work coherently over millions of tokens for a long time.

You can think of it like the AI taking its own notes as it works. It keeps track of the main goal, key variables, and important decisions, so it doesn't lose sight of the objective, even if a task is very long.
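To make the note-taking analogy concrete, here is a minimal sketch of the general idea of history compaction. OpenAI has not published the mechanism in this level of detail, so everything here is an assumption for illustration: the `Entry` type, the `pinned` flag, the word-count stand-in for a tokenizer, and the placeholder summary (a real system would presumably have the model write the summary itself).

```python
from dataclasses import dataclass


@dataclass
class Entry:
    text: str
    pinned: bool = False  # pinned entries (goal, key decisions) are never dropped


def count_tokens(text: str) -> int:
    # Crude stand-in for a tokenizer: one token per whitespace-separated word.
    return len(text.split())


def compact(history: list[Entry], budget: int, keep_recent: int = 2) -> list[Entry]:
    """Collapse older, unpinned entries into one summary when over budget.

    Pinned entries and the last `keep_recent` entries are kept verbatim.
    """
    total = sum(count_tokens(e.text) for e in history)
    if total <= budget:
        return history

    cutoff = max(len(history) - keep_recent, 0)
    old, recent = history[:cutoff], history[cutoff:]
    dropped = [e for e in old if not e.pinned]
    if not dropped:
        return history  # nothing safe to discard

    summary = Entry(f"[summary of {len(dropped)} earlier steps]")
    compacted: list[Entry] = []
    summary_added = False
    for entry in old:
        if entry.pinned:
            compacted.append(entry)
        elif not summary_added:
            # The summary takes the position of the first dropped entry.
            compacted.append(summary)
            summary_added = True
    return compacted + recent


history = [
    Entry("Goal: migrate the billing module to the new API", pinned=True),
    Entry("Ran the test suite and saw twelve failures in invoices"),
    Entry("Read invoices.py and traced the failures to a renamed field"),
    Entry("Decision: keep the old field name as an alias", pinned=True),
    Entry("Patched invoices.py to use the alias"),
    Entry("Re-ran tests and two failures remain"),
]

for entry in compact(history, budget=40):
    print(entry.text)
```

In this toy run the goal and the key decision survive, the two exploratory steps are replaced by a single summary line, and the two most recent steps stay intact.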

How long can it run? In their own tests, OpenAI observed the model work on one task for more than 24 hours, constantly adjusting and improving its work until it was done. This demonstrates a level of endurance not previously seen in similar models.

Improved speed and cost-efficiency

In addition to performance enhancements, GPT-5.1-Codex-Max offers improvements in cost-efficiency. On the SWE-bench Verified benchmark, it gets better results than the last version at the 'medium' reasoning effort level, and it uses 30% fewer "thinking tokens" to do so.
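As a rough back-of-the-envelope illustration of what a 30% reduction in thinking tokens could mean, the sketch below applies that figure to a made-up workload. The token count and the price per million tokens are hypothetical placeholders, not OpenAI pricing, and the 30% figure was reported for SWE-bench Verified at 'medium' effort, so it may not carry over to other tasks.

```python
def estimate_savings(
    baseline_thinking_tokens: int,
    price_per_million: float,
    reduction: float = 0.30,
) -> dict[str, float]:
    """Estimate thinking-token cost before and after a fractional reduction."""
    new_tokens = round(baseline_thinking_tokens * (1 - reduction))
    baseline_cost = baseline_thinking_tokens / 1_000_000 * price_per_million
    new_cost = new_tokens / 1_000_000 * price_per_million
    return {
        "new_tokens": new_tokens,
        "baseline_cost": baseline_cost,
        "new_cost": new_cost,
        "saved": baseline_cost - new_cost,
    }


# Hypothetical workload: 200,000 thinking tokens at a made-up $10 per million.
estimate = estimate_savings(200_000, price_per_million=10.0)
print(f"tokens after reduction: {estimate['new_tokens']}")
print(f"estimated saving: ${estimate['saved']:.2f}")
```

The estimate covers thinking tokens only; input and output tokens would be billed on top and are not affected by this calculation.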

Users also have more control over reasoning effort. You can stick with 'medium' for everyday tasks or switch to the new 'xhigh' setting for particularly tricky problems where a longer wait for a more comprehensive answer is acceptable.

This efficiency leads to lower costs. For example, OpenAI showed how it can create high-quality frontend designs for much less than it would have cost with the old model. This allows for greater use of the AI for various tasks while managing API costs.

Comparison with other models

Comparing a model to its contemporaries provides context for its capabilities. Here’s a look at how GPT-5.1-Codex-Max measures up against other top models, based on official benchmarks and developer feedback.

Advancements over GPT-5.1-Codex

Developer feedback suggests this is a significant advancement over the previous version.

One developer on Reddit called the new model "epic" after using it to write a 64-bit SMP operating system with over 100,000 lines of code. This shows the model can do more than just repeat code it's seen before. It can understand large, complex systems and devise the programming techniques to build them.

"I use codex to audit everything that CC produces.. it’s been quite effective"

The same developer also shared their workflow, which involved switching between different models (like GPT-5.1-Thinking and Codex) to get the best results. It suggests a new way of working where developers team up with a group of specialized AIs to get things done.

Performance alongside Claude Opus 4.5 and Gemini 3 Pro

The AI field is fast-paced, with intense competition. Just look at the release schedule: Google's Gemini 3 Pro came out on November 18, 2025, OpenAI announced GPT-5.1-Codex-Max the next day on November 19, and Anthropic followed with Claude Opus 4.5 on November 24.

A side-by-side comparison of performance metrics shows the models are closely matched. The SWE-Bench Verified benchmark is a good way to measure them, since it tests how well the models solve real software problems. Here’s how they stack up:

| Model | SWE-Bench Verified Score | Release Announcement |
|---|---|---|
| Claude Opus 4.5 | 80.9% | November 24, 2025 |
| GPT-5.1-Codex-Max | 77.9% | November 19, 2025 |
| Gemini 3 Pro | 76.2% | November 18, 2025 |

Source: Vellum.ai Flagship Model Report

Based on this benchmark, Claude Opus 4.5 has a small lead: 3.0 percentage points over GPT-5.1-Codex-Max, which in turn sits 1.7 points ahead of Gemini 3 Pro. However, all three models represent the current state of the art for AI coding. Each has its own strengths, and the best one depends on the task. This competition provides developers with several high-quality options.

Applying agentic AI in a business context

GPT-5.1-Codex-Max is a powerful tool. But it's also very specialized. It’s an agentic AI made for developers, and effective use requires technical skills and a solid grasp of software engineering.

This raises the question of how similar autonomous AI can be applied to other business functions, such as customer service, in a more accessible way.

While developers utilize agentic coders, AI assistants are also being developed for other business teams. The approach shifts from configuring complex tools to deploying AI that learns from a company's data, similar to onboarding a new employee.

For example, platforms like eesel AI offer an AI teammate for customer service that can be implemented quickly.

By connecting to help desks and knowledge bases, it learns from past tickets, help articles, and internal documents. It learns the business context, rules, and the team's specific tone of voice autonomously.

Just like Codex-Max can spend over 24 hours refactoring a large codebase, an AI Agent from eesel can work 24/7, handling frontline support tickets. A key difference is the method of interaction. eesel AI is managed with plain English instructions rather than code.

Choosing the right AI for the task

GPT-5.1-Codex-Max is a significant step forward for autonomous coding agents. With features like compaction, strong performance on benchmarks, and notable real-world results, it is a valuable tool for developers.


It also highlights a broader trend in AI toward specialized, agentic models designed for specific jobs. The future may involve using specialized AI for specific tasks rather than a single, all-encompassing AI.

For developers, that might be a coding agent like Codex-Max. For customer service teams, it’s an AI teammate that understands their workflows, adopts their communication style, and can be integrated quickly.

Those interested in how an AI teammate can be applied to support processes can explore platforms like eesel AI, which can be configured to manage support issues.

Frequently asked questions

GPT 5.1 Codex Max is a specialized AI agent built for complex software engineering, not a general-purpose chatbot like ChatGPT. Think of it as a junior developer you can pair program with, as it's designed to work directly inside developer environments.

The main features include advanced "agentic coding" capabilities for autonomous work, a "compaction" feature to handle tasks lasting over 24 hours without losing context, and overall improvements to its speed and cost-efficiency.

It uses a feature called "compaction." This process allows the model to summarize and prune its own history as it works, keeping only the most critical information. This lets it work on tasks for extremely long periods, even over 24 hours, without forgetting the main goal.

The models are closely matched. On the SWE-Bench Verified benchmark, Claude Opus 4.5 has a slight edge. However, GPT 5.1 Codex Max performs well, particularly on long, complex tasks. The most suitable model often depends on the specific job you need it for.

Yes! It's the first OpenAI model that has been specifically trained to operate in Windows environments, which is a significant benefit for the large community of developers who use Windows as their primary OS.

It means the AI can proactively plan, write, test, and debug code with minimal human supervision. Instead of just responding to a command, GPT 5.1 Codex Max can take a high-level goal and determine the necessary steps to achieve it on its own.


Article by

Kenneth Pangan

Writer and marketer for over ten years, Kenneth Pangan splits his time between history, politics, and art with plenty of interruptions from his dogs demanding attention.