Claude Sonnet 4.6 is being called the "sweet spot model" for good reason. It delivers roughly 90% of Opus 4.6's capability at a fraction of the cost, making it the default choice for most developers and teams building with AI.

Released in February 2026, Sonnet 4.6 represents a significant leap from its predecessor. Early testers preferred it over Sonnet 4.5 roughly 70% of the time. Even more surprising, users chose it over the flagship Opus 4.5 59% of the time in head-to-head comparisons.

In this review, we'll break down what makes Sonnet 4.6 special, how it performs on real benchmarks, and when you should choose it over Opus. We'll also look at pricing, customer feedback, and how we at eesel AI leverage Claude models to power autonomous customer service agents.

What is Claude Sonnet 4.6?

Claude Sonnet 4.6 sits in the middle of Anthropic's model lineup, positioned between the fast, lightweight Haiku and the premium Opus. Anthropic describes it as offering "frontier performance at practical pricing," and the numbers back that up.

The model launched in February 2026 and immediately became the default for Claude.ai Free and Pro users. It's available across multiple platforms: the Claude API, AWS Bedrock, Google Cloud's Vertex AI, and Microsoft Foundry. This wide availability makes it easy to integrate into existing workflows regardless of your cloud provider.

What sets Sonnet 4.6 apart is its hybrid reasoning architecture. It can produce near-instant responses or engage in extended, step-by-step thinking depending on the task. API users get fine-grained control over the model's thinking effort, letting you balance speed against depth.

The model also introduces a 1 million token context window in beta (API only), enough to hold entire codebases, lengthy contracts, or dozens of research papers in a single request. More importantly, it reasons effectively across all that context, not just the most recent portions.

Key improvements over Sonnet 4.5

Sonnet 4.5 was already a capable model. So what changed? According to Anthropic's research and early customer feedback, the improvements fall into three main categories.

Coding performance leap

Developers with early access preferred Sonnet 4.6 over 4.5 roughly 70% of the time. The model reads context more carefully before modifying code and consolidates shared logic rather than duplicating it. This makes long coding sessions less frustrating because the model maintains coherence across multiple files and changes.

On the hardest bug-finding problems, Sonnet 4.6 improved more than 10 percentage points over its predecessor. For teams running agentic coding at scale, this translates to higher resolution rates and more consistent performance.

Reduced "laziness" and overengineering

One persistent complaint about earlier AI coding assistants was their tendency to overengineer simple solutions or claim success when the code still had issues. Sonnet 4.6 addresses both problems.

Users report fewer false claims of success and less tendency to overengineer. The model follows instructions more consistently and completes multi-step tasks without losing track of the goal. In Claude Code, Anthropic's development environment, users rated Sonnet 4.6 as meaningfully better at instruction following with fewer hallucinations.

Computer use capabilities

In October 2024, Anthropic introduced the first general-purpose computer-using AI model. Sonnet 4.6 represents a major step forward in this capability.

On OSWorld, the standard benchmark for AI computer use, Sonnet 4.6 shows significant gains over 4.5. Early users report human-level capability in navigating complex spreadsheets, filling out multi-step web forms, and coordinating actions across multiple browser tabs.

The model also demonstrates improved resistance to prompt injection attacks, a critical safety consideration for computer use scenarios. Anthropic's safety evaluations show Sonnet 4.6 performs similarly to Opus 4.6 on security metrics.

Benchmarks and performance

Marketing claims are one thing. Hard numbers tell a clearer story. Here's how Sonnet 4.6 performs on the benchmarks that matter for real-world deployment.

Coding benchmarks

Sonnet 4.6 approaches Opus-level performance on software engineering benchmarks. On long-horizon coding evaluations, where every feature builds on previous decisions, it matches Opus 4.5's performance while using fewer tokens and running faster.

The model excels at SWE-bench Verified, a benchmark that tests real-world software engineering tasks drawn from GitHub issues. It also performs strongly on Terminal-Bench 2.0, which evaluates command-line task completion.

For production code review workflows, Sonnet 4.6 meaningfully closes the gap with Opus on bug detection. Teams can run more reviewers in parallel, catch a wider variety of issues, and do it without increasing costs.

Reasoning and agent capabilities

Beyond coding, Sonnet 4.6 demonstrates strong performance on reasoning and agent tasks. On Vending-Bench Arena, a business simulation where AI models compete to maximize profits, Sonnet 4.6 developed a novel strategy: investing heavily in capacity for the first ten simulated months, then pivoting sharply to profitability. This timing helped it finish well ahead of competitors.

For enterprise document comprehension, Sonnet 4.6 matches Opus 4.6 on OfficeQA, which measures how well a model can read enterprise documents (charts, PDFs, tables), extract relevant facts, and reason from those facts. Box reported a 15 percentage point improvement in heavy reasoning Q&A over Sonnet 4.5 when tested across real enterprise documents.

Context window and reasoning

The 1 million token context window (currently in beta on API) opens new use cases. You can feed an entire codebase, a lengthy legal contract, or dozens of research papers into a single request. Unlike some models that technically accept large contexts but lose coherence, Sonnet 4.6 maintains effective reasoning across the full window.

This capability shines for tasks like:

- Cross-file code refactoring where understanding dependencies matters

- Legal document analysis requiring comparison across hundreds of pages

- Research synthesis from multiple papers

- Long-form content creation with consistent tone and references
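Before sending a large bundle of files, it helps to sanity-check whether it plausibly fits in the window. Here is a minimal sketch of that check. The 4-characters-per-token ratio and the `reserved_for_output` default are our assumptions for illustration, not figures from Anthropic, and a real tokenizer will give different counts.

```python
from dataclasses import dataclass

CONTEXT_WINDOW_TOKENS = 1_000_000  # beta window size cited in this article
CHARS_PER_TOKEN = 4                # ASSUMPTION: rough heuristic, not a real tokenizer


@dataclass
class FitReport:
    estimated_tokens: int
    fits: bool
    remaining_tokens: int


def estimate_tokens(text: str) -> int:
    """Approximate the token count by ceiling-dividing the character count."""
    return -(-len(text) // CHARS_PER_TOKEN)


def check_fit(documents: list[str], reserved_for_output: int = 8_000) -> FitReport:
    """Report whether all documents fit in one request, leaving room for the reply.

    ASSUMPTION: the reply shares the same window, so we hold back
    `reserved_for_output` tokens (a hypothetical default).
    """
    total = sum(estimate_tokens(doc) for doc in documents)
    budget = CONTEXT_WINDOW_TOKENS - reserved_for_output
    return FitReport(total, total <= budget, budget - total)
```

Treat the result as a rough pre-flight estimate only. If the report says a bundle is close to the limit, count tokens properly before relying on it.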

Sonnet 4.6 vs Opus 4.6: Which should you choose?

Both models have their place. The question is which one fits your specific needs.

When Sonnet 4.6 wins

For most engineering tasks, Sonnet 4.6 is the better choice. In early testing, users preferred it to Opus 4.5 (the earlier Opus release, not Opus 4.6) 59% of the time, citing better instruction following, less overengineering, and faster response times. It's also more cost-effective for high-volume workloads, making it practical for production systems that process thousands of requests daily.

The model particularly excels at:

- Day-to-day coding and debugging

- Code review and bug detection

- Frontend development and UI generation

- Agent workflows requiring sustained coherence

- High-volume API applications

When Opus 4.6 still reigns

Opus 4.6 remains the strongest option for tasks demanding the deepest reasoning. Anthropic recommends it for:

- Complex codebase refactoring across many files

- Coordinating multiple agents in a workflow

- Problems where getting it "just right" is paramount

- Research and analysis requiring maximum depth

The performance gap exists, but it's narrower than the price difference would suggest. Think of Opus as the specialist you bring in for the hardest problems, while Sonnet handles the bulk of your workload.

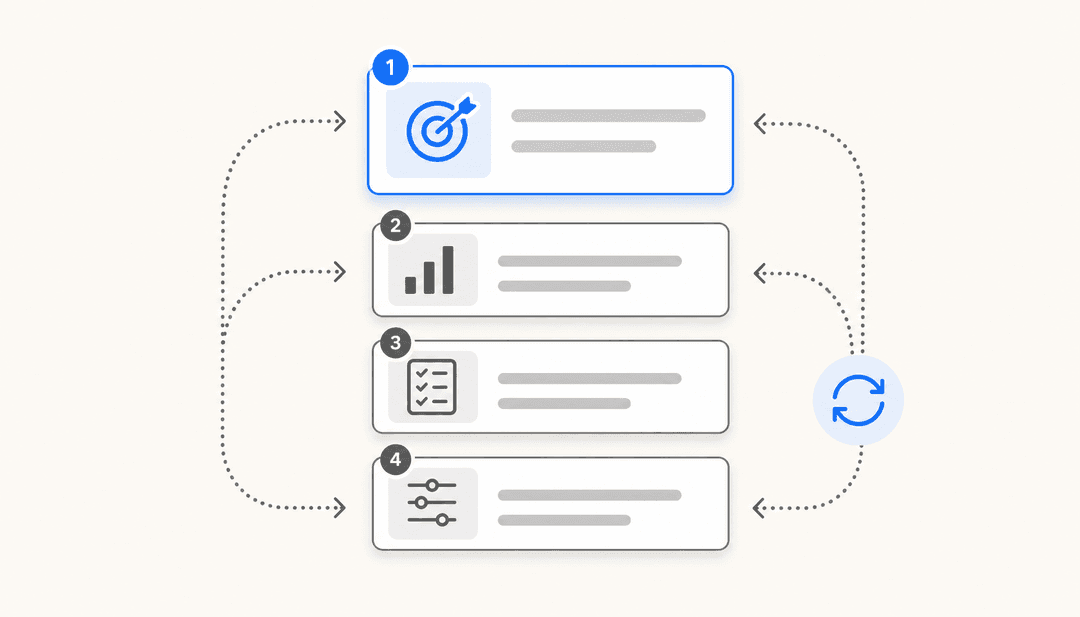

Decision framework

A practical approach: start with Sonnet 4.6 for everything. When you encounter a task where the model struggles, that's your signal to try Opus. Most teams will find Sonnet 4.6 handles 80-90% of their needs, reserving Opus for the edge cases where that extra capability matters.

At scale, this approach saves significant money without sacrificing much quality. The cost difference between Sonnet and Opus adds up quickly when you're processing millions of tokens.
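The "Sonnet first, Opus on struggle" idea can be sketched as a small routing function. This is one possible shape, not a prescribed pattern: `call_model` and `is_good_enough` are hypothetical callables you would supply (your API client and your own quality check), and the Opus model ID below is our assumption, so confirm it against your provider's documentation.

```python
from typing import Callable

SONNET = "claude-sonnet-4-6"  # model ID given later in this article
OPUS = "claude-opus-4-6"      # ASSUMPTION: hypothetical ID, verify before use


def answer_with_escalation(
    prompt: str,
    call_model: Callable[[str, str], str],
    is_good_enough: Callable[[str], bool],
) -> tuple[str, str]:
    """Try Sonnet first; retry on Opus only if the result fails the check.

    `call_model(model_id, prompt)` returns the model's text response.
    `is_good_enough(response)` is your own acceptance test (for example,
    "do the unit tests pass?"). Returns (model_used, response).
    """
    response = call_model(SONNET, prompt)
    if is_good_enough(response):
        return SONNET, response
    # Escalate: pay the Opus price only for requests Sonnet could not handle.
    return OPUS, call_model(OPUS, prompt)
```

The quality of this routing depends entirely on the acceptance check. A cheap, objective signal such as passing tests or valid JSON tends to work better than a subjective one.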

Pricing and availability

Sonnet 4.6 offers compelling value. Here's the complete pricing breakdown:

| Usage Tier | Input Price | Output Price |
|---|---|---|
| Prompts ≤ 200K tokens | $3 / million tokens | $15 / million tokens |
| Prompts > 200K tokens | $6 / million tokens | $22.50 / million tokens |

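For comparison, Opus 4.6 costs $5 per million input tokens and $25 per million output tokens for prompts up to 200K tokens, rising to $10 and $37.50 for longer prompts. Haiku 4.5, the lightweight option, runs $1 per million input tokens and $5 per million output tokens.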
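To make these rates concrete, here is a small cost estimator built from the figures above. It is a sketch under two assumptions of ours: that the Opus figures "$5/$10" and "$25/$37.50" map to the same ≤200K and >200K tiers as the Sonnet table, and that the whole request is billed at the tier selected by prompt size. The dictionary keys are just labels for this example, not API model IDs.

```python
LONG_PROMPT_THRESHOLD = 200_000  # tokens; tier boundary from the pricing table

# USD per million tokens as (input, output) for each tier.
PRICES = {
    "sonnet-4.6": {"standard": (3.00, 15.00), "long": (6.00, 22.50)},
    # ASSUMPTION: Opus figures read as standard/long tiers, like Sonnet's.
    "opus-4.6": {"standard": (5.00, 25.00), "long": (10.00, 37.50)},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request from its token counts."""
    # ASSUMPTION: prompt size alone picks the tier for the whole request.
    tier = "long" if input_tokens > LONG_PROMPT_THRESHOLD else "standard"
    input_price, output_price = PRICES[model][tier]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000
```

For a 100,000-token prompt with a 10,000-token reply, this works out to $0.45 on Sonnet 4.6 versus $0.75 on Opus 4.6.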

You can reduce costs further:

- Prompt caching: Up to 90% savings on repeated context (write: $3.75/MTok, read: $0.30/MTok for ≤200K tokens)

- Batch processing: 50% discount for asynchronous workloads
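Here is a rough sketch of how those two discounts play out for input costs, using the ≤200K rates quoted above. It assumes the shared context is written to the cache once and then read by every later request, which is a simplification of ours: real caches expire, so your actual mix of writes and reads may differ.

```python
BASE_INPUT = 3.00      # USD per million input tokens (prompts of 200K or less)
CACHE_WRITE = 3.75     # USD per million tokens written to the cache
CACHE_READ = 0.30      # USD per million tokens read from the cache
BATCH_DISCOUNT = 0.50  # 50% off for asynchronous batch workloads


def input_cost_without_cache(shared_tokens: int, requests: int) -> float:
    """Cost of resending the same shared context on every request."""
    return shared_tokens * requests * BASE_INPUT / 1_000_000


def input_cost_with_cache(shared_tokens: int, requests: int) -> float:
    """Cost when the first request writes the cache and the rest read it.

    ASSUMPTION: the cache stays warm for every later request.
    """
    if requests <= 0:
        return 0.0
    write = shared_tokens * CACHE_WRITE
    reads = shared_tokens * (requests - 1) * CACHE_READ
    return (write + reads) / 1_000_000


def batch_cost(standard_cost: float) -> float:
    """Apply the batch discount to a cost computed at standard rates."""
    return standard_cost * (1 - BATCH_DISCOUNT)
```

With a 50,000-token shared context reused across 100 requests, the input bill drops from $15.00 to about $1.67 with caching. The savings approach 90% as the number of cache reads grows, because the one-time write costs slightly more than a normal input.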

Consumer access through Claude.ai starts at free, with Pro plans at $20/month ($17/month when billed annually). The 1M token context window is available in beta on the API by setting the anthropic-beta header to context-1m-2025-08-07.

Real-world customer feedback

Enterprise customers have been vocal about their experiences with Sonnet 4.6. Their feedback provides insight into how the model performs outside benchmark environments.

Rakuten AI reported genuine surprise at the iOS code quality: "Claude Sonnet 4.6 produced the best iOS code we've tested for Rakuten AI. Better spec compliance, better architecture, and it reached for modern tooling we didn't ask for, all in one shot."

Box evaluated the model on deep reasoning and complex agentic tasks across real enterprise documents, finding it outperformed Sonnet 4.5 in heavy reasoning Q&A by 15 percentage points.

An insurance technology company reported Sonnet 4.6 hit 94% on their complex computer use benchmark, the highest of any Claude model they tested, with the ability to reason through failures and self-correct.

Multiple developers noted the model's design sensibility. One commented: "Claude Sonnet 4.6 has perfect design taste when building frontend pages and data reports, and it requires far less hand-holding to get there than anything we've tested before."

At eesel AI, we've observed similar patterns when using Claude models to power our autonomous customer service agents. The combination of strong reasoning, large context windows, and reliable instruction following makes Sonnet 4.6 particularly effective for handling complex support tickets that require understanding multiple previous interactions and company policies.

Getting started with Claude Sonnet 4.6

Accessing Sonnet 4.6 is straightforward. If you use Claude.ai, you already have it: the model became the default for Free and Pro users upon release. Simply start a new conversation.

For API access, use the model ID claude-sonnet-4-6. The model is available on the Claude Developer Platform, AWS Bedrock, Google Cloud Vertex AI, and Microsoft Foundry.
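As a starting point, here is a minimal sketch that builds (but does not send) a request using only Python's standard library. The model ID and beta value come from this article. The endpoint URL, header names, and version string reflect our understanding of Anthropic's public Messages API and should be checked against the current documentation. `build_request` is a helper name we made up for this example.

```python
import json
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"  # ASSUMPTION: verify in the docs


def build_request(
    api_key: str,
    prompt: str,
    max_tokens: int = 1024,
    long_context_beta: bool = False,
) -> urllib.request.Request:
    """Build a POST request for a single-turn message to Sonnet 4.6."""
    headers = {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    if long_context_beta:
        # Opt in to the 1M-token context window beta mentioned above.
        headers["anthropic-beta"] = "context-1m-2025-08-07"
    body = {
        "model": "claude-sonnet-4-6",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )
```

To actually send it, pass the result to `urllib.request.urlopen` with a real API key. In production you would more likely use an official SDK or your cloud provider's client, which handle retries and streaming for you.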

If you're migrating from Sonnet 4.5, Anthropic recommends exploring the adaptive thinking settings. Sonnet 4.6 offers strong performance at any thinking effort level, even with extended thinking disabled. Experiment to find the right balance of speed and reliability for your specific use case.

For teams building AI-powered customer experiences, whether that's autonomous support agents, intelligent copilots, or automated triage systems, the combination of Sonnet 4.6's capabilities and cost-effectiveness opens new possibilities. At eesel AI, we help teams deploy AI agents that handle frontline support autonomously, draft replies for human review, and continuously learn from your existing knowledge base. If you're exploring how AI can transform your customer operations, we'd love to show you what's possible.

Article by

Stevia Putri

Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.