The pressure is real and it runs in both directions. On one side, executives are pushing support teams to adopt AI: 91% of customer service leaders report pressure from leadership to implement AI in 2026, according to Gartner's February 2026 survey. On the other side, customers are pushing back: 79% of Americans say they strongly prefer interacting with a human over an AI agent, and 46% say AI-powered customer service rarely or never leads to a successful outcome.

The gap between those two realities is where most support teams are stuck right now. They've been told to automate, they've deployed a tool, and they're watching satisfaction scores wobble while the inbox still fills up. Meanwhile, the teams genuinely getting results with AI are doing something different: they're not treating it as a replacement for human agents. They're treating it as a layer.

This post walks through the actual data on AI versus human support, covering resolution rates, response time, cost per ticket, customer satisfaction, and the specific situations where each approach wins. The goal is to give you what you need to make the right call for your team, not to convince you AI is the answer to everything.

What AI customer support actually does

The phrase "AI customer support" gets stretched to cover everything from a basic FAQ chatbot to a fully autonomous agent that opens tickets, drafts replies, processes refunds, and closes cases without any human involved. Those are very different things, and the data reflects that gap.

At the low end, rule-based chatbots have been around for years. Gartner found that only 14% of customer issues are fully resolved through traditional self-service channels. These systems deflect rather than resolve: they intercept the request, give a canned answer, and either close the ticket or route the customer to a human if the answer misses.

The more capable tier, which most modern platforms fall into, uses large language models to understand intent rather than match keywords. These systems can handle multi-turn conversations, access backend data (order status, subscription details, account history), and complete actions on behalf of the customer. Salesforce's Agentforce achieved an 84% autonomous resolution rate across 380,000+ conversations, with only 2% requiring human escalation. Klarna's AI assistant handled two-thirds of all customer service chats in its first month, cutting average resolution time from 11 minutes to under 2 minutes and driving a $40 million profit improvement in 2024.

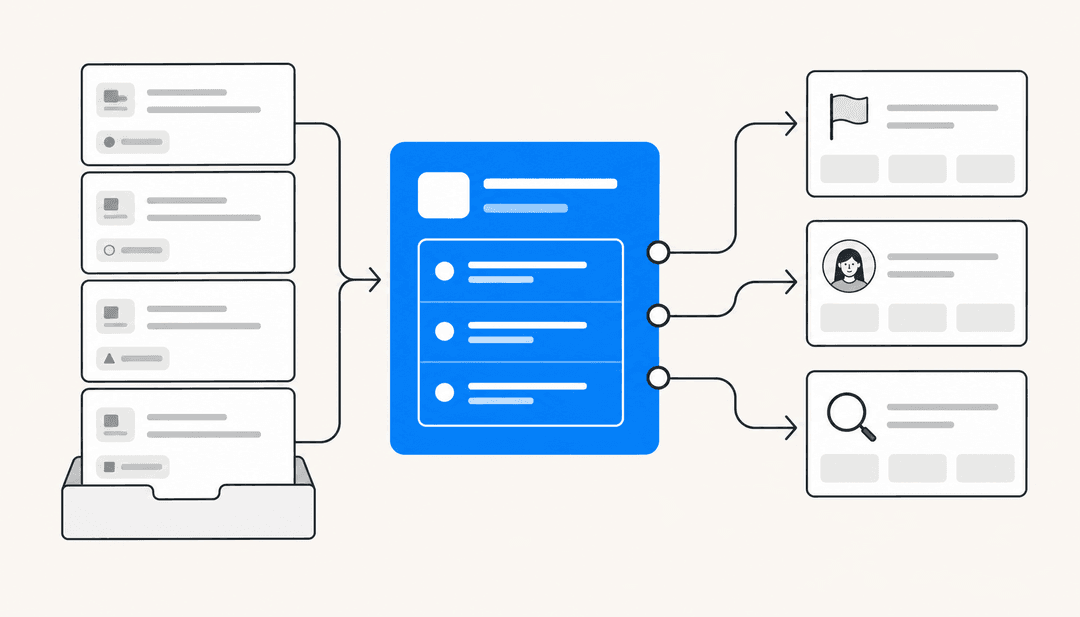

What these deployed systems actually do, day to day:

- Ticket routing and triage: Classify incoming requests by type, priority, and complexity before any human sees them

- Autonomous response for tier-1 queries: Answer password resets, shipping status requests, return policy questions, and similar repeatable questions without agent involvement

- Copilot assistance: Draft suggested responses for agents to review, edit, and send, reducing per-ticket handle time

- Escalation with context: When the AI hits a case it can't handle confidently, it hands off to a human agent with a full summary already written

- Knowledge gap detection: Surface patterns in tickets the AI struggled with, and flag gaps in the help center

Platforms like eesel AI take a graduated approach to this: teams start in copilot mode, where the AI drafts every response for human review, then move to agent mode once accuracy is validated. That progression matters because it lets teams build trust in the AI's output before giving it autonomy. Gridwise resolved 73% of tier-1 support requests in their first month using this approach.

What human agents do that AI can't

AI is good at consistency, speed, and scale. It is poor at the things that don't have a fixed answer.

Human agents read emotional subtext. They hear that a customer is about to cancel not because the words say so, but because the tone has shifted. They notice when a customer is confused rather than frustrated and adjust their explanation accordingly. They make judgment calls that no amount of training data can fully capture, like when to escalate something that technically fits the AI's criteria but doesn't feel right.

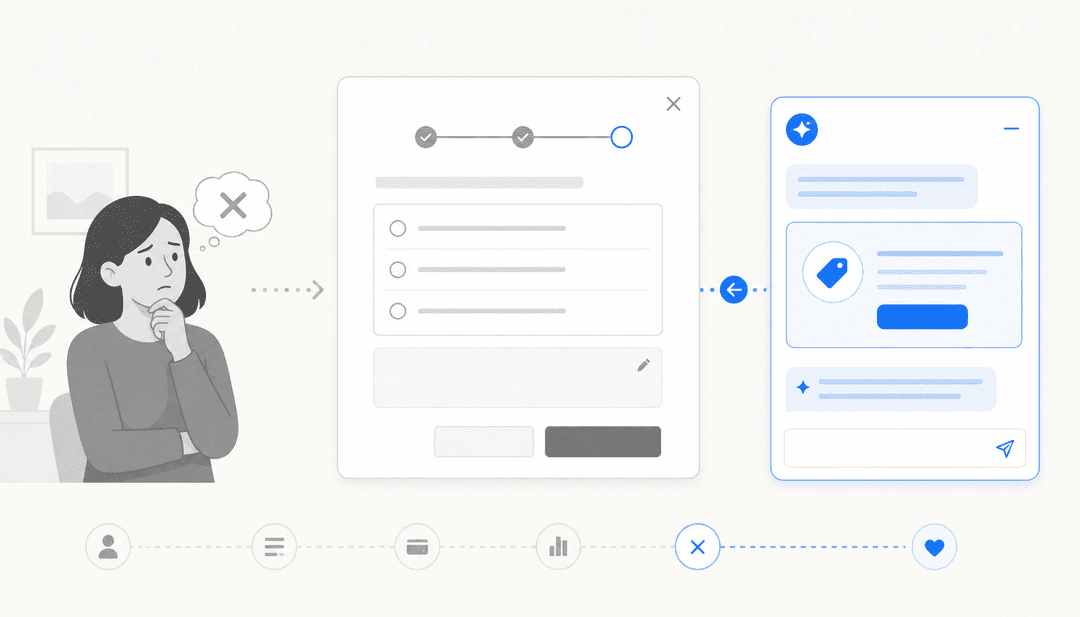

Natalie Ruiz, CEO of AnswerConnect, commissioned a OnePoll survey of 6,000 adults across the US, UK, and Canada and found that 29% ranked talking to an AI as their most frustrating service experience, just behind being left on hold. What drove that frustration? Customers described AI systems that couldn't understand their actual problem, provided irrelevant or inaccurate responses, and couldn't adapt when the scripted path didn't fit their situation.

From Ruiz's piece in Forbes:

"A customer with a billing question gets incorrect advice. A patient trying to book an appointment gets blocked. A person in a plumbing emergency cannot get through to a real person because the automated system fails to escalate the call. Each failure feels personal."

A Hiver survey of 500+ customer service professionals found that 59% believe a human-led support strategy works better, specifically because complex and emotionally sensitive issues require judgment and empathy that AI doesn't yet have. The same survey found that 50% believe the future is AI-human collaboration, not replacement.

The situations where human agents consistently outperform AI:

- Complaints from upset or distressed customers where tone and empathy matter more than speed

- Novel or complex issues that don't fit any existing category or knowledge base entry

- Negotiations, sensitive account changes, and retention conversations

- Anything requiring regulatory judgment (medical, legal, financial decisions)

- De-escalation after a previous interaction went badly

Nearly 9 in 10 respondents in the AnswerConnect survey said they preferred speaking with a real person when contacting a healthcare provider. The pattern holds across any domain where accuracy and trust are both on the line at the same time.

Speed and availability

This is where AI has an unambiguous structural advantage.

AI operates 24 hours a day, 7 days a week, without shift coverage gaps, lunch breaks, or queue buildup when three agents call in sick on a Monday. For teams with customers in multiple time zones, that coverage is genuinely hard to replicate with headcount alone.

On response time, AI-native platforms achieve average handle times under 3 minutes for resolved interactions, compared to an industry average of 4-7 minutes for agent-assisted contacts. For first response specifically, AI can reply instantly; the median first response time for a human agent at a mid-market company is typically measured in hours.

That speed gap matters to customers. 51% of consumers say they prefer bots over humans when they want immediate service. For common queries, availability and response time matter more to customers than the agent's empathy. Someone checking their order status at 11pm doesn't want to wait until business hours.

The practical implication for response time: AI eliminates first-response latency entirely for any query it can handle. Human agents still matter for resolution quality, but the first response to every ticket can be instant, regardless of when it arrives. AI-powered routing has also been shown to reduce customer "hunting time" in IVR systems by 54%, cutting out the menu-navigation frustration that ranks among customers' top complaints.

Quality and customer satisfaction

The CSAT picture is more complicated than either side usually admits.

On the pro-AI side: 92% of businesses report improved customer satisfaction after implementing AI, according to a cross-industry analysis. When AI resolves a query quickly and accurately, customers are satisfied. Many don't care whether it was a human or a machine if the outcome was right.

On the other side: 46% of consumers say AI-powered service rarely or never leads to a successful outcome (Pega/YouGov, February 2026), and 77% say they always or often get better outcomes when dealing only with a human. Trust in AI fell from 62% in 2023 to 59% in 2025, and the share calling AI "very untrustworthy" more than doubled, from 5% to 12% (Avaya).

Both statistics are true at once; they're just measuring different things. The 92% CSAT improvement stat captures what happens when AI works correctly on well-scoped queries. The 46% dissatisfaction rate captures what happens when AI is deployed broadly without tight scoping, so it fields queries it can't handle and fails publicly.

A 2025 Pega/YouGov study found that only 2% of consumers want to interact exclusively with AI chatbots. That's not a finding that says AI should be avoided. It's a finding that says humans need to remain accessible, especially when the AI hits a wall.

One line from the broader AnswerConnect/OnePoll research sums up the sentiment:

"AI-driven service promises convenience. For many people, it only delivers friction."

The teams that protect CSAT scores do two things: they scope their AI tightly to the query types it handles well, and they build a clear, low-friction path to a human agent for everything else. Only 15% of customers experience a seamless handoff from AI to human agents, according to Twilio. That number is the real satisfaction lever to fix.

Cost per ticket

This is the most commonly cited justification for AI adoption, and the numbers are real, but they need context.

Human agent cost per ticket:

The full-cost accounting for a human agent includes salary and benefits (60-80% of total support cost), tooling and software (10-25%), and overhead including facilities and training (10-15%). LiveChatAI's 2025 analysis across 50 industries puts fully-loaded per-ticket costs between $20 and $30 for most companies. The range is wide because it depends heavily on ticket complexity, average handle time, and agent location. A US-based agent handling complex enterprise queries may cost $40+ per resolved ticket; an offshore tier-1 team handling simple queries can be as low as $8-12.

AI cost per ticket:

Gartner benchmarks median self-service cost at $1.84 per contact, versus $13.50 for agent-assisted. AI-native platforms typically price per resolution in the $1-3 range. At scale, the math is compelling.

| | AI (autonomous) | Human agent (US) | Human agent (offshore) |
|---|---|---|---|
| Cost per resolved ticket | $1-3 | $20-30 | $8-15 |
| Availability | 24/7 | Business hours | Business hours or shift |
| Avg handle time | Under 3 min | 4-7 min | 4-7 min |
| Suitable query types | Tier-1, FAQ, status | All | All |
| Scalability | Near-unlimited | Headcount-bound | Headcount-bound |

When does the ROI actually kick in?

The break-even calculation depends on volume. For a team handling 1,000 tickets per month with 50% tier-1 queries (password resets, status checks, FAQs), automating those 500 tickets at $2/ticket saves roughly $5,750/month against Gartner's $13.50 agent-assisted cost, or roughly $3,000-6,500/month against the $8-15 offshore range. At mid-market volumes, that covers most AI platform costs.
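The arithmetic behind that scenario can be sketched in a few lines of Python. This is a minimal illustration, not a pricing model: the function names are made up for this example, and the flat $1,000/month platform fee at the end is a hypothetical figure, not one from any vendor or from the data above.

```python
def monthly_savings(tickets_per_month: int, tier1_share: float,
                    human_cost: float, ai_cost: float) -> float:
    """Monthly savings from resolving the tier-1 share with AI instead of agents."""
    automated_tickets = tickets_per_month * tier1_share
    return automated_tickets * (human_cost - ai_cost)


def break_even_tickets(platform_fee: float, human_cost: float, ai_cost: float) -> float:
    """Automated tickets per month needed to cover a flat monthly platform fee."""
    if human_cost <= ai_cost:
        raise ValueError("AI must be cheaper per ticket for a break-even point to exist")
    return platform_fee / (human_cost - ai_cost)


if __name__ == "__main__":
    # Scenario from the text: 1,000 tickets/month, 50% tier-1, AI at $2/ticket.
    scenarios = [
        ("Gartner agent-assisted benchmark", 13.50),
        ("offshore, low end", 8.00),
        ("offshore, high end", 15.00),
    ]
    for label, human_cost in scenarios:
        saved = monthly_savings(1000, 0.5, human_cost, 2.00)
        print(f"{label}: ${saved:,.0f}/month")

    # Hypothetical flat platform fee (an assumption for illustration only).
    needed = break_even_tickets(1000.0, 13.50, 2.00)
    print(f"Tickets needed to cover a $1,000 fee: {needed:.0f}")
```

With the $13.50 benchmark, each automated ticket saves $11.50, so 500 automated tickets come to $5,750 a month. Swapping in your own per-ticket cost is the quickest way to see whether your volume clears the bar.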

66% of businesses required more than six months to see measurable ROI from AI implementations, which means the ramp period matters. Scoping the AI too broadly early creates high failure rates that inflate human agent costs (re-handling failed AI tickets), which pushes ROI further out. Tight scoping of tier-1 queries from day one gets to ROI faster.
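To see why broad scoping pushes ROI out, it helps to look at the blended cost of every ticket routed to the AI, including the ones it fails on. The sketch below assumes per-resolution pricing (failed AI attempts cost nothing on the AI side) and adds a hypothetical `rehandle_multiplier` for the extra agent time a failed AI interaction may add. Both the function and the multiplier are illustrative assumptions.

```python
def blended_cost_per_ticket(ai_cost: float, human_cost: float,
                            ai_resolution_rate: float,
                            rehandle_multiplier: float = 1.0) -> float:
    """Average cost of a ticket routed to the AI, counting human re-handling.

    ai_resolution_rate: share of routed tickets the AI actually resolves (0-1).
    rehandle_multiplier: hypothetical factor (>= 1) for extra agent effort
    on tickets the AI failed first.
    """
    if not 0.0 <= ai_resolution_rate <= 1.0:
        raise ValueError("ai_resolution_rate must be between 0 and 1")
    resolved_part = ai_resolution_rate * ai_cost
    failed_part = (1.0 - ai_resolution_rate) * human_cost * rehandle_multiplier
    return resolved_part + failed_part


if __name__ == "__main__":
    # Resolution rates borrowed from the tight-vs-broad scoping figures
    # quoted elsewhere in this article; costs are the $2 and $13.50 used above.
    for label, rate in [("tightly scoped", 0.89), ("broadly scoped", 0.41)]:
        cost = blended_cost_per_ticket(2.00, 13.50, rate)
        print(f"{label}: ${cost:.2f} per routed ticket")
```

Under these assumptions the tightly scoped deployment averages a little over $3 per routed ticket, while the broadly scoped one approaches $9, most of it human re-handling.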

One important caveat from Gartner: by 2030, the cost per resolution for generative AI is predicted to exceed $3, which could exceed offshore agent costs as infrastructure expenses grow. The cost advantage is real today but not necessarily permanent.

Types of queries: AI wins vs human wins

The most useful lens for deployment decisions isn't AI vs human as a binary. It's query type. Most support teams have a distribution of query complexity that fits naturally into a tiered model.

| Query type | Best handled by | Why |
|---|---|---|
| Order status, shipping tracking | AI | Structured lookup, no judgment needed |
| Password reset, account access | AI | Repeatable, no emotional stake |
| Return and refund requests (standard) | AI | Policy lookup + action, well-scoped |
| FAQ and help center questions | AI | Knowledge retrieval, consistent answer |
| Billing disputes | Hybrid (AI gathers info, human decides) | Requires judgment on edge cases |
| Complex troubleshooting | Human | Novel combinations, iterative diagnosis |
| Upset or emotional customer | Human | Tone, empathy, and de-escalation |
| Retention and cancellation | Human | Relationship and negotiation |
| Medical, legal, financial queries | Human | High-stakes, accuracy-critical |
| Novel or first-ever issue type | Human | No precedent in training data |
| VIP or enterprise account queries | Human (with AI assist) | Relationship matters, customized response |

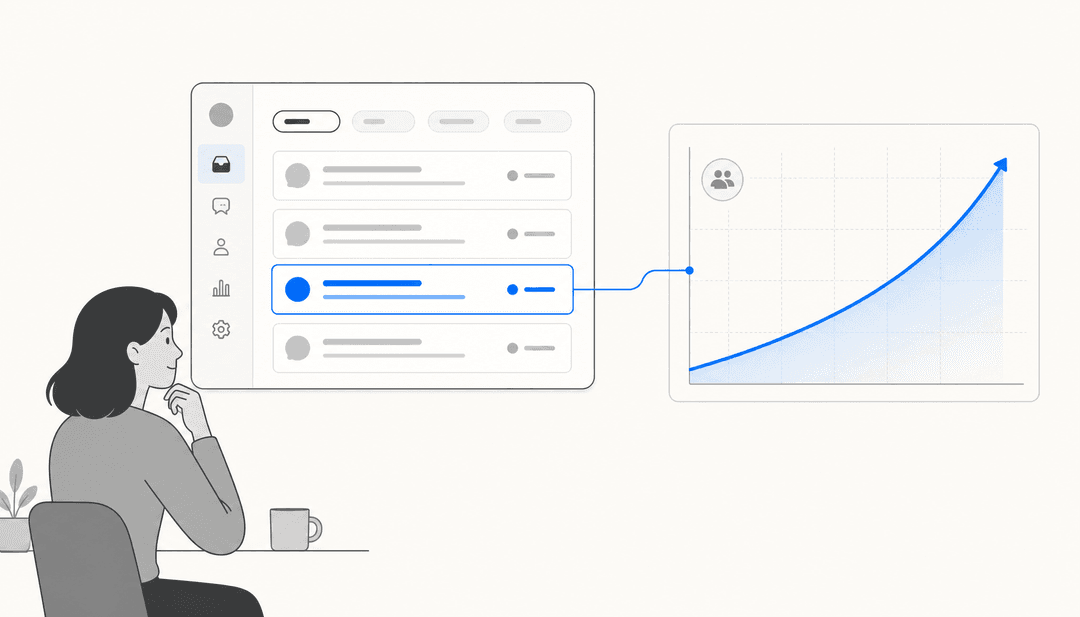

The Comm100 data shows that small teams (1-5 agents) that scope their AI tightly achieve up to 89% resolution rates on the queries they route to AI. Large teams that funnel everything through a bot see only 41% resolution. The volume-versus-precision tradeoff is the clearest pattern in the resolution rate data.

McKinsey's research shows AI deployments reduce total ticket volume by 40-50% for teams that scope well, primarily through deflection of tier-1 queries. That volume reduction compounds the per-ticket cost savings.

The hybrid model

The teams getting real results with AI aren't choosing between AI and humans. They're layering them.

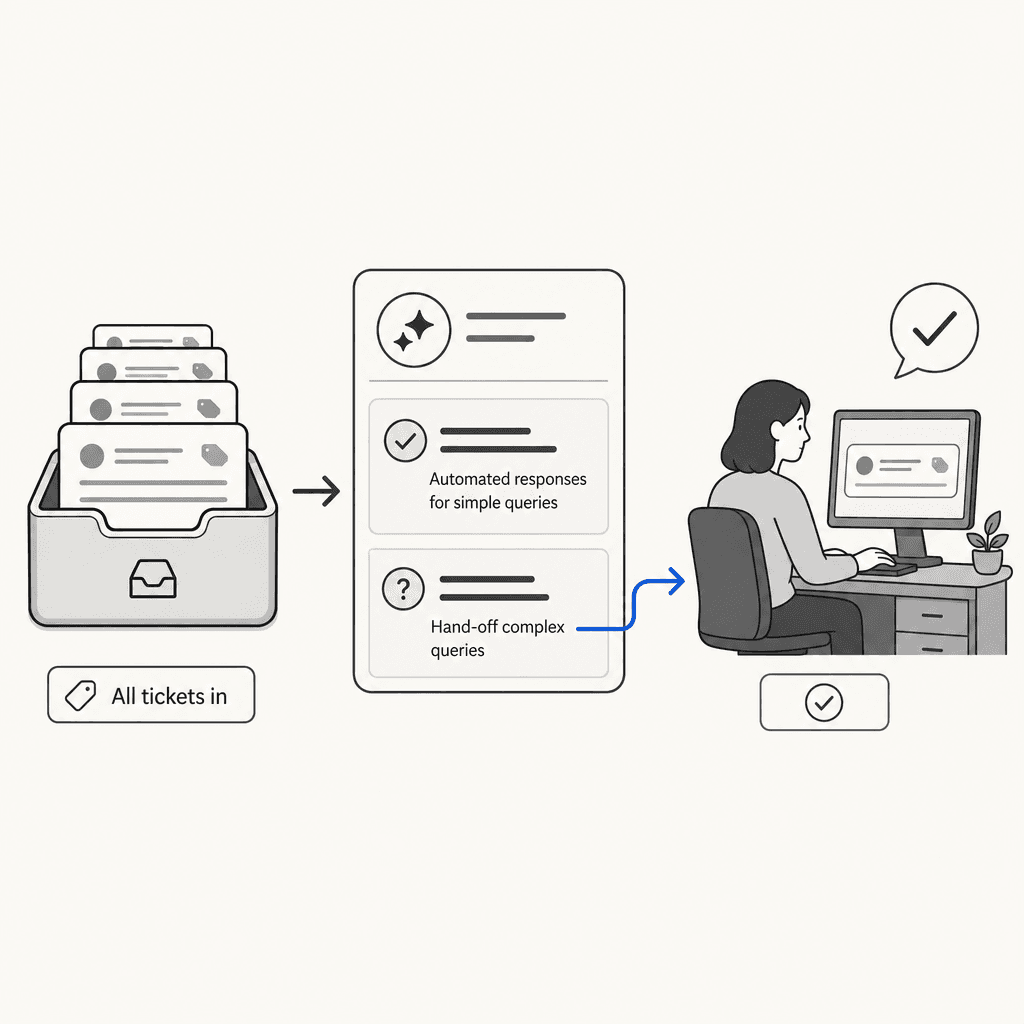

The model looks like this:

- Every ticket enters through AI triage. The AI classifies query type, checks confidence, and either resolves it autonomously (tier-1, high confidence) or routes it to a human with a summary and suggested response already prepared.

- For tier-1 queries the AI can handle, it responds directly and closes the ticket. No human involved.

- For anything the AI flags as low-confidence or outside its scope, it escalates to a human agent. The handoff includes the customer's full message history, the AI's attempted understanding of the issue, and a draft response if it generated one.

- Human agents review AI-handled tickets on a sampling basis, catching any errors before they compound into patterns.

- Mishandled tickets feed back into the AI's training loop, improving future routing accuracy.
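The triage and sampling steps above can be sketched roughly as follows. This is an illustration of the pattern, not any platform's actual implementation: the category names, the 0.85 confidence threshold, and the 10% review sample are all hypothetical values you would tune against your own tickets.

```python
import zlib
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AI_RESOLVE = "ai_resolve"   # AI answers and closes the ticket
    HUMAN = "human"             # escalate with summary and draft


# Hypothetical tier-1 categories the AI is scoped to handle.
TIER1_TYPES = {"order_status", "password_reset", "standard_refund", "faq"}


@dataclass
class Triage:
    query_type: str     # label from the classifier (assumed to exist upstream)
    confidence: float   # classifier confidence, 0.0 to 1.0


def route(triage: Triage, threshold: float = 0.85) -> Route:
    """Resolve autonomously only when the query is in scope AND confidence is high."""
    if triage.query_type in TIER1_TYPES and triage.confidence >= threshold:
        return Route.AI_RESOLVE
    return Route.HUMAN


def sampled_for_review(ticket_id: str, rate: float = 0.10) -> bool:
    """Deterministically pick a share of AI-handled tickets for human review."""
    bucket = zlib.crc32(ticket_id.encode("utf-8")) % 10_000
    return bucket < int(rate * 10_000)


if __name__ == "__main__":
    print(route(Triage("order_status", 0.95)))     # in scope, confident
    print(route(Triage("order_status", 0.60)))     # in scope, not confident
    print(route(Triage("billing_dispute", 0.99)))  # out of scope, always human
```

Note that scope and confidence are checked together: a very confident answer to an out-of-scope query still goes to a human, which is the tight-scoping behavior the resolution rate data rewards.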

76% of contact center leaders have formally adopted human-in-the-loop models combining AI routing with human handling of complex interactions, according to Natterbox's data cited by CMSWire. That figure is up sharply from previous years, reflecting a shift from "AI vs human" to "AI routes to human."

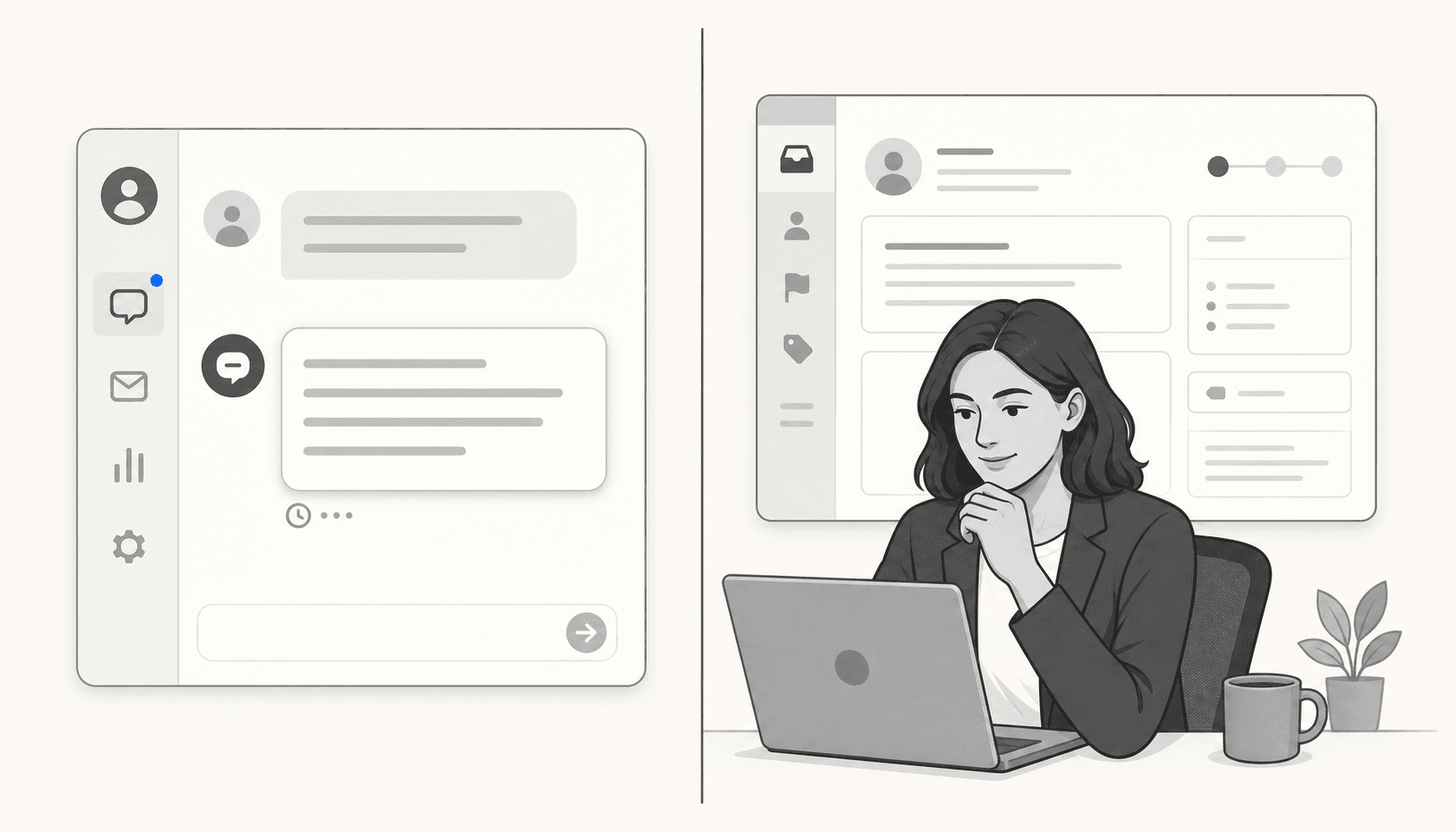

Platforms like eesel AI support this model natively. The copilot-to-agent progression lets teams start with AI drafting and human review, then shift to autonomous mode for the query categories where accuracy is proven. Escalation triggers are configured in plain language: "escalate any billing complaint to the senior team" rather than through a workflow builder.

eesel AI's confidence-based routing is particularly relevant here: it sends responses autonomously when confidence is high and queues drafts for review when confidence drops below threshold. That means the human agent review queue is weighted toward the cases where AI judgment is genuinely uncertain, not just complex.

The handoff quality is the part most implementations get wrong. Only 15% of customers currently experience a seamless handoff from AI to human. The other 85% have to re-explain their problem. Fixing that single friction point, by having the AI pass full context to the human, is often worth more to CSAT than any other change in the workflow.
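One way to make "full context" concrete is to treat the handoff as a structured record and refuse to escalate without the pieces that spare the customer from repeating themselves. The sketch below is a hypothetical shape for that record; the field names and the `agent_note` format are assumptions, not a real help desk schema.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Handoff:
    ticket_id: str
    messages: List[str]                 # customer's full message history
    ai_summary: str                     # AI's understanding of the issue
    draft_reply: Optional[str] = None   # included only if the AI produced one

    def missing_context(self) -> List[str]:
        """Names of required fields that are empty."""
        missing = []
        if not self.messages:
            missing.append("messages")
        if not self.ai_summary.strip():
            missing.append("ai_summary")
        return missing


def agent_note(handoff: Handoff) -> str:
    """Render the note a human agent sees when picking up the ticket."""
    gaps = handoff.missing_context()
    if gaps:
        raise ValueError(f"handoff incomplete, missing: {', '.join(gaps)}")
    lines = [f"Ticket {handoff.ticket_id}",
             f"Summary: {handoff.ai_summary}",
             "History:"]
    lines += [f"  {i}. {msg}" for i, msg in enumerate(handoff.messages, start=1)]
    if handoff.draft_reply:
        lines.append(f"Draft reply: {handoff.draft_reply}")
    return "\n".join(lines)


if __name__ == "__main__":
    note = agent_note(Handoff(
        ticket_id="T-1001",
        messages=["I was charged twice this month.", "Order number is 5521."],
        ai_summary="Customer reports a duplicate charge on order 5521.",
    ))
    print(note)
```

Failing loudly on an incomplete handoff is a deliberate choice here: a missing summary is caught inside the workflow rather than discovered by the customer.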

How to decide the right mix for your team

The decision framework here is more useful as a set of if/then conditions than a generic checklist.

If your ticket volume is below 500/month: The cost savings from AI automation are modest, and the setup overhead may not be worth it unless your ticket mix is heavily tier-1. Consider starting with AI in copilot mode to reduce handle time rather than attempting autonomous resolution. The value is agent efficiency, not deflection.

If your ticket volume is above 2,000/month: Tier-1 AI automation almost certainly has positive ROI. Even a 30% deflection rate frees up significant agent capacity at scale. Start by analyzing your ticket categories for the past 90 days and identifying which categories are repeatable, well-scoped, and data-rich enough for the AI to learn from.

If your CSAT is already high (above 90%): Be careful. You have something working. Introduce AI gradually, in copilot mode first, and measure whether CSAT holds before switching to autonomous mode. Don't optimize for cost savings at the expense of a satisfaction score that's taken years to build.

If your CSAT is struggling (below 75%): Check whether AI is already in the workflow before adding more of it. If basic automation is already frustrating customers, layering more AI won't fix it. Fix the routing and escalation first.

If more than 40% of your queries are complex or emotional in nature: AI autonomous resolution is probably not the right first bet. Agent assist (AI drafts, humans send) will give you efficiency gains without the risk of automated responses landing badly.

If your team is spread across time zones: 24/7 AI coverage for tier-1 queries is a direct substitute for shift coverage overhead. This is one of the clearest ROI cases regardless of volume.
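The conditions above can be written down as a small rule set, which makes it easier to run against your own numbers. The thresholds come straight from this section; the `TeamProfile` structure and the wording of each recommendation are illustrative, and volumes between 500 and 2,000 tickets get no volume-specific advice because the section gives none.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TeamProfile:
    tickets_per_month: int
    csat_percent: float        # e.g. 92.0
    complex_share: float       # share of complex or emotional queries, 0-1
    multi_timezone: bool


def recommend(profile: TeamProfile) -> List[str]:
    """Apply the if/then conditions from this section to one team profile."""
    recs = []
    if profile.tickets_per_month < 500:
        recs.append("Low volume: start in copilot mode for agent efficiency")
    elif profile.tickets_per_month > 2000:
        recs.append("High volume: automate tier-1 after a 90-day category analysis")
    if profile.csat_percent > 90:
        recs.append("High CSAT: introduce AI gradually and confirm CSAT holds")
    elif profile.csat_percent < 75:
        recs.append("Low CSAT: fix routing and escalation before adding AI")
    if profile.complex_share > 0.40:
        recs.append("Complex mix: prefer agent assist over autonomous resolution")
    if profile.multi_timezone:
        recs.append("Multiple time zones: use 24/7 AI coverage for tier-1")
    return recs


if __name__ == "__main__":
    team = TeamProfile(tickets_per_month=3000, csat_percent=93.0,
                       complex_share=0.25, multi_timezone=True)
    for line in recommend(team):
        print("-", line)
```

The rules are independent rather than ranked, so a team can match several at once; where they pull in different directions (high volume but high CSAT, say), the more cautious recommendation is the safer starting point.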

On team size: Nearly 80% of organizations plan to transition at least some agents into more complex or emotionally sensitive roles as AI takes on tier-1 volume, according to Gartner. Only 20% of surveyed leaders have actually reduced headcount. The more realistic outcome is that AI handles volume growth without requiring proportional headcount growth, not that it replaces existing agents.

Before any AI deployment, run a simulation if the platform supports it. eesel AI's ticket simulation feature lets teams run the AI against historical tickets before going live, producing a per-category performance breakdown, predicted deflection rate, and knowledge gap list. That data is worth more than any vendor's headline resolution rate claim, because it's based on your tickets, not an industry average.

There are also several useful guides for thinking through the deployment specifics: eesel AI's ticket deflection guide covers the 20-60% volume reduction range with implementation tactics, and their deflection rate explainer breaks down what the metric actually measures and how to improve it without gaming the number.

Conclusion

The honest answer to "AI or human support?" is: neither exclusively, and it depends on which query you're looking at.

For high-volume, well-defined tier-1 queries, AI autonomous resolution is defensible and often cost-compelling. AI-native platforms achieve 55-70% first contact resolution at $1-3 per ticket, against a fully-loaded human cost of $20-30. The math holds once you have enough volume.

For complex, emotional, or high-stakes interactions, humans are still the right call, and the data is unambiguous on this. 73% of customers would take their business elsewhere if a company only offered AI with no human option. That's not a preference. That's a retention risk.

The deployments that work are neither "AI first" nor "AI only." They're structured handoffs. AI handles the repeatable volume and passes everything else to a human with full context. Done well, that model reduces cost per ticket, improves first response time, and keeps CSAT intact because human agents are freed up to spend their time on the interactions that actually need them.

The teams stuck in the gap are usually the ones that deployed AI broadly, saw resolution rates around the 40% industry average, and are now managing both the AI's failures and the customer frustration that follows. The fix is almost never "more AI." It's tighter scoping, better escalation routing, and more investment in the handoff quality that currently leaves 85% of customers having to repeat themselves.

If your team handles more than 1,000 tickets per month with at least 40% tier-1 volume: AI automation with a human escalation path will almost certainly deliver positive ROI. Start in copilot mode, validate accuracy, then move to autonomous resolution in stages.

If your ticket mix is primarily complex or emotional: AI as an agent assist tool (drafts and summaries for humans to review) will give you efficiency without the customer experience risk of autonomous failure.

If you're unsure where you fall: run a 90-day ticket analysis categorized by query type and complexity before committing to a platform. The eesel AI blog's overview of companies using AI for customer service has nine real-world deployment playbooks worth reading before making that call.

Article by

eesel writer team

The eesel writer team creates content to help support teams get the most out of AI.