How to use Zendesk AI agent conversation transcripts: A practical guide

Stevia Putri

Last edited February 26, 2026

When you deploy an AI agent to handle customer conversations, you want to know how it's performing. Are customers getting their questions answered? Where does the AI struggle? What gaps exist in your knowledge base?

This is where Zendesk AI agent conversation transcripts come in. Since late 2025, Zendesk has made these conversations visible as tickets in your Agent Workspace, so you can read exactly what your AI agent said to customers and how each conversation ended.

In this guide, you'll learn a practical workflow for reviewing these transcripts, identifying improvement opportunities, and turning insights into action. Whether you're just getting started with AI agents or looking to optimize an existing deployment, this systematic approach will help you get more value from your conversation data.

What you'll need

Before diving into transcript review, make sure you have:

- A Zendesk account with an AI agent enabled (Suite Team plan or higher)

- The AI agent tickets feature turned on (mandatory for all accounts as of May 4, 2026)

- Admin Center access to view conversation insights

- Optional: An eesel AI account if you want to run simulations or analyze transcripts at scale

Step 1: Access your Zendesk AI agent conversation transcripts

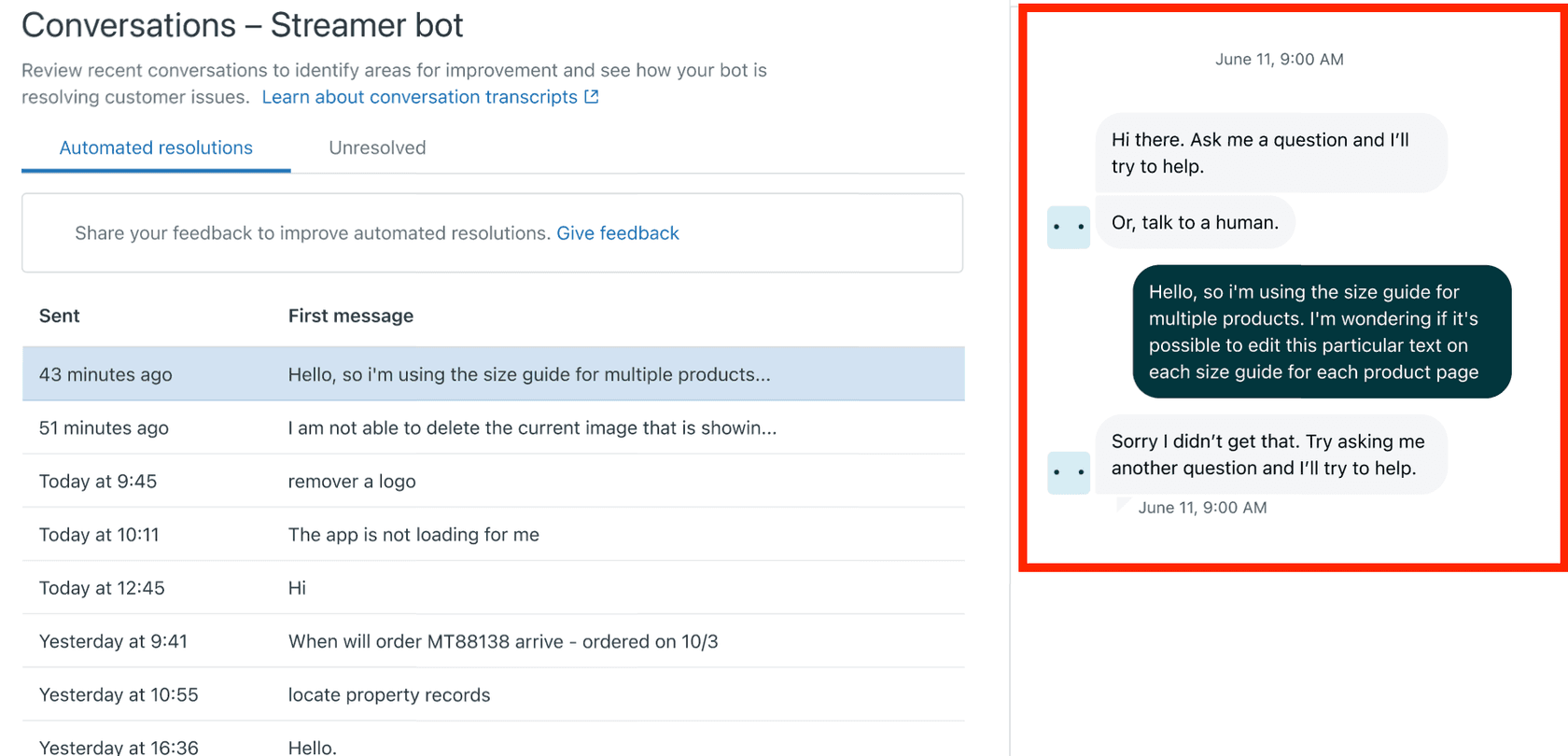

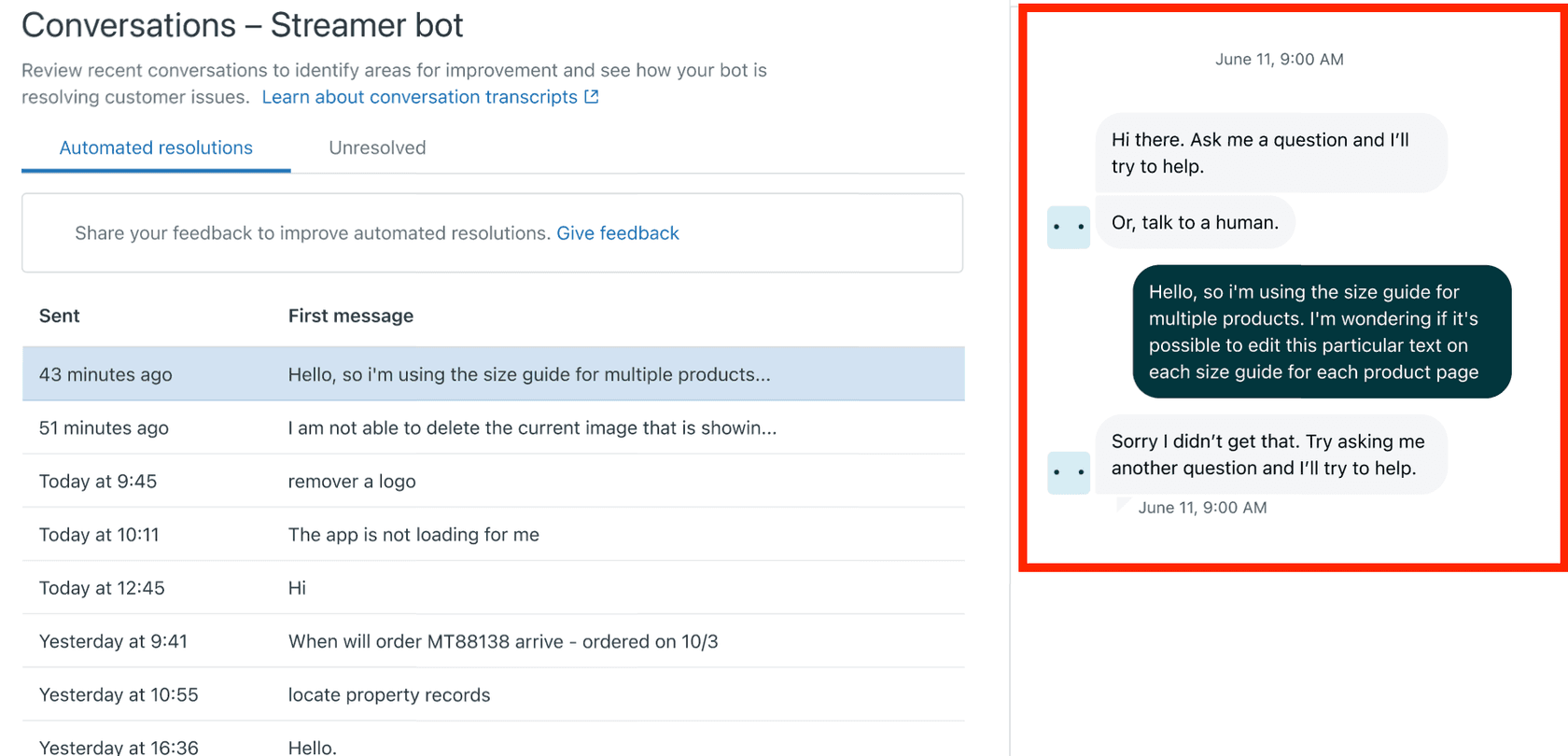

The first step is knowing where to find your conversation data. Zendesk organizes AI agent transcripts in the Admin Center under the AI agents section.

Here's how to access them:

- Navigate to Admin Center > AI > AI agents

- Select the AI agent you want to review

- Click the Insights tab to open the dashboard

- At the bottom of the dashboard, click either View unresolved conversations or View automated resolutions

You'll see two categories of conversations:

- Automated resolutions: Conversations the AI agent resolved without human intervention. These only appear after 72 hours of inactivity.

- Unresolved: Conversations that weren't resolved by the AI agent, including those that were transferred to a live agent.

Keep in mind a few limitations: transcripts are only available for the last 30 days, and each transcript displays the first 100 messages exchanged. If a conversation went beyond 100 messages, you'll only see the beginning.
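If you keep your own log of conversations to review, the timing rules above can be written as a small check. This is a minimal sketch that works only on timestamps you record yourself. The function names are hypothetical and nothing here calls a Zendesk API. It also assumes the 30-day window is counted from when the conversation started, which the limits above don't specify.

```python
from datetime import datetime, timedelta, timezone

# Limits described in this guide; adjust them if Zendesk changes its rules.
RESOLUTION_WAIT = timedelta(hours=72)   # inactivity before an automated resolution appears
TRANSCRIPT_WINDOW = timedelta(days=30)  # how long transcripts stay available


def can_appear_as_resolved(last_activity: datetime, now: datetime) -> bool:
    """True once 72 hours of inactivity have passed since the last message."""
    return now - last_activity >= RESOLUTION_WAIT


def transcript_still_available(started_at: datetime, now: datetime) -> bool:
    """True while the conversation is inside the 30-day window (assumed to start at started_at)."""
    return now - started_at <= TRANSCRIPT_WINDOW


# Sample timestamps, made up for illustration.
now = datetime(2026, 3, 10, 12, 0, tzinfo=timezone.utc)
last_message = datetime(2026, 3, 8, 12, 0, tzinfo=timezone.utc)  # 48 hours earlier

print(can_appear_as_resolved(last_message, now))      # False: still inside the 72-hour wait
print(transcript_still_available(last_message, now))  # True: well within 30 days
```

A check like this is mostly useful as a reminder: a conversation from yesterday can't be in the automated resolutions list yet, and anything older than a month needs to have been logged before it disappeared.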

Step 2: Review transcripts for quality assurance

Once you can access your transcripts, the real work begins. Quality assurance isn't about reading every single conversation (that would be overwhelming). Instead, focus on identifying patterns and outliers.

When reviewing automated resolution transcripts, ask yourself:

- Did the AI agent actually solve the customer's problem, or did the customer just give up?

- Were the responses accurate and grounded in your knowledge base?

- Did the conversation flow naturally, or did the AI seem confused?

Look for the "Customer sent details" placeholder in resolved transcripts. When customers provide sensitive information like order numbers or account details, Zendesk masks these in the transcript view for privacy. The placeholder indicates information was shared, but the specific details are hidden.

Zendesk also lets you submit feedback on automated resolutions directly in the transcript view. You can rate a conversation as fully resolved, partially resolved, or not resolved, and give reasons for your rating. This feedback helps Zendesk improve its classification algorithms, though it doesn't directly affect your account's automated resolution metrics.

Step 3: Identify knowledge gaps from unresolved conversations

Unresolved conversations are gold mines for improvement opportunities. These are cases where the AI agent couldn't handle the request, either because it didn't have the knowledge or because the situation required human judgment.

As you review unresolved transcripts, categorize them into common themes:

- Missing help center articles: The customer asked something that isn't documented

- Unclear responses: The AI gave an answer, but the customer didn't understand it

- Edge cases: The request was valid but unusual, falling outside normal patterns

- System limitations: The AI couldn't perform an action the customer needed

Create a simple spreadsheet to track these patterns. Note the conversation ID, the category, and a brief description of what went wrong. Over time, you'll start to see which issues come up most frequently.
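If your tracking sheet lives in a CSV file, a few lines of Python can tally it for you. The sketch below assumes a hypothetical three-column layout (conversation_id, category, description) that matches the fields suggested above. The rows are made-up sample data, and the category labels are just one way to name the four themes.

```python
import csv
import io
from collections import Counter

# Hypothetical review log: one row per unresolved conversation you reviewed.
# In practice you would read this from your exported spreadsheet file.
REVIEW_LOG = """conversation_id,category,description
c-101,missing_article,No article on changing billing date
c-102,unclear_response,Refund steps confused the customer
c-103,missing_article,No article on changing billing date
c-104,system_limitation,AI could not cancel the order
c-105,missing_article,No article on gift card balance
"""


def count_by_category(log_text: str) -> Counter:
    """Count reviewed conversations per category."""
    rows = csv.DictReader(io.StringIO(log_text))
    return Counter(row["category"] for row in rows)


counts = count_by_category(REVIEW_LOG)
for category, total in counts.most_common():
    print(f"{category}: {total}")
```

In this sample, missing articles account for three of the five reviewed conversations, which is the kind of signal that tells you where to focus first.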

This is where a systematic review process pays off. Instead of reviewing transcripts randomly, set a schedule. Weekly reviews of 10-20 conversations will give you better insights than sporadic deep dives. Focus on one category at a time: one week look for knowledge gaps, the next week examine response quality.

Step 4: Turn insights into action

Identifying problems is only half the battle. The real value comes from fixing them.

For each knowledge gap you identified, prioritize based on frequency and impact. A missing article that would help 50 customers per month is more urgent than one that would help 5. Create a content calendar for your help center updates, tackling high-impact gaps first.
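One simple way to rank gaps is to multiply how often each one appears by how badly it affects the customer. The sketch below is an illustration under assumptions, not a standard formula: the 1-to-3 impact scale, the KnowledgeGap class, and the sample topics are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class KnowledgeGap:
    topic: str
    customers_per_month: int  # how often the gap shows up in transcripts
    impact: int               # hypothetical scale: 1 (minor annoyance) to 3 (blocks the customer)

    @property
    def score(self) -> int:
        """Frequency multiplied by impact; higher means fix sooner."""
        return self.customers_per_month * self.impact


# Made-up sample gaps for illustration.
gaps = [
    KnowledgeGap("Change billing date", customers_per_month=50, impact=2),
    KnowledgeGap("Gift card balance", customers_per_month=5, impact=1),
    KnowledgeGap("Cancel an order", customers_per_month=20, impact=3),
]

ranked = sorted(gaps, key=lambda g: g.score, reverse=True)
for gap in ranked:
    print(f"{gap.score:>4}  {gap.topic}")
```

Here the billing date article comes first (score 100), followed by order cancellation (60) and gift card balance (5). The exact weights matter less than applying the same scale every time you plan updates.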

When updating your AI agent's training materials or responses, measure the improvement. After implementing a fix, check transcripts from the following weeks to see if similar conversations are now being resolved successfully. This before-and-after comparison validates your efforts and shows progress.
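The before-and-after comparison comes down to two resolution rates for the same topic. The sketch below assumes you tally the counts by hand while reviewing transcripts. The function name and the numbers are hypothetical, and the two-week periods are chosen so both fit inside the 30-day transcript window.

```python
def resolution_rate(resolved: int, total: int) -> float:
    """Share of conversations on a topic that ended as automated resolutions."""
    if total == 0:
        raise ValueError("no conversations to measure")
    return resolved / total


# Hypothetical tallies for one topic, counted by hand from transcripts.
before = resolution_rate(resolved=12, total=40)  # two weeks before the fix
after = resolution_rate(resolved=27, total=45)   # two weeks after the fix

change = (after - before) * 100
print(f"before: {before:.0%}, after: {after:.0%}, change: {change:+.0f} percentage points")
```

Comparing rates rather than raw counts keeps the result fair when conversation volume differs between the two periods.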

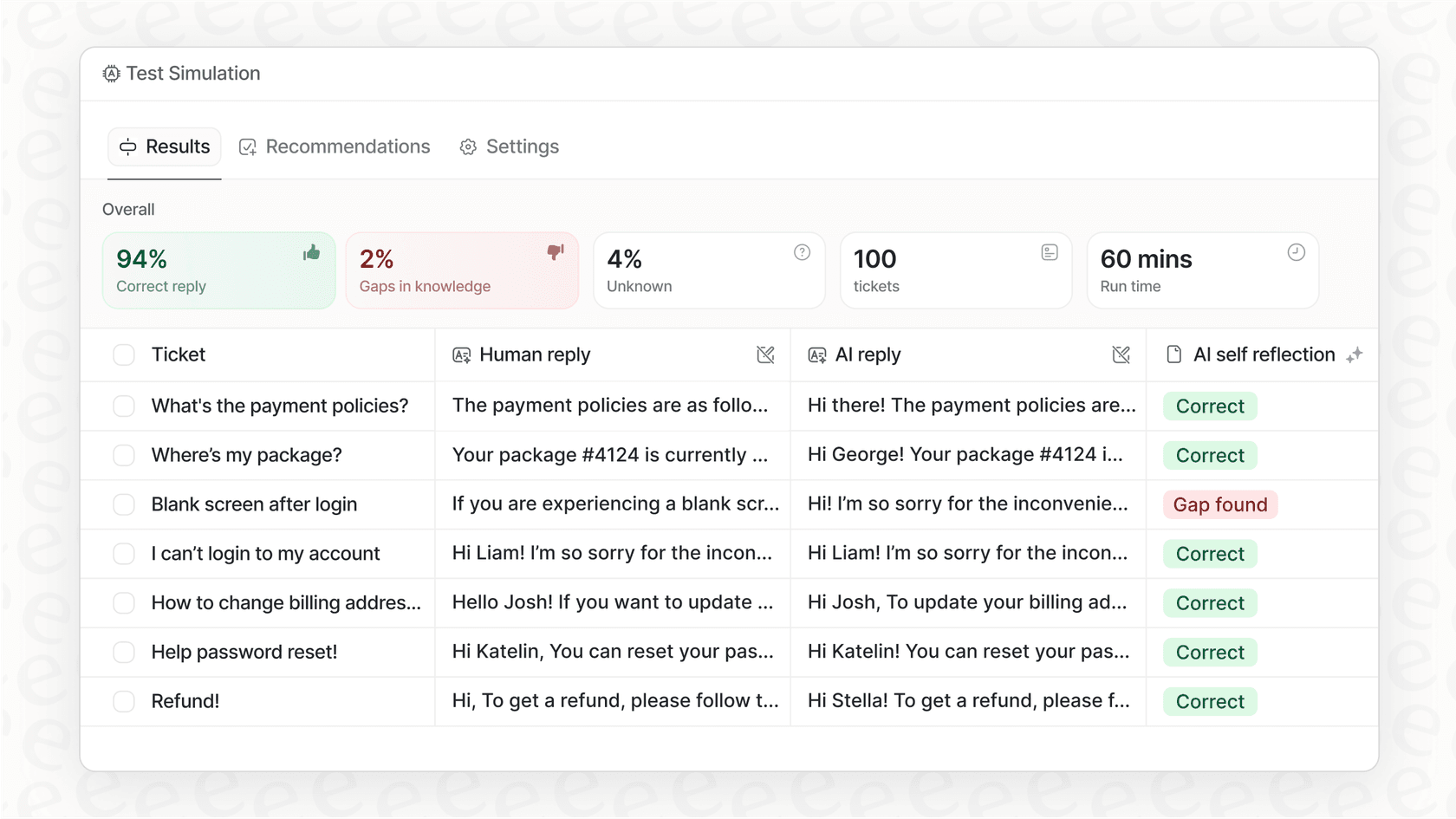

For teams looking to accelerate this process, eesel AI offers simulation capabilities. You can test proposed knowledge base updates against your historical tickets to see how they would have performed before going live. This lets you validate improvements with data rather than hoping they work.

Our Zendesk integration also enables automated actions based on transcript content. While Zendesk's native AI agent tickets are read-only and don't trigger workflows, eesel AI can apply tags, set priorities, and route tickets based on what was discussed in the conversation.

Common mistakes to avoid

Even with the best intentions, it's easy to fall into traps when reviewing transcripts. Here are the most common pitfalls:

Reviewing without a system. Randomly clicking through transcripts might surface interesting conversations, but it won't give you actionable trends. Define what you're looking for before you start, and track your findings consistently.

Focusing on individual cases instead of patterns. One customer having a bad experience is unfortunate. Fifty customers having the same bad experience is a problem you need to fix. Look for recurring themes rather than isolated incidents.

Not acting quickly enough. Transcript data has a shelf life. If you identify a knowledge gap but don't update your help center for three months, hundreds of customers may have the same frustrating experience in the meantime. Set SLAs for your improvement cycles.

Misunderstanding the 72-hour rule. Automated resolutions only appear in that tab after 72 hours of inactivity. A conversation that looks unresolved today might be classified as resolved three days from now. Don't panic if your numbers seem off; check back after the waiting period.

Expecting AI agent tickets to behave like regular tickets. They're read-only for a reason. They won't trigger your automations, they won't appear in Explore reports, and they can't be bulk edited. Understanding these limitations helps you work within them rather than fighting against them.

Taking your Zendesk AI agent conversation transcript analysis further

Zendesk's native transcript review is a solid foundation, but it has limitations. The 30-day window means you can't analyze long-term trends. The lack of bulk export makes it hard to perform large-scale analysis. And the read-only nature of AI agent tickets limits what you can automate.

This is where complementary tools can help. At eesel AI, we've built capabilities that extend what you can do with your conversation data:

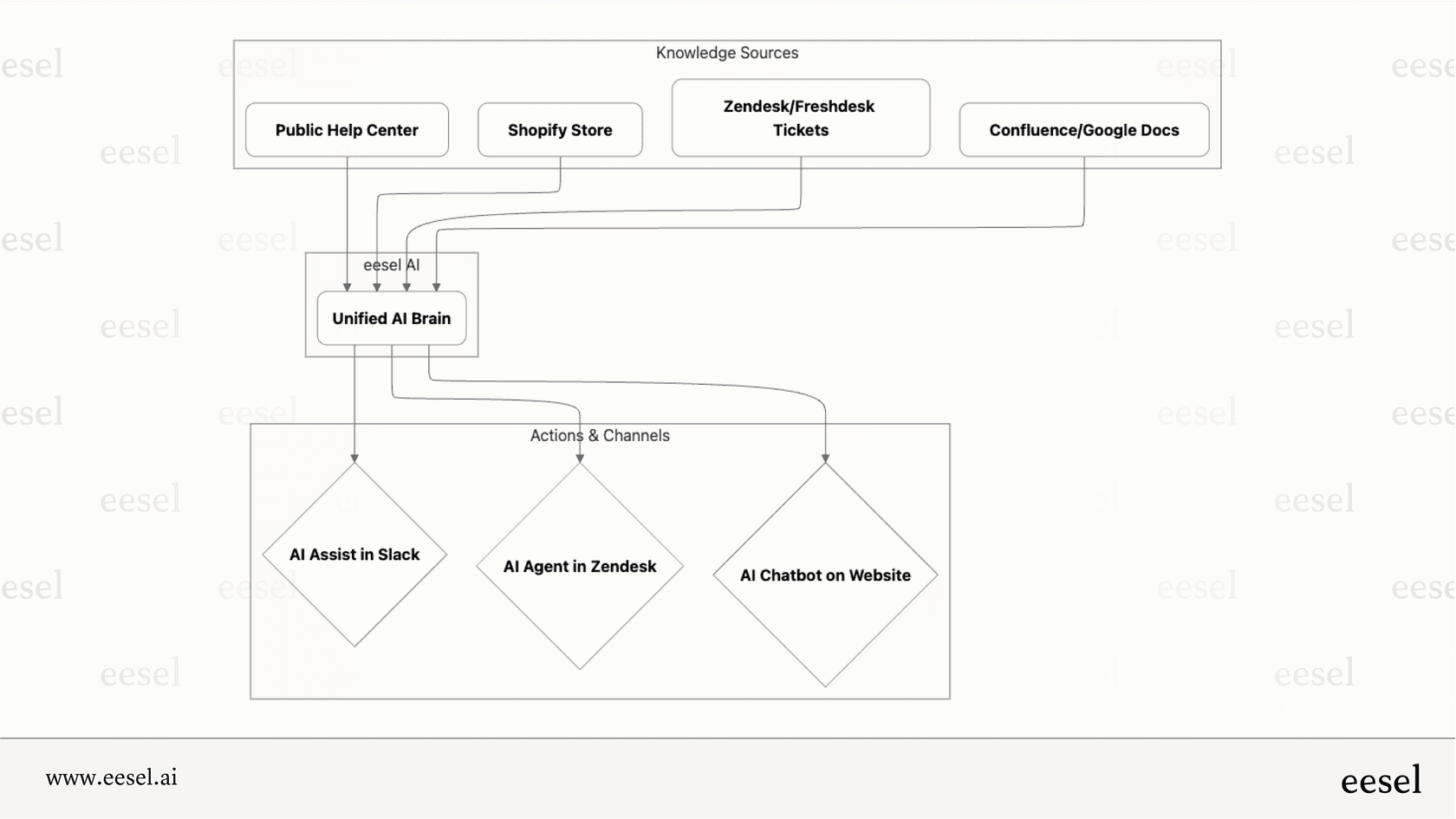

- Unified knowledge sources: Connect your Zendesk help center with internal documentation from Confluence, Google Docs, Notion, and more. This gives your AI agent a complete knowledge foundation to draw from.

- Advanced analytics: Identify trends across thousands of conversations, not just the ones you manually review. Spot emerging issues before they become major problems.

- Simulation testing: Test knowledge base updates and response changes against your historical tickets to predict their impact before going live.

- Automated actions: While Zendesk's AI agent tickets are read-only, eesel AI can take actions on regular tickets based on conversation analysis, including tagging, routing, and prioritization.

The goal isn't to replace Zendesk's native capabilities but to build on them. Use Zendesk for day-to-day transcript review and quality assurance. Use additional tools when you need deeper analysis, trend identification, or automated workflows.


Article by

Stevia Putri

Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.