How to automate ticket triage: a step-by-step guide for support teams

Stevia Putri

Katelin Teen

Last edited May 15, 2026

Every morning the inbox opens and every ticket is marked URGENT. Agents spend the first hour of their shift not solving problems - just sorting them. Between 15% and 25% of the tickets they assign will be reassigned at least once, adding roughly 47 minutes to each resolution. For a team processing 2,000 tickets a month at a 35% misroute rate, that's $329,000 a year in avoidable rework at $50/hour - not counting SLA penalties, churn from slow resolution, or the morale cost of skilled agents acting as inbox sorters.

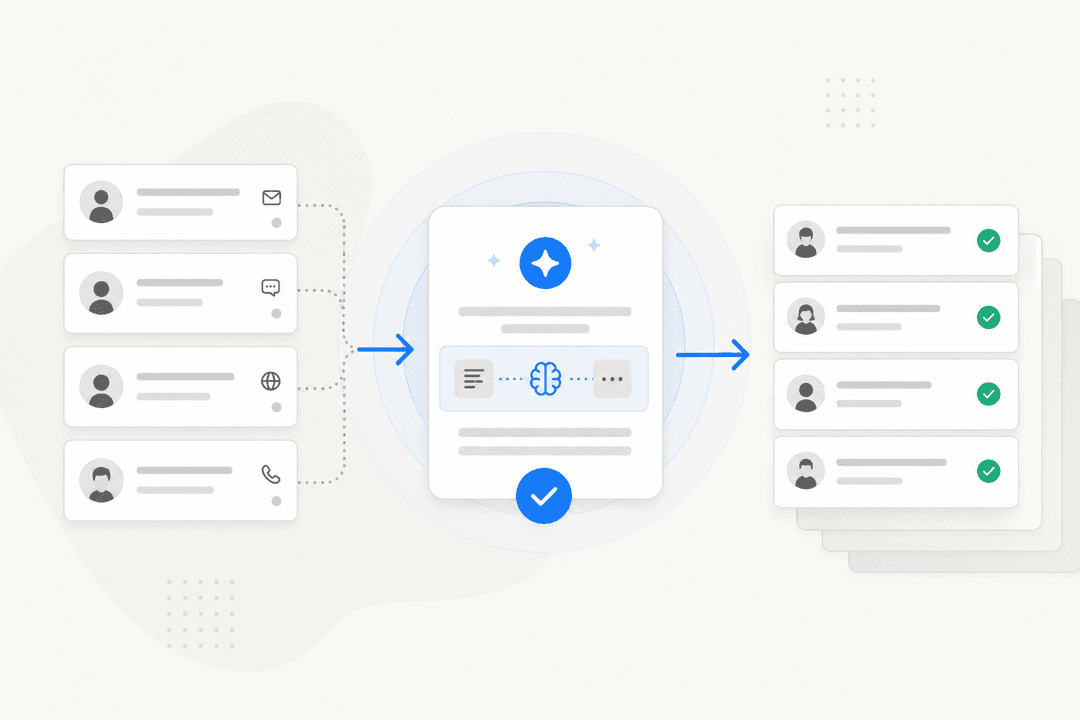

eesel AI's helpdesk agent reads every incoming ticket, classifies it, sets priority, routes it to the right queue, and flags escalation risks - all in under a second, before a human ever opens it. Teams using eesel's support automation typically reach 73%+ autonomous resolution in the first month. This guide covers exactly how to get there: what triage actually involves, where manual approaches hit their ceiling, and the nine steps to set up automated triage that holds up over time.

What ticket triage actually involves

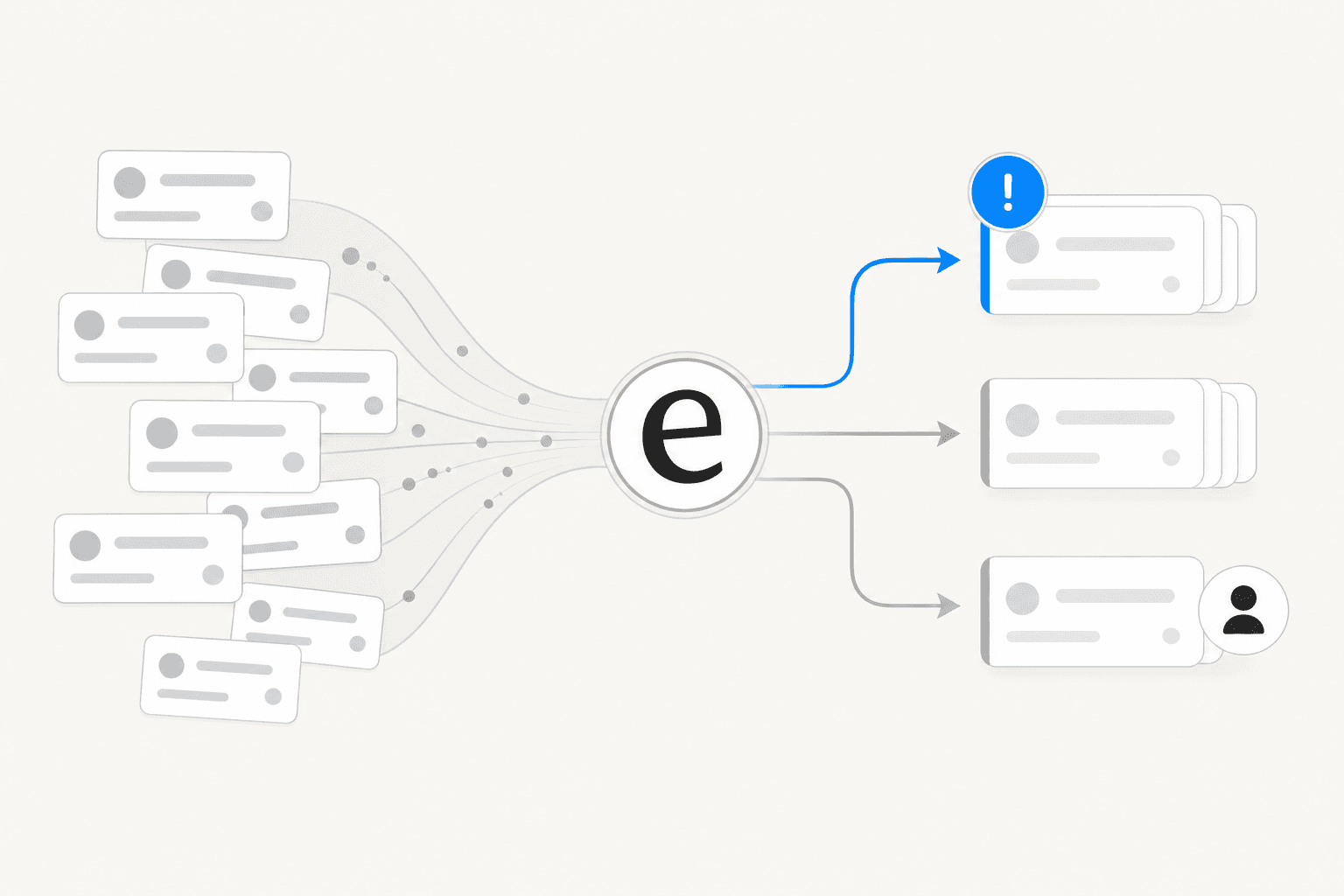

Triage is the work that happens between a ticket arriving and an agent starting to resolve it. It's four decisions, made for every single ticket:

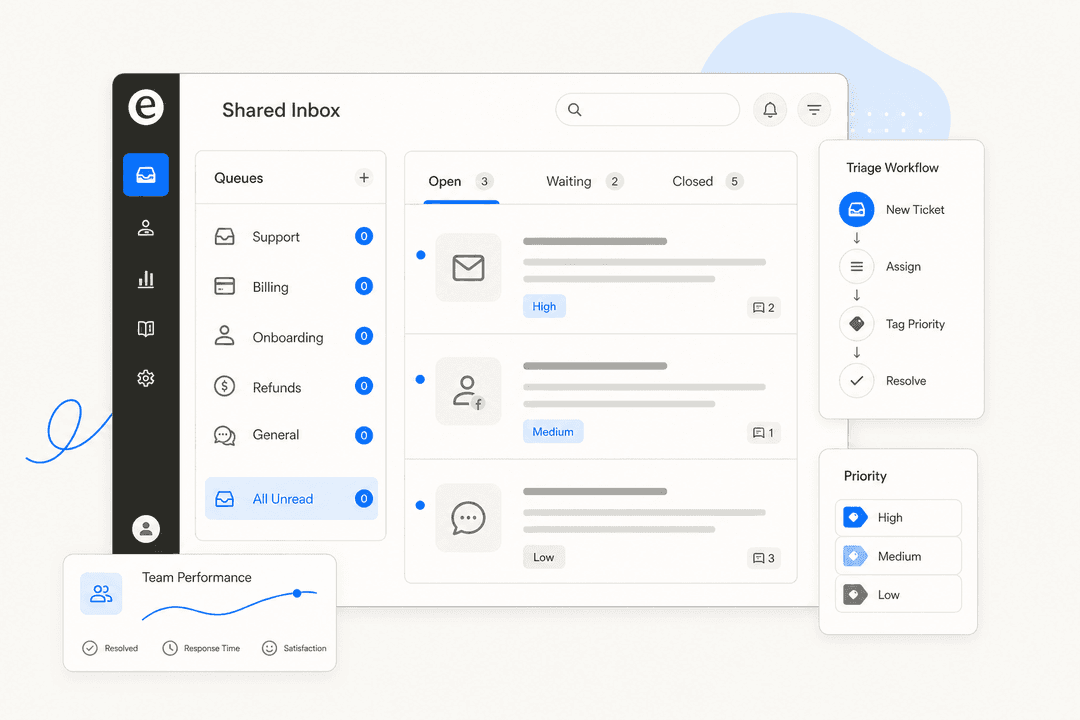

Classification. What is this ticket about? Product area, issue type, customer segment, root cause, sentiment. Without consistent classification, routing is guesswork and trend reports are meaningless. SentiSum describes this as building a "tagging taxonomy" - a defined set of categories with one-sentence definitions so every agent (and every AI) applies the same labels. At minimum: product area, issue type, urgency signal, sentiment, root cause, and customer segment.

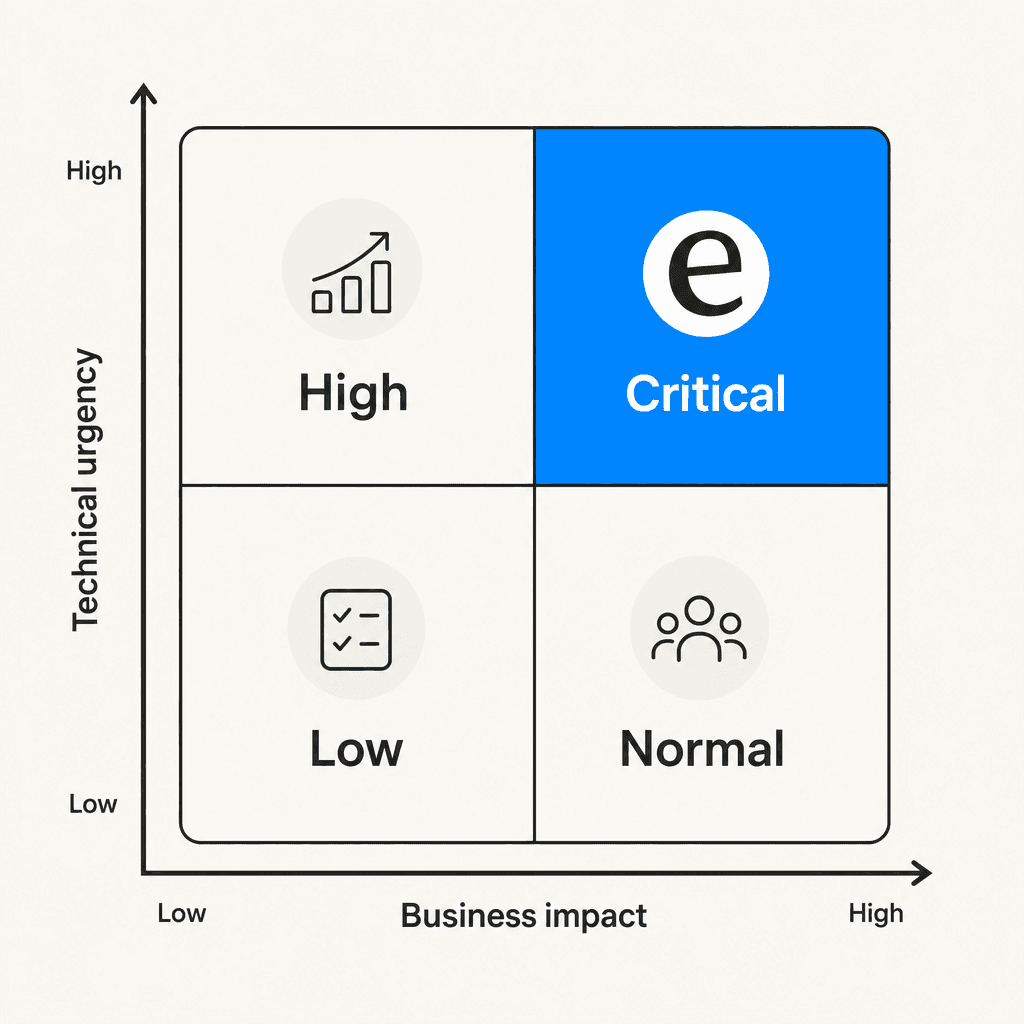

Prioritization. How urgent is this ticket relative to everything else open? The ITIL framework maps two variables: business impact (how many users or operations are affected - the customer's domain) and technical urgency (how severe the technical issue is - the support team's domain). Together they produce a priority tier. The key design rule: customers don't get to set their own urgency label. They report impact. The support team determines urgency.

Routing. Where does this ticket go? Queue, team, individual agent. Routing criteria typically include issue type, agent skill, customer tier (enterprise vs. SMB), language, and channel of origin.

Escalation. Does this ticket need to move to a higher tier before the situation gets worse? Manual escalation depends on an agent noticing a problem - a chain that breaks under volume, especially for high-sentiment tickets that don't look urgent at first glance.

The cost of getting any of these four decisions wrong is concrete. A team processing 2,000 tickets a month at a 35% misroute rate - 700 tickets reassigned, each adding 47 minutes of rework - runs a labor tab of $329,000 a year before a single SLA breach is counted. That's at $50/hour. Scale to $75/hour and the number climbs to nearly $500,000.
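The arithmetic behind that figure is easy to rerun with your own numbers. The sketch below is only a worked version of the calculation above; the function name and parameters are illustrative, not part of any tool.

```python
def annual_rework_cost(tickets_per_month, misroute_rate, rework_minutes, hourly_rate):
    """Yearly labor cost of the extra handling time on reassigned tickets."""
    misrouted_per_month = tickets_per_month * misroute_rate
    rework_hours_per_month = misrouted_per_month * rework_minutes / 60
    return rework_hours_per_month * 12 * hourly_rate


# The scenario from the text: 2,000 tickets, 35% misrouted, 47 minutes each.
print(round(annual_rework_cost(2000, 0.35, 47, 50)))  # 329000
print(round(annual_rework_cost(2000, 0.35, 47, 75)))  # 493500
```

Swap in your own volume, misroute rate, and loaded hourly cost to get a baseline before you change anything.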

Why manual and rule-based triage fall short

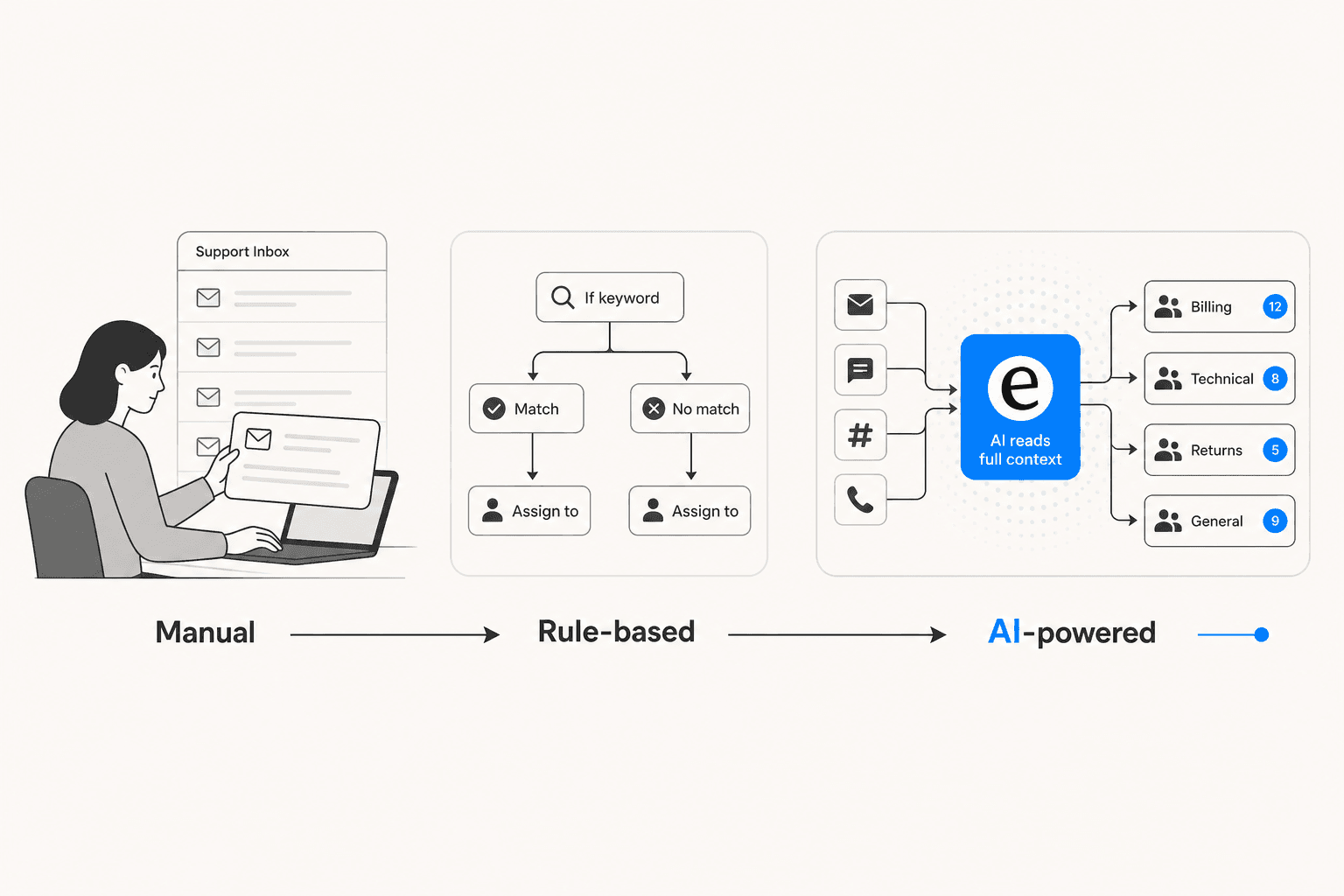

Most support teams start with one of two approaches. Both have hard ceilings.

Manual triage means a person - or a rotating triage hat among the team - reads every incoming ticket and makes all four decisions by hand. SentiSum documented GoCardless running two dedicated full-time agents just for ticket classification, using three severity tags (B1, B2, B3). The classification job was consistent enough that automating it would reclaim both FTEs. Manual triage runs 1-3 minutes per ticket, degrades under volume, and varies by agent mood and shift.

Rule-based automation is the obvious upgrade: if/then logic keyed off keywords, email domains, form fields, and subject lines. Every helpdesk offers this - Zendesk triggers, Freshdesk automations, Jira SLA queues. It handles simple, stable ticket types well and is fast to set up. The ceiling is low: IrisAgent benchmarks rules-based routing accuracy at 40-50%. Rules break on synonyms and typos, fail on multi-issue tickets, have no sentiment awareness, and require constant manual updates as the product changes. One new feature launch triggers a new round of rule maintenance.

An observation from an 86-comment r/msp thread on triage overload captures the operational failure mode common to both:

"The MOST important lesson I learned far too late: you MUST TRIAGE ALL TICKETS BEFORE DISPATCHING ANY. Triage all of them, then dispatch all of them. Not one at a time." -- u/CmdrRJ-45

When triaging and dispatching happen simultaneously - the default in any manual or simple rules-based setup - urgent tickets get buried under earlier, non-urgent arrivals. The inbox becomes a queue ordered by arrival time, not priority.
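As a rough illustration of "triage all, then dispatch all", the sketch below assigns a priority to every ticket in a first pass and only then orders the queue. The `Ticket` class, the `classify` callback, and the tier ranking are assumptions made for the example, not any helpdesk's API.

```python
from dataclasses import dataclass

# Assumed tier ordering: lower rank is dispatched first.
PRIORITY_RANK = {"Critical": 0, "High": 1, "Normal": 2, "Low": 3}


@dataclass
class Ticket:
    ticket_id: str
    arrival_order: int
    priority: str = ""  # empty until the ticket has been triaged


def triage_then_dispatch(tickets, classify):
    """Triage the whole queue first, then return it in dispatch order."""
    # Pass 1: give every ticket a priority before anything is dispatched.
    for ticket in tickets:
        ticket.priority = classify(ticket)
    # Pass 2: order by priority; arrival order only breaks ties.
    return sorted(tickets, key=lambda t: (PRIORITY_RANK[t.priority], t.arrival_order))


queue = [Ticket("T1", 1), Ticket("T2", 2), Ticket("T3", 3)]
assumed_priorities = {"T1": "Low", "T2": "Normal", "T3": "Critical"}
ordered = triage_then_dispatch(queue, lambda t: assumed_priorities[t.ticket_id])
print([t.ticket_id for t in ordered])  # ['T3', 'T2', 'T1']
```

The last ticket to arrive is dispatched first because it is the only Critical one, which is exactly what an arrival-ordered inbox gets wrong.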

AI-powered triage breaks both ceilings. It reads full ticket content, conversation history, customer sentiment, and account context, then makes all four triage decisions in under a second. IrisAgent benchmarks AI-powered routing at 85-95% accuracy in mature deployments - against the 40-50% ceiling for rules - with 90%+ tag consistency and 50% fewer reassignments.

How to automate ticket triage with eesel AI

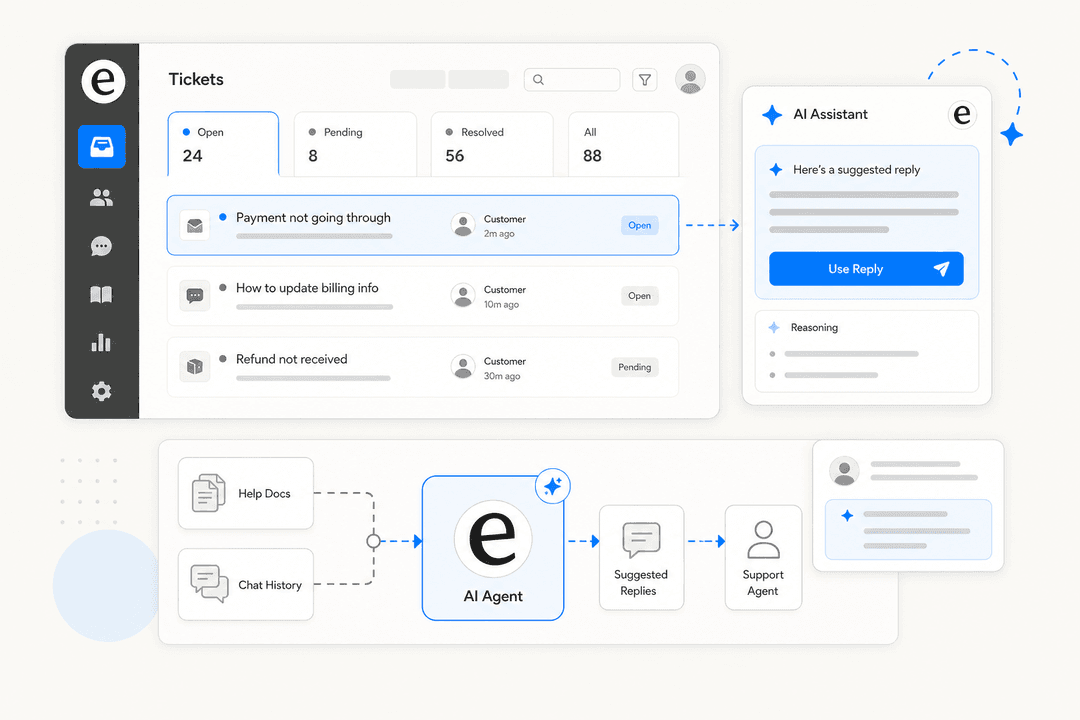

eesel AI's helpdesk agent connects to your existing helpdesk - Zendesk, Freshdesk, Gorgias, HubSpot, and others - and learns your operation immediately from your past tickets, macros, help center articles, and connected documentation. No manual training, no configuration wizards.

For triage, eesel handles all four decisions:

- Classification: Tags tickets by issue type, product area, sentiment, and customer segment based on your existing taxonomy

- Prioritization: Scores each ticket against your defined priority matrix, accounting for customer tier, SLA proximity, and real-time sentiment

- Routing: Assigns tickets to the right queue or agent based on skills, language, capacity, and channel

- Escalation: Watches for churn signals, SLA risk, and sentiment deterioration, and escalates before a situation compounds

What separates eesel from a rules engine is the simulation mode. Before going live, eesel runs on thousands of your historical tickets and shows exactly how it would have triaged each one - so you can compare the AI's decisions against what your agents actually did, calibrate where they diverge, and go live with confidence. No live customer tickets at risk during testing.

Mature eesel deployments reach up to 81% autonomous resolution. Gridwise resolved 73% of tier 1 requests in the first month. Smava runs 100,000+ tickets a month through eesel in German, fully automated.

The rollout is deliberately progressive: start with eesel drafting responses for agent review on a few ticket types, verify accuracy, then expand its autonomy as trust builds - the same way you'd promote any new team member based on demonstrated performance.

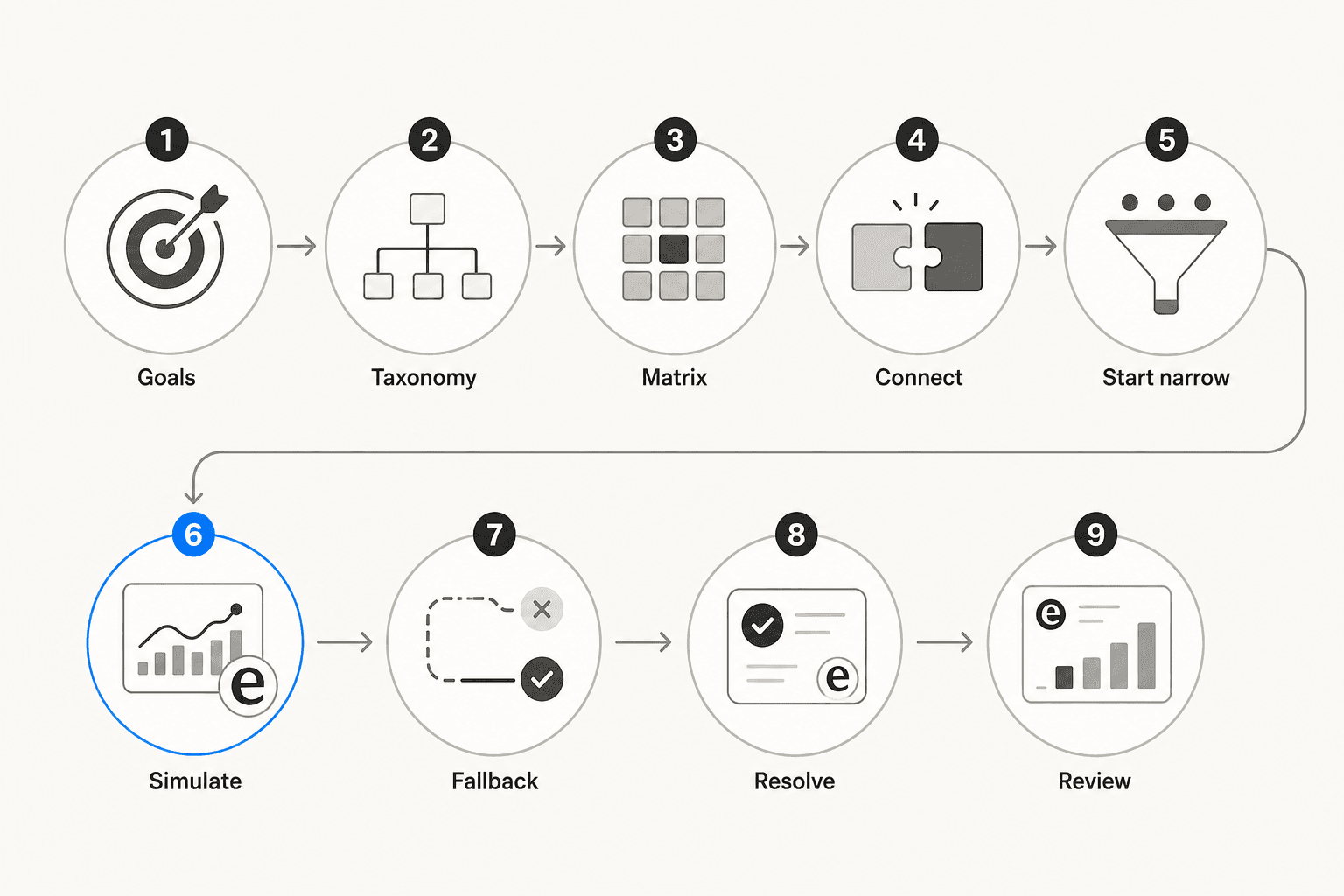

Setting up automated triage: the 9 steps

Step 1: Define your goals and SLAs

Before touching any tooling, decide what success looks like. SentiSum recommends anchoring on specific, measurable questions: Which customer segment needs faster response? Which ticket type causes the most SLA breaches? What's the current first-response time for Critical tickets?

Set explicit SLA targets per priority tier. eesel AI's best practices suggest something like: Critical = 15-minute response, High = 1 hour, Normal = 8 hours, Low = 24 hours. The exact numbers matter less than the consistency. Once SLAs are defined, triage automation has a clear success condition.
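Once the targets are written down, they can live in one small table that everything else reads from. The sketch below uses the example targets above; the function names are hypothetical, and real helpdesks usually add business-hours calendars that this ignores.

```python
from datetime import datetime, timedelta

# First-response targets per priority tier, taken from the example above.
SLA_TARGETS = {
    "Critical": timedelta(minutes=15),
    "High": timedelta(hours=1),
    "Normal": timedelta(hours=8),
    "Low": timedelta(hours=24),
}


def first_response_due(created_at, priority):
    """Deadline for the first response, ignoring business hours."""
    return created_at + SLA_TARGETS[priority]


def is_breached(created_at, priority, now):
    """True once the first-response deadline has passed."""
    return now > first_response_due(created_at, priority)


created = datetime(2026, 5, 15, 9, 0)
print(first_response_due(created, "Critical"))  # 2026-05-15 09:15:00
print(is_breached(created, "Critical", datetime(2026, 5, 15, 9, 20)))  # True
```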

Step 2: Build a clean tagging taxonomy

Map every ticket category you need to act on, and write a one-sentence definition for each. IrisAgent's guide is direct: "If your tag taxonomy has 200 overlapping categories, automation just makes the mess faster. Clean up the taxonomy before training any model."

Collapse redundant tags, delete unused ones, and standardize before connecting any automation. Cover at minimum: product area, issue type, urgency signal, sentiment, root cause, and customer segment. The AI's accuracy ceiling is set by the clarity of the categories - a fuzzy taxonomy produces a fuzzy model.
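One way to keep a taxonomy honest is to store it as data, with a one-sentence definition per tag, and check every tagged ticket against it. The dimensions below are the six listed above; the individual tags and their definitions are made-up placeholders, and `validate_tags` is a hypothetical helper.

```python
# Each dimension maps a tag to its one-sentence definition (placeholder content).
TAXONOMY = {
    "product_area": {"billing": "Invoices, payments, and refunds.",
                     "login": "Sign-in, passwords, and account access."},
    "issue_type": {"bug": "The product behaves differently from the documentation.",
                   "how_to": "The customer asks how to do something that works."},
    "urgency_signal": {"blocked": "The customer cannot work and has no workaround.",
                       "degraded": "The customer can work, but with friction."},
    "sentiment": {"negative": "The customer expresses frustration or anger.",
                  "neutral": "No strong emotion is expressed."},
    "root_cause": {"product_defect": "Caused by a defect on our side.",
                   "user_error": "Caused by a misunderstanding or misconfiguration."},
    "customer_segment": {"enterprise": "Accounts on an enterprise contract.",
                         "smb": "All other paying accounts."},
}


def validate_tags(tags):
    """Return a list of problems; an empty list means the tags fit the taxonomy."""
    problems = []
    for dimension, allowed in TAXONOMY.items():
        value = tags.get(dimension)
        if value is None:
            problems.append(f"missing {dimension}")
        elif value not in allowed:
            problems.append(f"unknown {dimension} tag: {value}")
    return problems


print(validate_tags({"product_area": "billing", "issue_type": "payment problem"}))
```

Running the same check over a sample of past tickets is a quick way to find the overlapping or unofficial tags that need collapsing before any automation is connected.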

Step 3: Create a priority matrix

Build a 2×2 grid mapping impact × urgency to four tiers: Critical, High, Normal, Low. eesel AI's guide and multiple r/msp practitioners recommend this approach, which is drawn from the ITIL framework.

Critical design rule: customers control impact ("how many users are blocked?"), your team controls urgency ("how severe is the technical issue?"). Never let customers self-assign a priority label. Use structured intake questions that produce objective answers - "Is there a workaround?" and "How many users are affected?" - and map those answers to a tier automatically.
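A minimal sketch of that mapping is shown below. The text does not say which mixed cell becomes High and which becomes Normal, so that assignment, the ten-user impact threshold, and the use of "no workaround" as the urgency signal are all assumptions to replace with your own policy.

```python
# (impact, urgency) -> tier. The two mixed cells are an assumed policy choice.
PRIORITY_MATRIX = {
    ("high", "high"): "Critical",
    ("high", "low"): "High",
    ("low", "high"): "Normal",
    ("low", "low"): "Low",
}


def priority_for(users_affected, has_workaround, impact_threshold=10):
    """Map objective intake answers to a tier; nobody types a priority label."""
    # Impact comes from the customer's answer to "How many users are affected?"
    impact = "high" if users_affected >= impact_threshold else "low"
    # Urgency is derived from "Is there a workaround?" (assumed proxy).
    urgency = "low" if has_workaround else "high"
    return PRIORITY_MATRIX[(impact, urgency)]


print(priority_for(users_affected=50, has_workaround=False))  # Critical
print(priority_for(users_affected=1, has_workaround=True))    # Low
```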

Step 4: Connect your helpdesk and import ticket history

Install eesel AI via your helpdesk's marketplace and import 6-12 months of resolved tickets. IrisAgent recommends this historical import as the fastest path to accurate tagging from day one - the model trains on your actual data, terminology, and resolution patterns rather than a generic industry baseline.

For rule-based triage: identify the triggers (email domain, subject keywords, channel) and configure them directly in your helpdesk settings.
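To make the trade-off concrete, here is roughly what keyword rules look like when written out. The queue names and keywords are invented for the example; in practice you would express the same logic in your helpdesk's own trigger settings rather than in code.

```python
# Ordered list of (queue, keywords); the first matching rule wins.
RULES = [
    ("billing", ("invoice", "refund", "charge")),
    ("account_access", ("password", "login", "locked out")),
]


def route_by_rules(subject, body, default_queue="general"):
    """Return the queue of the first rule whose keyword appears in the ticket."""
    text = f"{subject} {body}".lower()
    for queue, keywords in RULES:
        if any(keyword in text for keyword in keywords):
            return queue
    return default_queue


print(route_by_rules("Refund for double charge", ""))  # billing
# A synonym ("sign in") plus a typo ("pasword") slips past every rule.
print(route_by_rules("Can't sign in", "It keeps rejecting my pasword"))  # general
```

The second ticket is clearly an account-access problem, but it lands in the default queue, which is the synonym-and-typo failure that caps rule-based accuracy.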

Step 5: Start with your 3-5 highest-volume intents

Don't automate everything on day one. IrisAgent is explicit: pick the 3-5 highest-volume intents - password resets, order status, billing questions - and prove 90%+ accuracy on those before expanding. Full coverage from day one means mediocre accuracy everywhere, which erodes team trust before it has a chance to build.
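Finding those intents is a counting exercise over tickets that already carry an intent label. The sketch below assumes you can export such a list; the labels and the `top_intents` helper are illustrative.

```python
from collections import Counter


def top_intents(intent_labels, n=5):
    """Return (intent, count, share_of_volume) for the n most common intents."""
    counts = Counter(intent_labels)
    total = sum(counts.values())
    return [(intent, count, count / total) for intent, count in counts.most_common(n)]


# Placeholder export: one intent label per resolved ticket.
labels = ["password_reset"] * 5 + ["order_status"] * 3 + ["billing"] * 2 + ["other"]
for intent, count, share in top_intents(labels, n=3):
    print(f"{intent}: {count} tickets ({share:.0%})")
```

The share column also tells you how much of your volume the first rollout will actually touch.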

Step 6: Simulate before going live

Run your triage configuration on historical tickets in simulation mode before it touches a live customer. eesel AI's simulation feature shows how the AI would have handled thousands of past tickets - which ones it would have routed correctly, which incorrectly, and which it would have escalated - so you can calibrate before launch. This removes the risk of discovering problems through customer complaints.
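Conceptually, a simulation run is a replay: apply the triage logic to past tickets and compare its answer with what agents actually did. The sketch below shows that comparison in generic form. It is not eesel AI's simulation feature, and `predict_queue` stands in for whatever triage logic you are testing.

```python
def simulate(history, predict_queue):
    """Replay past tickets and compare predictions with the agents' choices.

    history: list of (ticket_text, queue_the_agents_chose) pairs.
    Returns (agreement_rate, disagreements), where each disagreement is
    (ticket_text, predicted_queue, actual_queue).
    """
    disagreements = []
    matches = 0
    for text, actual in history:
        predicted = predict_queue(text)
        if predicted == actual:
            matches += 1
        else:
            disagreements.append((text, predicted, actual))
    agreement = matches / len(history) if history else 0.0
    return agreement, disagreements


# Toy stand-in for the logic under test.
def toy_predictor(text):
    return "billing" if "refund" in text.lower() else "general"


past = [("Refund please", "billing"), ("App crashes", "technical"), ("Hi", "general")]
agreement, diffs = simulate(past, toy_predictor)
print(round(agreement, 2))  # 0.67
print(diffs)  # [('App crashes', 'general', 'technical')]
```

The disagreement list is the useful part: each row is either a gap in the triage logic or a case where the historical decision was itself wrong.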

Step 7: Design the fallback path

When the AI's confidence score falls below your threshold, it should route the ticket to human triage with its reasoning attached - not force a low-confidence decision. IrisAgent calls this the trust mechanism: hard-fail fallbacks destroy trust faster than occasional misrouting. The fallback queue is also the safety net for novel tickets that don't match training patterns.
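A fallback like this comes down to one threshold check. In the sketch below, the `Prediction` shape, the `human_triage` queue name, and the 0.8 threshold are assumptions for illustration; use whatever confidence signal and queue names your tooling actually exposes.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    queue: str
    confidence: float  # assumed to be a score between 0 and 1
    reasoning: str


def apply_fallback(prediction, threshold=0.8):
    """Accept confident predictions; send the rest to humans with context."""
    if prediction.confidence >= threshold:
        return {"queue": prediction.queue, "needs_human": False, "note": ""}
    # Below the threshold: do not force a decision, but keep the reasoning attached.
    note = (f"AI suggested {prediction.queue} "
            f"({prediction.confidence:.0%}): {prediction.reasoning}")
    return {"queue": "human_triage", "needs_human": True, "note": note}


uncertain = Prediction("billing", 0.55, "mentions an invoice and a login error")
print(apply_fallback(uncertain)["queue"])  # human_triage
print(apply_fallback(uncertain)["note"])
```

Attaching the suggestion and its reasoning means the human starts from a draft decision instead of from zero.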

Step 8: Resolve at triage, not after

Triage is the first opportunity to resolve a ticket, not just sort it. Wrangle and DevRev both describe agentic AI closing out known-issue responses, how-to queries (pulling from the knowledge base), account lookups, and feature request acknowledgments - without routing to an agent at all. DevRev customer BILL achieved 70%+ autonomous resolution by building resolution into the triage step. The benchmark for mature AI triage is around 60% auto-resolution.

Step 9: Close the loop weekly

Every manual correction an agent makes after an incorrect routing is a training signal. IrisAgent recommends reviewing mislabeled tickets weekly and feeding corrections back into the model. Teams that maintain this cadence reach 100% auto-triage coverage within 60-90 days. Skip the loop for a quarter and accuracy quietly decays - what IrisAgent calls "automation drift" - as the product evolves and the ticket mix shifts.
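A simple way to notice drift is to track what share of triaged tickets agents had to correct each week and compare recent weeks against an earlier baseline. The sketch below is one possible check; the four-week window and three-point tolerance are arbitrary assumptions, not figures from IrisAgent or eesel AI.

```python
def weekly_accuracy(weeks):
    """weeks: list of (tickets_triaged, tickets_corrected), oldest first."""
    return [1 - corrected / triaged for triaged, corrected in weeks if triaged]


def is_drifting(accuracies, window=4, tolerance=0.03):
    """True when the recent average falls below the early baseline by more than tolerance."""
    if len(accuracies) <= window:
        return False  # not enough history to compare two periods
    baseline = sum(accuracies[:window]) / window
    recent = sum(accuracies[-window:]) / window
    return baseline - recent > tolerance


# Placeholder data: corrections creep up from 25 to 60 per 500 tickets.
weeks = [(500, 25)] * 4 + [(500, 60)] * 4
print(is_drifting(weekly_accuracy(weeks)))  # True
```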

Common mistakes that kill automated triage projects

Most triage automation failures are process failures, not model failures.

Letting customers self-report priority. Kirsty Pinner, Head of Product at a customer service analytics firm, via SentiSum: "One of the things most companies get wrong is letting customers self-report issues on forms. It causes inherent distrust in any subsequent analysis." When every customer can mark their own ticket Critical, the word stops meaning anything. Use structured intake questions tied to objective criteria instead.

Automating a messy taxonomy. If your tags are overlapping and inconsistently applied before automation, they will be overlapping and inconsistently applied faster after automation. The AI doesn't fix the taxonomy - it amplifies it.

Trying to cover everything on day one. What happens when teams rush to full coverage, from r/automation:

"A few errors is enough to make your team lose trust in your agent, which is the worst thing that can happen. Once they don't trust it, they'll double-check everything and the automation is basically dead." -- u/crow_thib

Fuzzy escalation logic. This is the source of most production AI triage complaints:

"Most issues are not model issues. They're policy issues. If your escalation logic is fuzzy, the AI just amplifies the fuzziness." -- u/DFSautomations, r/automation

Before blaming the model for bad escalation decisions, check whether your escalation rules are clear enough for a human to follow consistently. If they're not, no amount of AI configuration will fix them.

Dispatching before triaging the full queue. From r/msp: triage all tickets in the queue first, then dispatch. One-at-a-time dispatching buries urgent tickets under non-urgent ones that simply arrived earlier.

Skipping the weekly correction review. Accuracy decays as your product evolves and ticket mix shifts. The correction loop is what keeps the model current. Without it, you're running on an outdated model and won't know until SLA breaches start showing up.

Try eesel AI

eesel AI's helpdesk agent handles ticket triage end-to-end - classification, prioritization, routing, and escalation - on top of your existing helpdesk. You connect it, run simulations on past tickets to verify quality before going live, and expand its autonomy at your own pace as it earns trust. Pricing is task-based at $0.40 per regular ticket, with no credit card required for the free trial.

Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.