AI helpdesk implementation guide (2026)

Stevia Putri

Katelin Teen

Last edited May 15, 2026

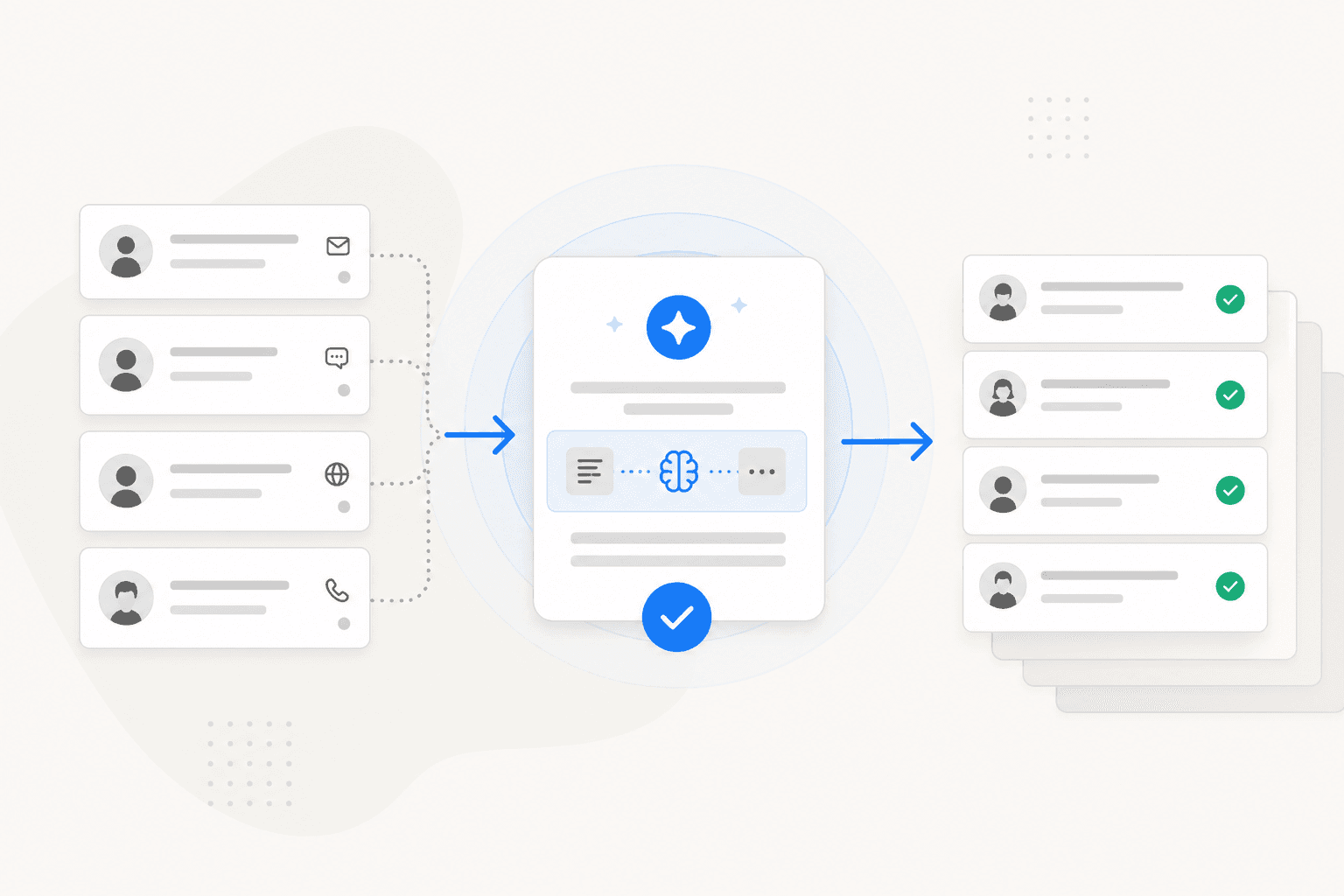

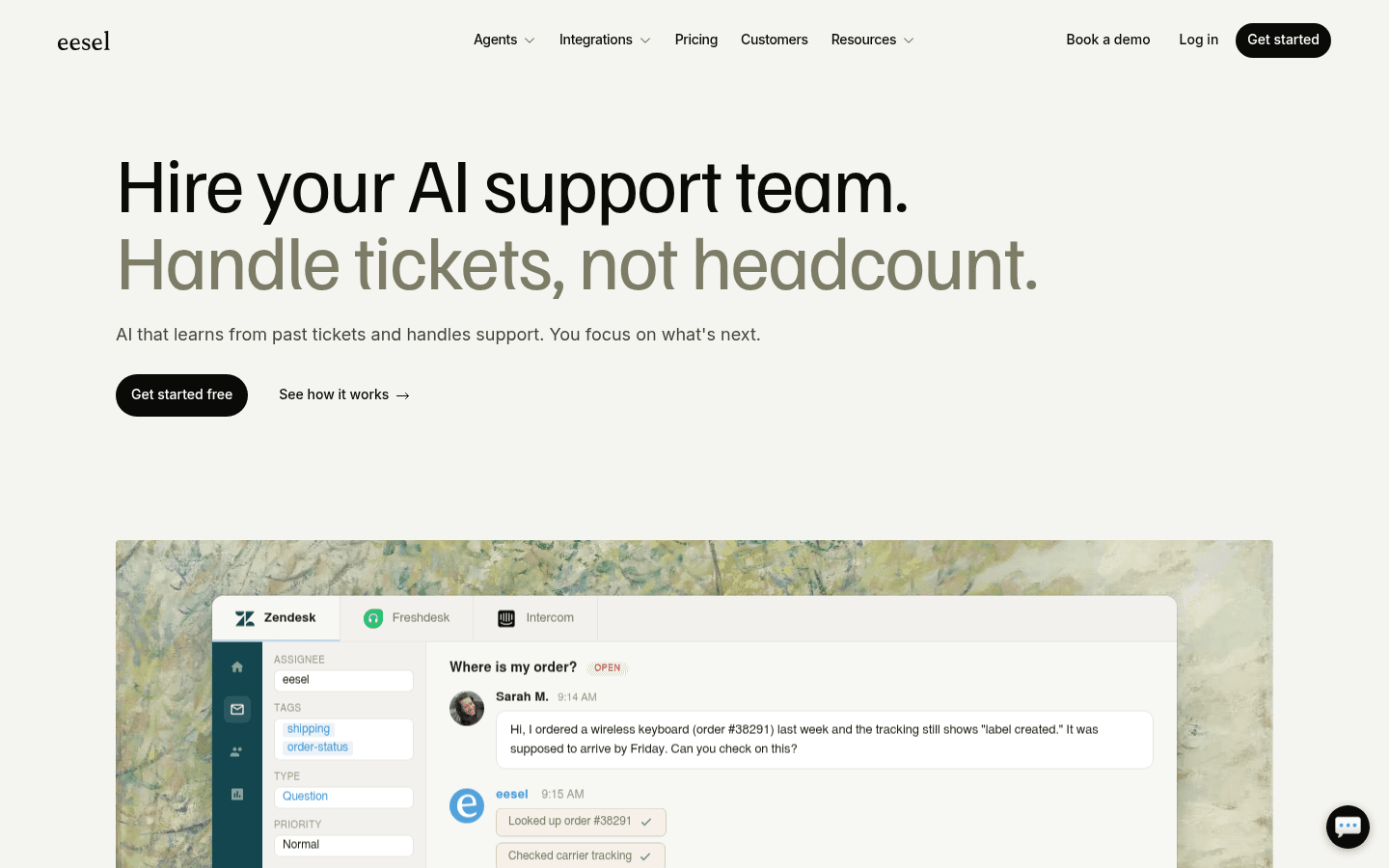

Most support teams have done the same math: ticket volume is climbing, headcount isn't, and hiring more agents doesn't scale forever. AI changes that equation - but only when the implementation is done carefully. A rushed rollout produces an AI that confidently sends wrong answers and erodes customer trust. A planned one produces an AI that handles 40-60% of your volume within the first month and keeps improving.

Tools like eesel AI have made the technical side genuinely fast - under 15 minutes to connect, same-day go-live possible. But "fast to connect" and "implemented well" are different things. This guide covers the full arc: what to sort out before you start, how to pick the right tool, how to prepare your knowledge base, how to test before customers see the AI, and how to measure results after you're live.

How to implement AI in your helpdesk

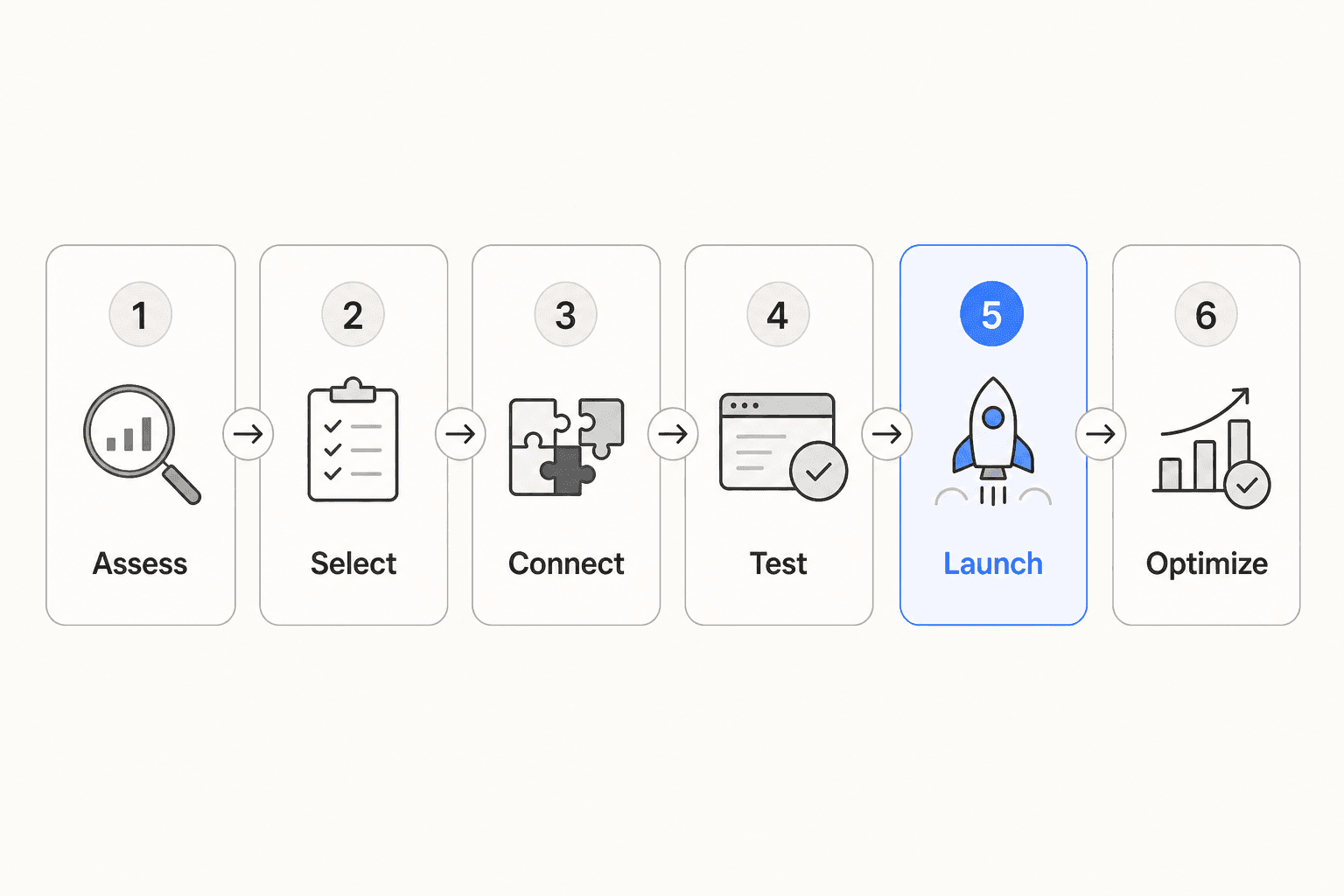

The seven phases below run in sequence - each builds on what comes before it. Skipping any one of them doesn't save time; it moves the consequences to a later stage where they're harder and more expensive to fix.

Phase 1: Assess your current support operation

You cannot skip this phase. The quality of your AI's answers depends directly on the quality of your knowledge base and how clearly you've defined your scope. Both require honest assessment before any tool gets connected.

Categorize your ticket volume. Pull the last 30 days of tickets and sort them into categories: billing questions, password resets, product how-tos, shipping and order status, bug reports, feature requests, account changes. What percentage falls into each bucket? Categories with high volume and predictable answers are where AI performs best - and where to start. Bug reports and relationship-sensitive escalations are where AI should not start.
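As a rough illustration of the category breakdown described above, the sketch below counts tickets per category and reports each bucket's share. It assumes you have already exported or hand-labelled a category for each ticket; the `category_shares` helper and the sample labels are hypothetical and not part of any helpdesk API.

```python
from collections import Counter

def category_shares(categories):
    """Return (category, count, percent) tuples, highest volume first."""
    counts = Counter(categories)
    total = sum(counts.values())
    if total == 0:
        return []
    return [
        (name, count, round(100 * count / total, 1))
        for name, count in counts.most_common()
    ]

# Hypothetical category labels for tickets from the last 30 days.
tickets = (
    ["password reset"] * 5
    + ["billing"] * 3
    + ["bug report"] * 2
)

shares = category_shares(tickets)
for name, count, pct in shares:
    print(f"{name}: {count} tickets ({pct}%)")
```

The categories at the top of this list that also have predictable answers are the natural starting scope.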

Assess knowledge base quality. For your top five ticket categories, ask: does accurate, current documentation exist somewhere? A help center article, a macro, a Google Doc, a Confluence page - any of these count. If the answer is "we handle this by memory" or "those docs are two years out of date," note it. Every gap you identify now is a gap the AI will expose to customers later.

Define success metrics before you begin. The three most useful:

- Deflection rate: percentage of tickets handled without human involvement. Baseline this first so you can measure change accurately.

- First response time: time from ticket creation to first reply. AI reduces this to near-zero for automated tickets.

- CSAT by resolution type: customer satisfaction for AI-handled tickets versus human-handled ones. This tells you whether quality is holding as you expand AI scope.

Setting these upfront means you have clear criteria for "working" rather than a vague impression that things seem okay.
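One way to baseline the three metrics above is sketched here. The ticket records and field names (`created`, `first_reply`, `resolved_by`, `csat`) are assumptions made for illustration; a real helpdesk export will use its own schema, and using the median for first response time is a choice made for this sketch rather than a requirement.

```python
from datetime import datetime
from statistics import mean, median

# Hypothetical ticket records; field names are illustrative assumptions.
tickets = [
    {"created": datetime(2026, 5, 1, 9, 0), "first_reply": datetime(2026, 5, 1, 9, 1),
     "resolved_by": "ai", "csat": 5},
    {"created": datetime(2026, 5, 1, 10, 0), "first_reply": datetime(2026, 5, 1, 10, 1),
     "resolved_by": "ai", "csat": 4},
    {"created": datetime(2026, 5, 1, 11, 0), "first_reply": datetime(2026, 5, 1, 11, 45),
     "resolved_by": "human", "csat": 4},
    {"created": datetime(2026, 5, 1, 12, 0), "first_reply": datetime(2026, 5, 1, 13, 0),
     "resolved_by": "human", "csat": None},  # customer left no rating
]

def deflection_rate(tickets):
    """Percentage of tickets handled without human involvement."""
    ai_handled = sum(1 for t in tickets if t["resolved_by"] == "ai")
    return 100 * ai_handled / len(tickets)

def median_first_response_minutes(tickets):
    """Median minutes from ticket creation to first reply."""
    return median(
        (t["first_reply"] - t["created"]).total_seconds() / 60 for t in tickets
    )

def csat_by_resolution_type(tickets):
    """Average CSAT per resolver type, skipping unrated tickets."""
    scores = {}
    for t in tickets:
        if t["csat"] is not None:
            scores.setdefault(t["resolved_by"], []).append(t["csat"])
    return {resolver: mean(values) for resolver, values in scores.items()}

print(deflection_rate(tickets))
print(median_first_response_minutes(tickets))
print(csat_by_resolution_type(tickets))
```

Running the same functions on a pre-launch export and again after launch gives a like-for-like comparison.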

Phase 2: Choose your AI helpdesk tool

The right choice depends on your existing helpdesk, your knowledge sources, and how much control you need over AI behavior before, during, and after launch.

What to look for

A few criteria separate good implementations from mediocre ones:

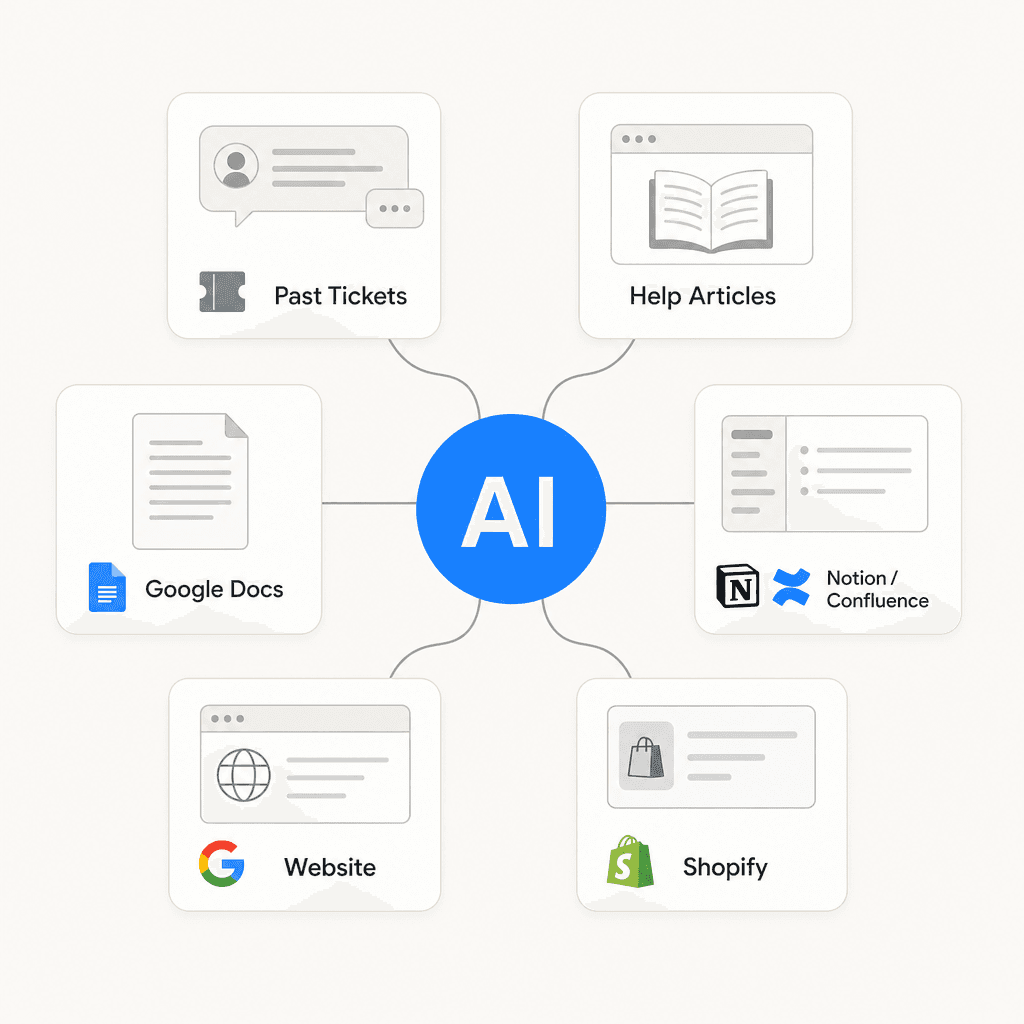

- Knowledge source breadth. Does the AI pull only from your helpdesk's built-in articles, or can it connect to Google Docs, Confluence, Notion, Slack, your website? The wider the sources, the higher your coverage ceiling.

- Graduated autonomy. Can you start in draft-only mode and expand gradually? Tools that only offer "fully on" or "fully off" remove your ability to verify quality before customers are affected.

- Pre-deployment simulation. Can you run the AI against historical tickets before going live? This is the highest-value risk-reduction feature in AI helpdesk implementations. Without it, you're guessing.

- Natural language configuration. Can you define escalation rules in plain English - "always route billing disputes to senior support" - or does configuration require a workflow builder and developer intervention?

- Platform fit. Does the tool work within your existing helpdesk interface, or does it require a separate platform your agents need to learn?

eesel AI

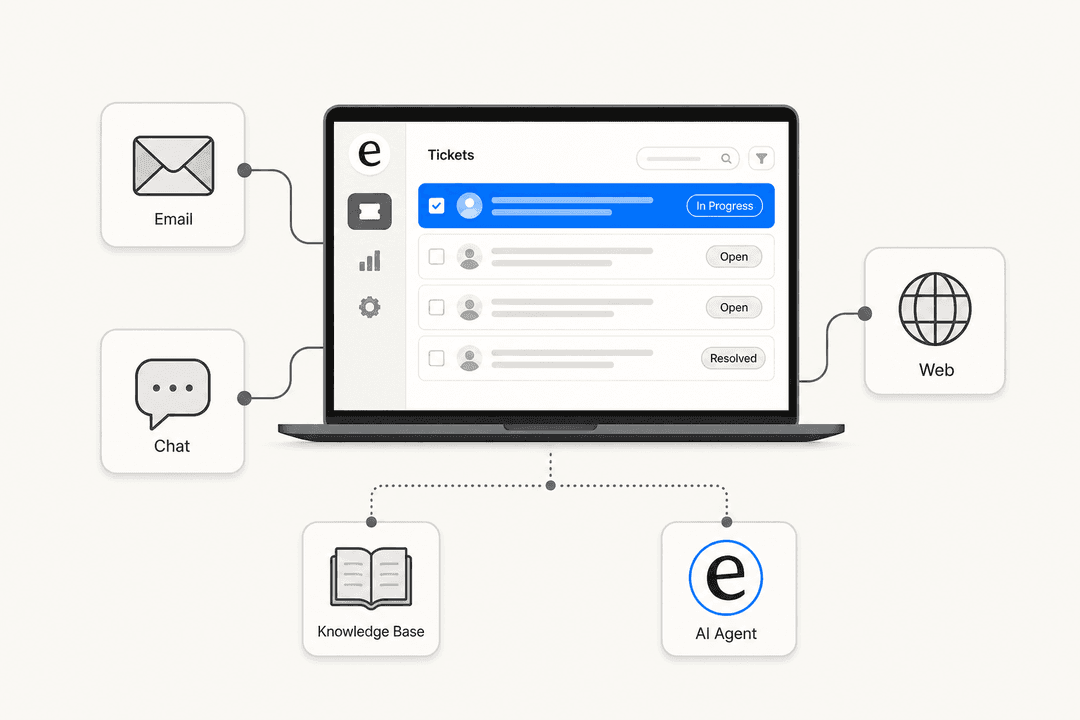

eesel AI sits on top of your existing helpdesk. It connects to Zendesk, Freshdesk, Gorgias, Help Scout, HubSpot Service Hub, and others - appearing in your agent list and operating within your existing ticket interface. No migration, no retraining your team on new software.

What distinguishes eesel from platform-native options is knowledge source coverage and pre-deployment simulation. On knowledge, eesel connects to over 100 integrations including Google Drive, Notion, Confluence, SharePoint, Shopify, and Slack - meaning the AI draws from everything your team actually knows, not just what's been uploaded to a help center. On simulation, eesel runs the AI against thousands of historical tickets and returns a data-driven forecast before any customer sees it.

Mature eesel deployments reach up to 81% autonomous resolution with a typical payback period under two months. Pricing is $0.40 per regular task (ticket or chat session) with no platform fee and no per-seat charges - at 1,000 tickets per month, that's $400.
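The per-task arithmetic above can be reproduced for your own volume with a few lines. This is a minimal sketch that uses only the $0.40 figure quoted in this section and assumes every ticket counts as one regular task; the function name is hypothetical.

```python
PRICE_PER_TASK_CENTS = 40  # $0.40 per regular task, as quoted above

def monthly_cost_usd(tasks_per_month):
    """Estimated monthly cost in dollars, assuming one task per ticket."""
    return tasks_per_month * PRICE_PER_TASK_CENTS / 100

for volume in (1_000, 2_500, 10_000):
    print(f"{volume} tickets/month: ${monthly_cost_usd(volume):,.2f}")
```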

"We chose eesel AI for its multi-channel data input. By linking our Zendesk and Google Docs, customers get instant responses and tough questions are automatically triaged." - Wesley Wang, CTO, Ecosa

Platform-native AI

Zendesk, Freshdesk, and others have AI built into their own platforms. Zendesk's AI automated resolutions are included in all Suite tiers (5 per agent per month on Team, up to 15 on Enterprise), with additional resolutions at $1.50-2.00 each. Freshdesk's Freddy AI Agent requires Freshdesk Omni, starting at $29/agent/month, and has knowledge ingestion caps of 200 files and 10 URLs per agent - no native connectors for Confluence, Google Docs, or Slack.

Platform-native AI is a reasonable starting point if your knowledge lives entirely within that one platform. If your knowledge spans Confluence, Google Docs, Notion, or Slack, you'll hit the ceiling quickly. For a full comparison, the post on the best AI helpdesk tools in 2026 covers the main options with pricing and feature breakdowns.

Phase 3: Prepare your knowledge base

This phase determines your ceiling. The AI can only answer from what it knows, and what it knows depends entirely on what you give it access to and how well that content is maintained.

What to connect

eesel reads from these source types without any import or export step:

| Source type | What to connect |
|---|---|
| Helpdesk data | Past tickets, help center articles, macros, canned responses |
| Docs and wikis | Google Drive, Google Docs, Notion, Confluence, SharePoint |
| Website | Public help pages, product documentation, FAQ pages |
| Internal comms | Slack channel history, useful when product knowledge lives in conversations |
| E-commerce | Shopify products, orders, inventory for real-time lookups |

Fix documentation before connecting it

Connecting a knowledge source with outdated content is worse than not connecting it - the AI will confidently give customers old information. Before going live:

- Verify your top 10 most common ticket types each have a current, accurate answer documented somewhere.

- Update any help articles referencing discontinued features, old pricing, or deprecated processes.

- Mark draft documents clearly. The AI cannot distinguish a published policy from an internal work-in-progress if both exist in the same folder.

Fill gaps before expanding scope

Running eesel's simulation before launch identifies which ticket categories the AI cannot confidently answer. That gap report is your documentation to-do list. For each gap: check whether the information exists somewhere in your organization and just needs to be connected, or whether you need to write new documentation before expanding AI scope to that category. The AI support ticket deflection guide covers common knowledge gap patterns and how to address them systematically.

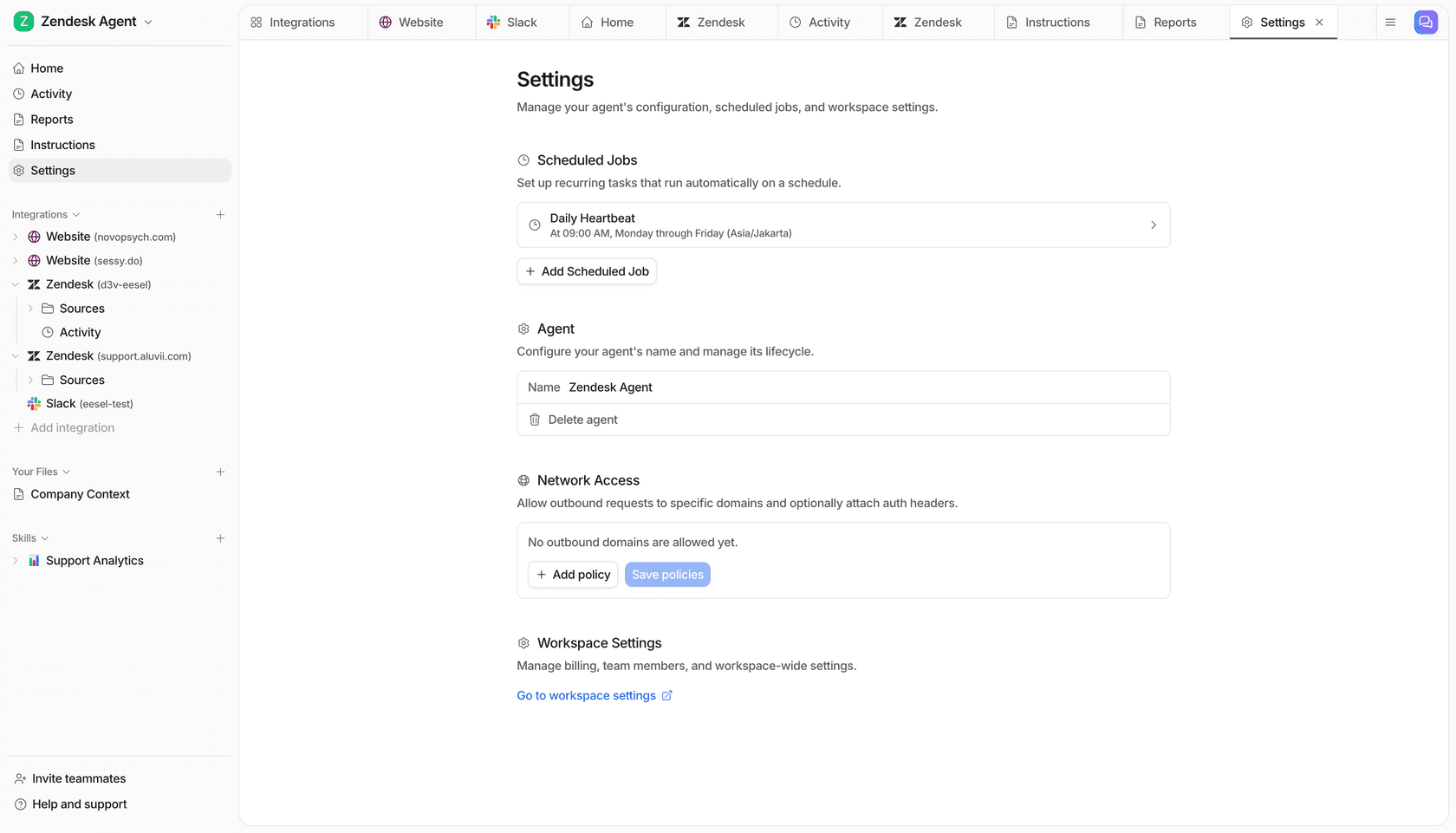

Phase 4: Configure and integrate

With your knowledge sources mapped, the technical integration is straightforward.

Connect your helpdesk. Go to eesel's integrations page and authorize the connection. eesel reads your existing tickets, macros, and help center articles immediately.

Connect your additional knowledge sources. Add Google Drive, Notion, Confluence, or whatever external sources you identified in Phase 3. No file exports needed - eesel reads them in place and stays in sync as they're updated.

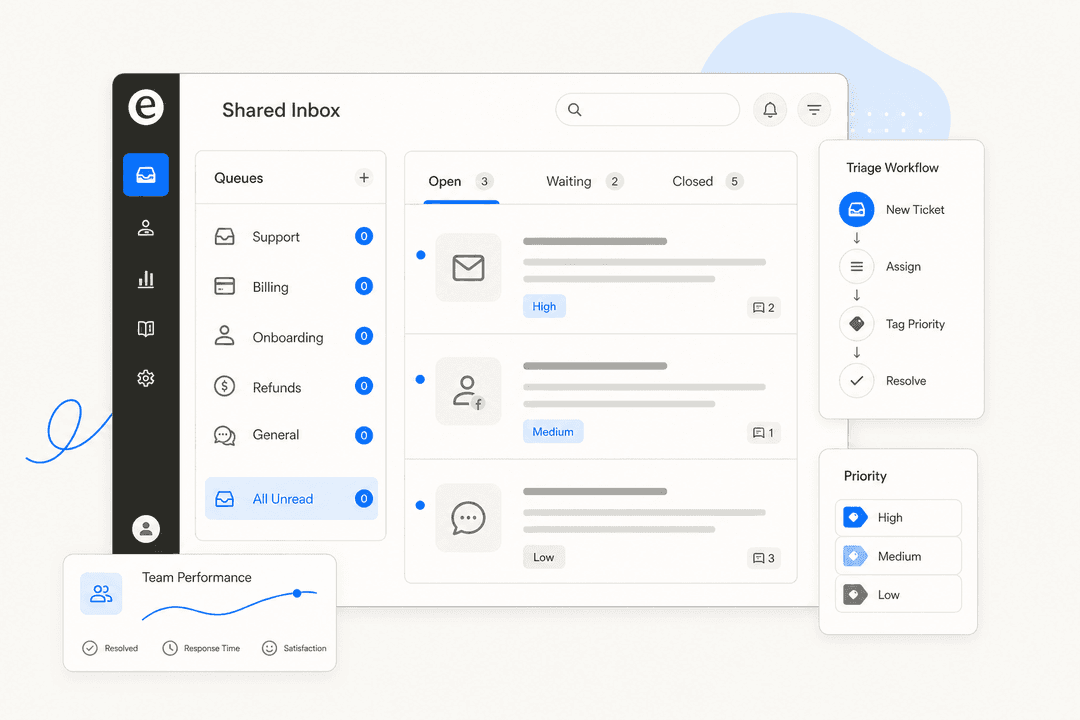

Write your escalation rules. This step gets skipped or rushed more than any other, and it causes the most post-launch problems. Write each rule in specific, plain English:

- "Always escalate if the customer mentions a refund over $100."

- "Escalate any ticket from customers tagged as Enterprise."

- "Route all billing disputes to the senior team, not the general queue."

- "If the customer uses the word 'legal' or 'lawyer,' escalate immediately."

- "For VIP customers, CC the account manager on every reply."

Vague rules like "escalate anything complex" can't be followed consistently, so the AI will make inconsistent judgment calls on ambiguous cases. Be specific, and test each rule during simulation to verify it triggers correctly.
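To make "specific" concrete, the sketch below writes three of the example rules above as explicit conditions and checks them against sample tickets. This is not how eesel evaluates rules (those are configured in plain English); it is a hypothetical way to pin down what each rule should match, so that you have a list of test tickets and expected outcomes to verify during simulation. All function names, ticket fields, and sample texts are assumptions.

```python
import re

def refund_over_100(ticket):
    """Rule: escalate if the customer mentions a refund over $100."""
    if "refund" not in ticket["text"].lower():
        return False
    amounts = re.findall(r"\$(\d[\d,]*(?:\.\d+)?)", ticket["text"])
    return any(float(a.replace(",", "")) > 100 for a in amounts)

def enterprise_customer(ticket):
    """Rule: escalate any ticket from customers tagged as Enterprise."""
    return "Enterprise" in ticket.get("tags", [])

def legal_mention(ticket):
    """Rule: escalate if the customer uses the word 'legal' or 'lawyer'."""
    return re.search(r"\b(legal|lawyer)\b", ticket["text"], re.IGNORECASE) is not None

RULES = {
    "refund_over_100": refund_over_100,
    "enterprise_customer": enterprise_customer,
    "legal_mention": legal_mention,
}

def triggered_rules(ticket):
    """Names of every rule whose condition the ticket satisfies."""
    return [name for name, rule in RULES.items() if rule(ticket)]

# Hypothetical test tickets with the outcome each one should produce.
cases = [
    ({"text": "I want a refund of $250 for my order", "tags": []}, ["refund_over_100"]),
    ({"text": "Please refund the $20 fee", "tags": []}, []),
    ({"text": "My lawyer will be in touch", "tags": ["Enterprise"]},
     ["enterprise_customer", "legal_mention"]),
]

for ticket, expected in cases:
    assert triggered_rules(ticket) == expected
```

If you cannot write a rule as a condition like these, it is probably too vague for the AI to apply consistently either.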

Set your starting autonomy level. Start in copilot mode, where every AI draft requires human approval before sending. Phase 6 covers when and how to expand from there.

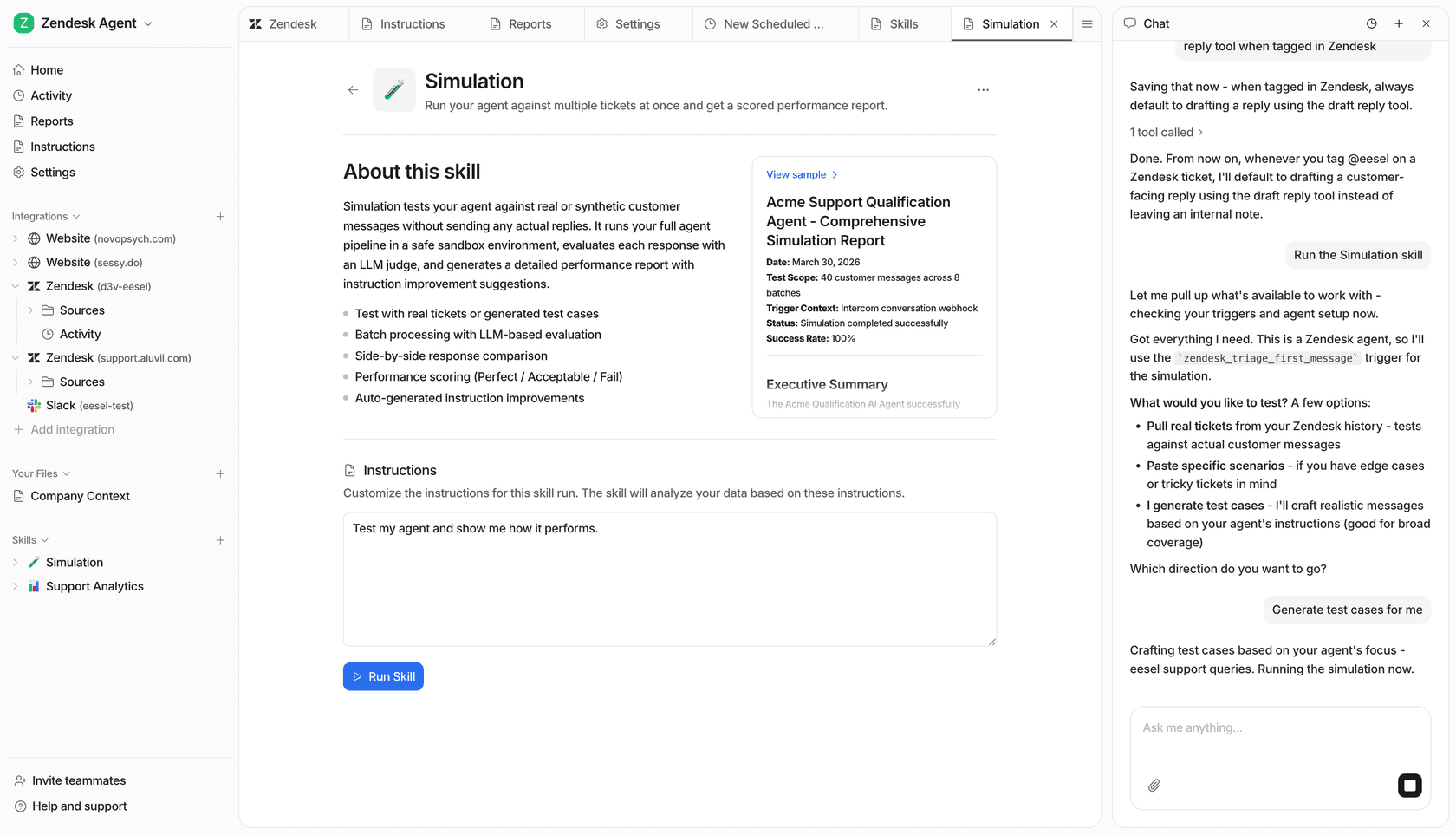

Phase 5: Test before customers see it

This is the step most teams skip - and skipping it is how teams end up discovering the AI's blind spots through customer complaints rather than internal review.

eesel's simulation runs the AI against hundreds of your historical tickets. For each one, it generates what the AI would have sent and compares it against what your agent actually sent. The output is a per-category breakdown:

- Which ticket types the AI handles confidently

- Which categories have knowledge gaps

- A predicted deflection rate once live

- Specific examples of strong and weak AI drafts for you to review

The workflow: run the simulation, review the gap report, fill the missing documentation, run the simulation again. Two or three cycles typically take a few hours and dramatically reduce post-launch surprises.

One specific review to do during simulation: spot-check the AI's drafts on your five most sensitive ticket categories - complaints, refunds, billing disputes, legal mentions, and VIP accounts. These are where an incorrect AI response does real damage. If simulation shows weak handling in any of these, address the documentation and escalation rules before launch rather than relying on confidence-based routing to catch everything.

Phase 6: Roll out in phases

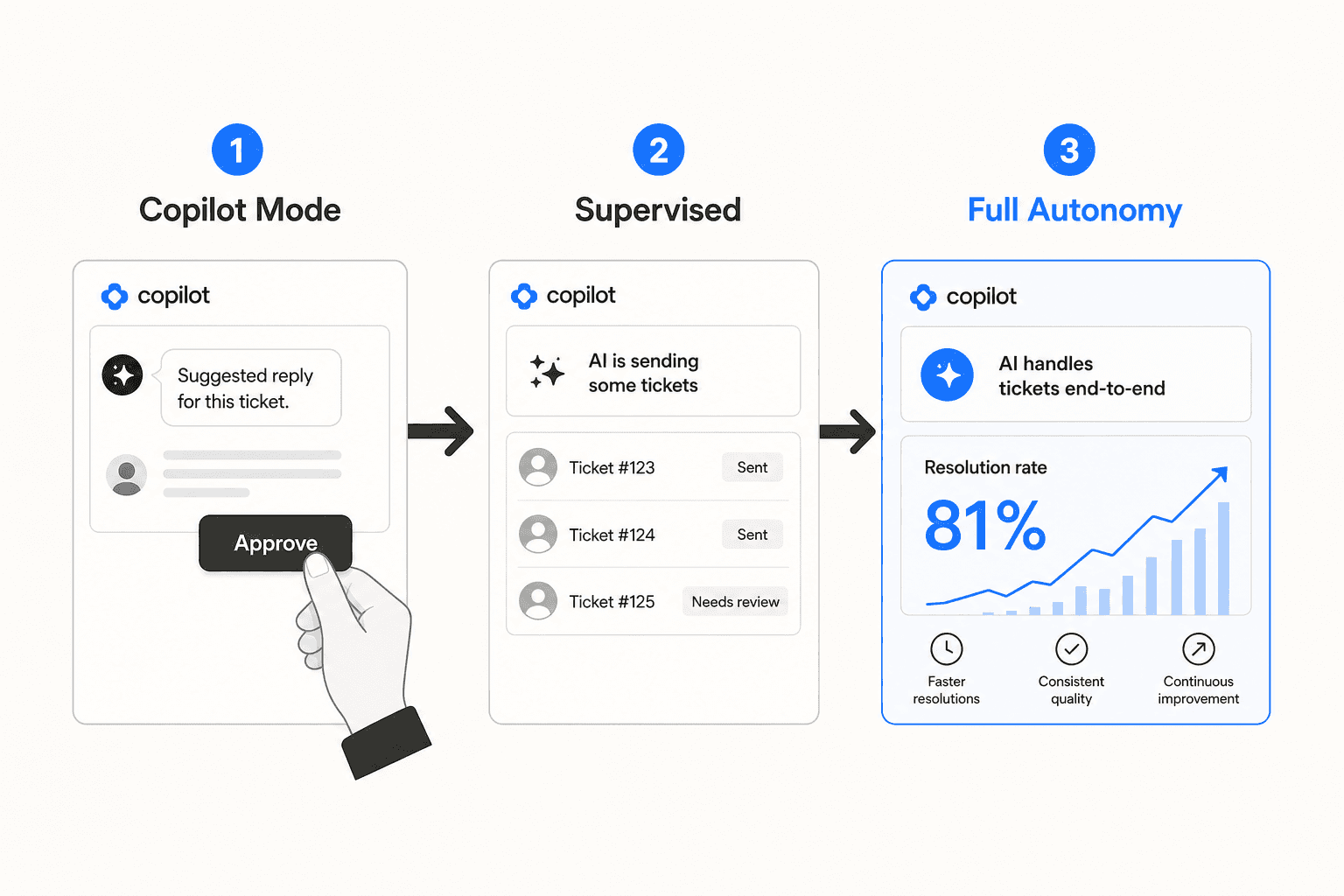

Do not go from zero AI to fully autonomous on day one. The three-stage progression below lets you verify quality at each level before expanding AI scope.

Stage 1: Copilot mode (weeks 1-2)

The AI drafts every response. Nothing sends until a human approves. Your agents review each draft, and every correction they make trains the AI's future responses - improving accuracy and tone over time.

Treat this period as active training, not just review. Agents who approve drafts without reading them don't create a useful feedback signal - the AI doesn't improve and rough edges carry into autonomous mode. Assign one person to review quality daily in the first week and flag categories where drafts consistently miss.

Stage 2: Supervised autonomy (weeks 3-4)

Promote specific ticket categories to autonomous mode once copilot performance in that category is consistently strong. If password reset drafts have been approved unchanged for a full week, switch that category to autonomous. Keep everything else in copilot.

This per-category approach gives granular control. You don't need to flip the entire system to autonomous - the scope grows category by category as confidence builds in each one.
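The promotion criterion described in this stage (drafts approved unchanged for a full week) could be checked with something like the sketch below. It assumes you keep a simple review log with one entry per draft. The field names and the `min_drafts` floor are assumptions added for illustration, the latter so that a category with only one or two drafts is not promoted on thin evidence.

```python
from datetime import date, timedelta

def ready_for_autonomy(reviews, today, window_days=7, min_drafts=3):
    """Categories whose drafts were all approved unchanged in the window."""
    since = today - timedelta(days=window_days)
    outcomes_by_category = {}
    for review in reviews:
        if since < review["day"] <= today:
            outcomes_by_category.setdefault(review["category"], []).append(
                review["approved_unchanged"]
            )
    return sorted(
        category
        for category, outcomes in outcomes_by_category.items()
        if len(outcomes) >= min_drafts and all(outcomes)
    )

# Hypothetical copilot review log.
reviews = [
    {"category": "password reset", "day": date(2026, 5, 9), "approved_unchanged": True},
    {"category": "password reset", "day": date(2026, 5, 11), "approved_unchanged": True},
    {"category": "password reset", "day": date(2026, 5, 14), "approved_unchanged": True},
    {"category": "billing", "day": date(2026, 5, 10), "approved_unchanged": True},
    {"category": "billing", "day": date(2026, 5, 12), "approved_unchanged": False},
    {"category": "billing", "day": date(2026, 5, 13), "approved_unchanged": True},
    {"category": "order status", "day": date(2026, 5, 14), "approved_unchanged": True},
]

promotable = ready_for_autonomy(reviews, today=date(2026, 5, 15))
print(promotable)
```

Here only password resets qualify: billing had an edited draft during the week, and order status has too few drafts to judge.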

Stage 3: Full autonomy (month 2 onward)

Once you've expanded across categories and confirmed deflection rates and CSAT are holding, extend autonomous operation to the full set of ticket types the AI has proven itself on. Keep escalation rules active for categories that require human judgment, and shift from reviewing individual drafts to reviewing the AI's decision logs weekly.

Gridwise followed this progression on Zendesk and reported:

"In the first month, eesel is resolving 73% of our tier 1 requests. Easy Zendesk implementation." - Kim Simpson, Gridwise

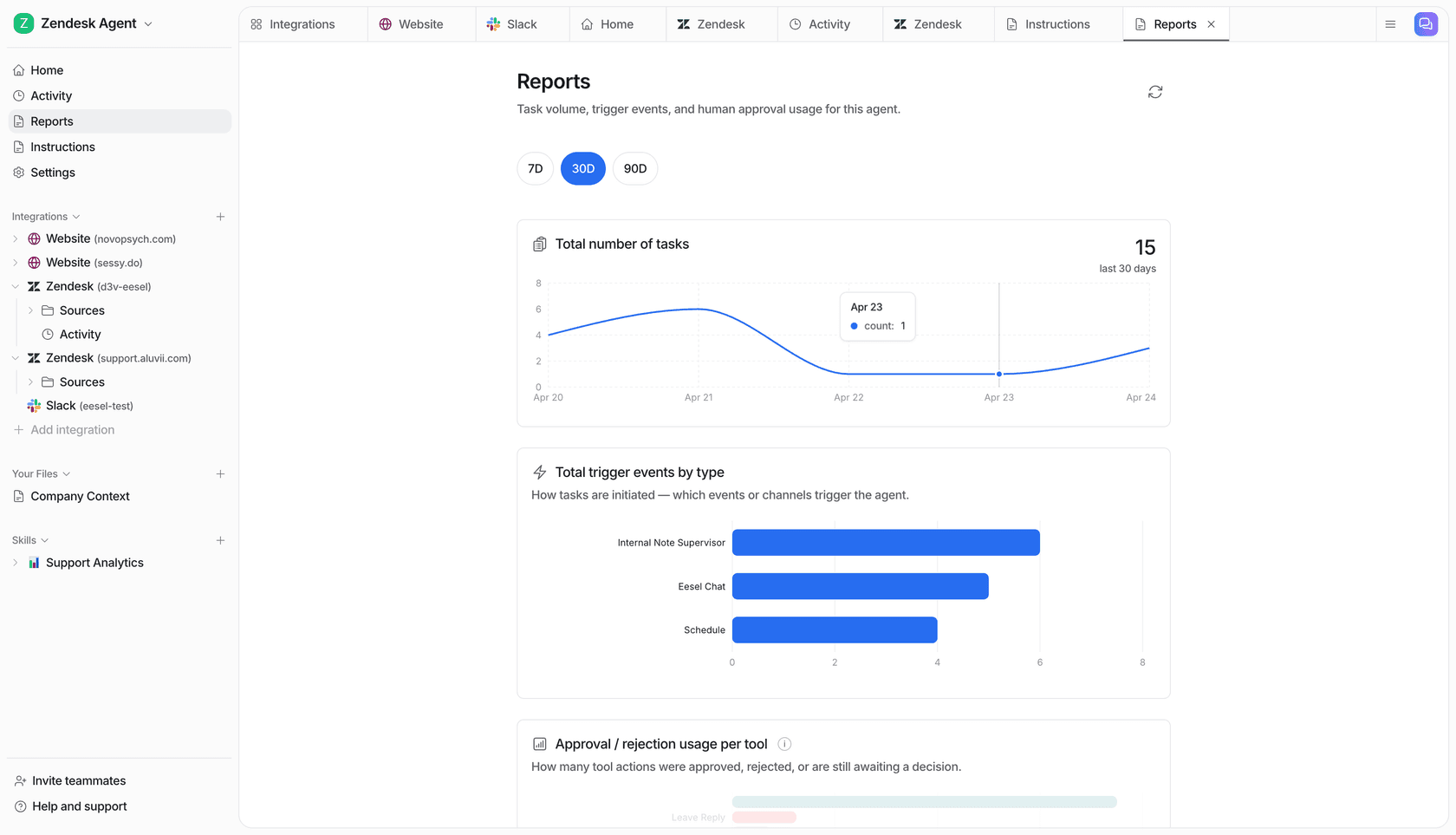

Phase 7: Measure and optimize continuously

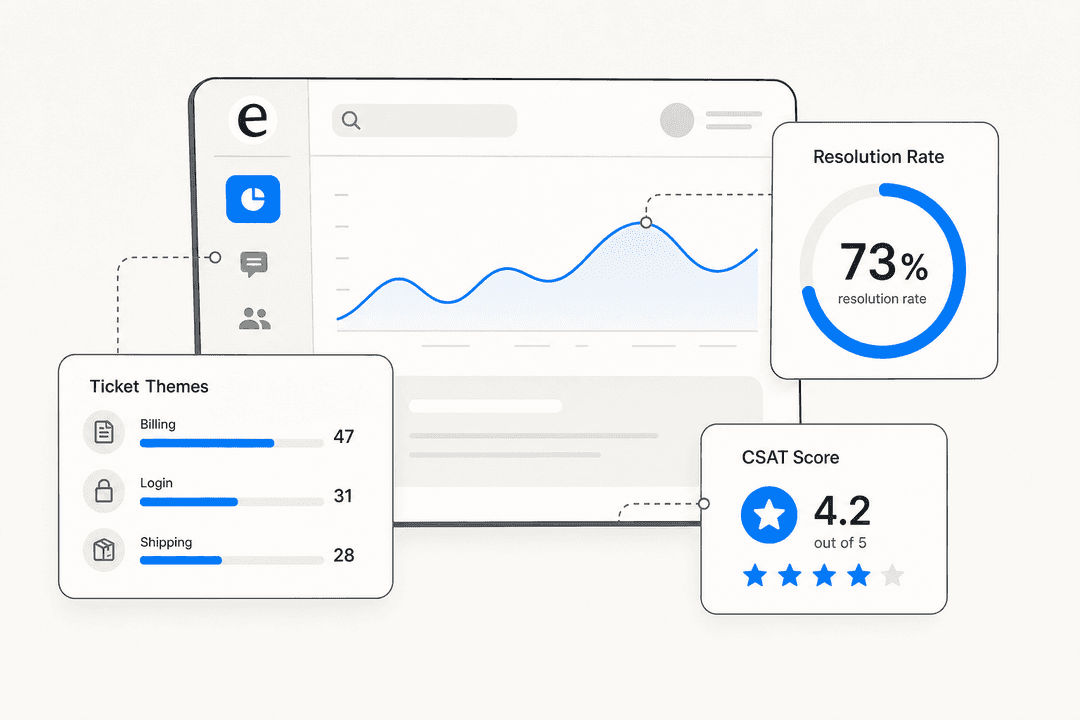

After launch, the metrics you set in Phase 1 become your operating dashboard. Three to review weekly in the first two months, then monthly once you're in a steady state:

Deflection rate. Are you hitting your target? If not, pull the undeflected tickets and look for a pattern. Usually it's a knowledge gap (a common question the AI can't answer) or a misconfigured escalation rule (tickets that should be handled autonomously are routing to humans instead). The deflection rate guide has a systematic framework for diagnosing and improving this specific metric.

CSAT by resolution type. Track customer satisfaction for AI-handled tickets separately from human-handled ones. If AI-handled CSAT is lower, that typically points to a quality issue - the AI is sending technically correct answers that don't satisfy the customer. This often signals tone mismatches or cases where the customer needed empathy alongside a factual answer, not just the fact.

Knowledge gap alerts. eesel's theme analysis surfaces recurring questions the AI can't confidently answer and drafts KB articles to fill the gaps. Review these weekly in the first month. A knowledge gap that recurs 20 times per month is worth 30 minutes of documentation work. One that recurs twice a month is not.
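The prioritization rule of thumb above can be applied as a simple frequency filter. In this sketch the `min_monthly` threshold defaults to the 20-per-month example from the text, but where you draw the line between 2 and 20 is a judgment call; the sample questions and counts are hypothetical.

```python
def gaps_worth_documenting(gap_counts, min_monthly=20):
    """Return (question, count) pairs at or above the threshold, most frequent first."""
    frequent = [(q, n) for q, n in gap_counts.items() if n >= min_monthly]
    return sorted(frequent, key=lambda item: item[1], reverse=True)

# Hypothetical monthly counts of questions the AI could not answer confidently.
gap_counts = {
    "Do you support SSO?": 21,
    "How do I change my billing email?": 34,
    "Can I export data as XML?": 2,
}

backlog = gaps_worth_documenting(gap_counts)
for question, count in backlog:
    print(f"{count:>3}x  {question}")
```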

eesel's insights dashboard covers recurring topics, volume trends, resolution rates, and channel breakdown in one place - the AI ticketing system overview explains what to watch and when to act on each signal.

The longer-term optimization work is the same loop: tickets reveal knowledge gaps, gaps reveal documentation work, documentation work improves deflection rates, and deflection rates justify expanding AI scope further. Teams that treat this as an ongoing process - rather than a one-time setup - are the ones that reach 70%+ deflection rates within six months.

Common implementation mistakes

Skipping the knowledge audit. The most common reason AI implementations underperform is poor source documentation, not AI capability. Teams connect their helpdesk and wonder why the AI handles only 20% of tickets. The reason: 80% of their common questions either aren't documented anywhere or are documented with outdated information. The audit in Phase 1 exists to prevent this.

Going autonomous too fast. Copilot mode is not optional. Teams that skip directly to autonomous operation find the AI's gaps through customer complaints rather than internal review. Two weeks in copilot mode costs nothing and prevents weeks of degraded customer experience.

Writing vague escalation rules. "Escalate anything complex" is not a rule the AI can follow reliably. Every rule needs a specific condition. Test each one during simulation - route a few tickets through the AI that should trigger the rule and verify they do.

Treating implementation as a one-time project. Pricing changes, product launches, policy updates - the AI doesn't learn about them unless your connected documentation is updated. Build a quarterly process for reviewing your most common ticket categories against their source documentation. Two hours per quarter prevents months of degraded AI quality caused by stale knowledge.

Not using simulation before launch. Simulation is not optional for teams that care about customer experience. The few hours spent running simulation cycles and addressing gaps prevent weeks of customer-facing quality problems. This step alone distinguishes implementations that go well from the ones that generate support escalations about the AI itself.

eesel AI for helpdesk implementation

eesel AI is built for exactly this implementation workflow. Connect to your existing helpdesk in under 15 minutes, run simulations against past tickets to verify quality before any customer sees the AI, start in copilot mode, and expand scope as the AI proves itself category by category.

There is a $50 free trial with no credit card required - enough to connect your helpdesk, ingest your knowledge sources, run ticket simulations, and see the predicted deflection rate on your actual ticket mix before committing to anything.


Article by

Stevia Putri

Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.