It seems like every business is trying to build something cool with Large Language Models (LLMs) these days. But pretty quickly, everyone hits the same wall: how do you get these powerful models to know anything about your business? All your unique, valuable information (customer support tickets, internal wikis, product specs) is completely invisible to them.

This is the exact problem data frameworks are built to solve. They act as the middleman between an LLM and your private data. One of the most popular open-source tools that developers reach for is LlamaIndex.

In this guide, we'll give you a straight-to-the-point, business-focused look at what LlamaIndex is, how it works, and what people are building with it. We'll also get real about its limitations and talk about why a ready-made AI platform might be a much faster way to get the job done.

What is LlamaIndex?

Simply put, LlamaIndex is an open-source framework that gives developers the tools to connect LLMs to their own private data. Its main purpose is to create a pipeline that can feed your company-specific info to a model like GPT-4, so it can give answers based on what you know, not just what's on the public internet.

Here’s a good way to think about it: imagine an LLM is a brilliant new hire who has read every book in the public library. They know a ton about general topics but have no idea where your company keeps its financial reports or how your internal software works. LlamaIndex is the toolkit a developer uses to give that new hire a corporate librarian: something that reads, catalogs, and indexes the documents your company chooses to connect.

So, when a user asks a question, the LLM doesn't have to guess. It can consult the index your developer built and pull out the passages most relevant to the question. This is how you can build things like a chatbot that knows your product inside and out, or an internal search tool that actually works.

How LlamaIndex works: The RAG pipeline explained

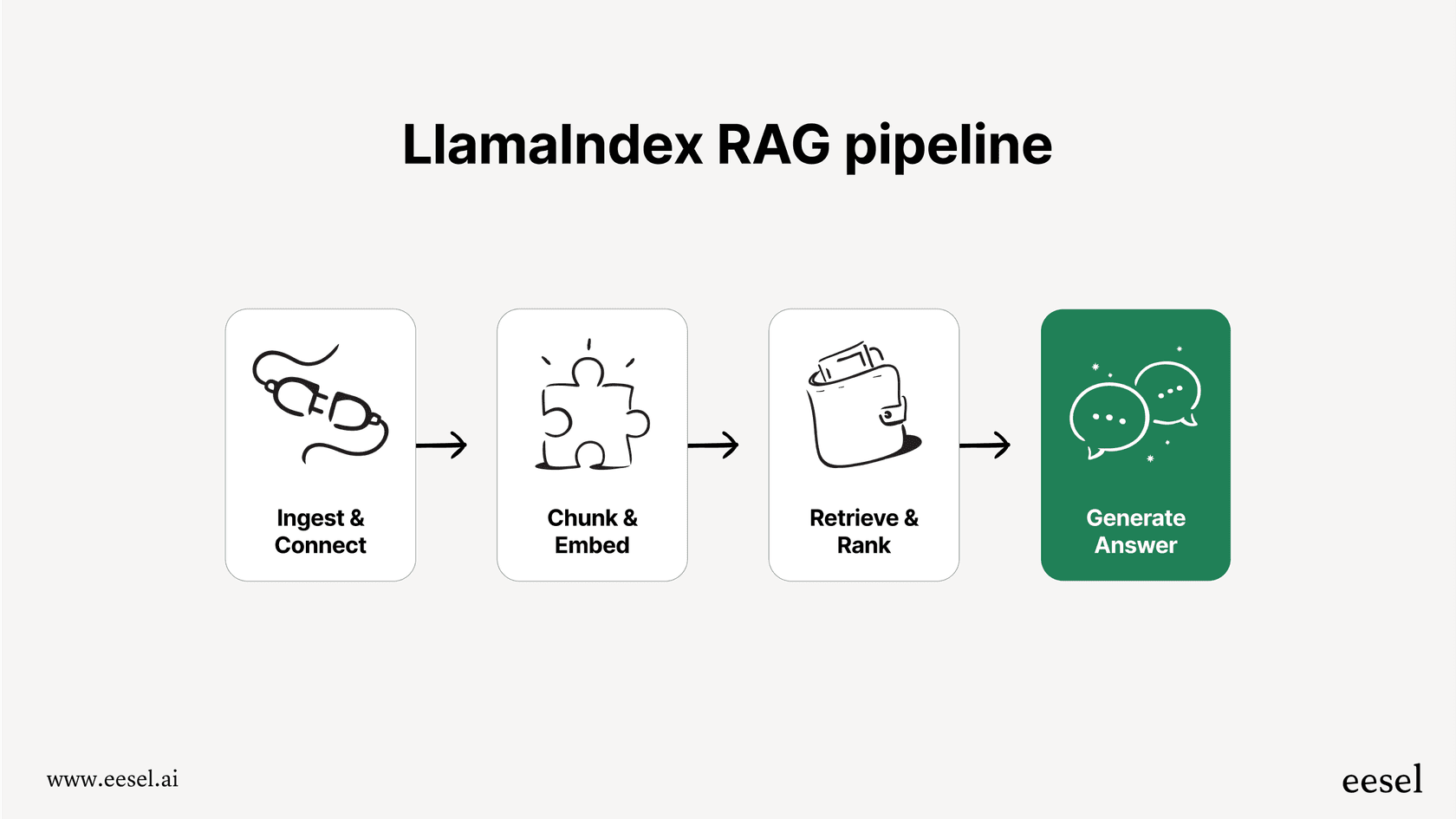

The process that makes this all happen is called Retrieval-Augmented Generation, or RAG. It sounds a little intimidating, but it's really just a logical, four-step journey from your raw data to a smart, accurate answer from an LLM.

Let’s walk through it.

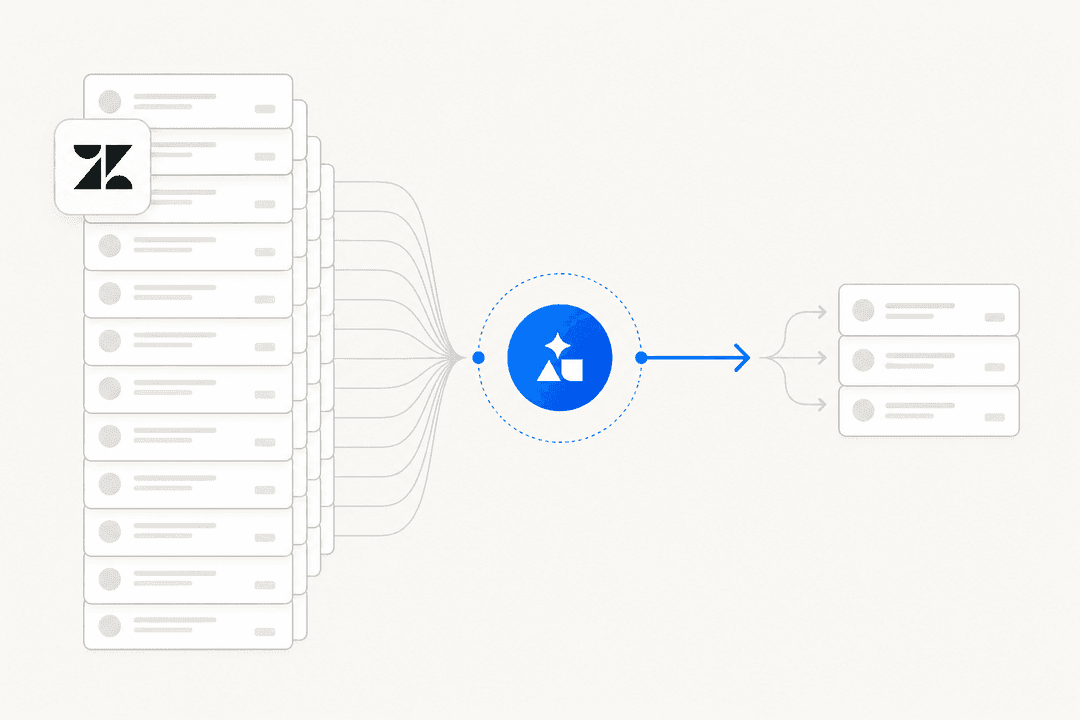

Step 1: Getting your data in with LlamaIndex

First things first, you have to load your data. LlamaIndex uses what it calls "data connectors" (or "loaders") to do this. These are basically small scripts that pull information from wherever you keep it: PDFs, databases, Notion, you name it. There's a big community library called LlamaHub where you can find pre-built connectors for hundreds of different sources.
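To make the loading step concrete, here is a minimal sketch of what a connector does, written in plain Python with only the standard library. It is not LlamaIndex's API (LlamaIndex ships its own loaders, such as `SimpleDirectoryReader`, alongside the LlamaHub connectors); the `Document` class and `load_documents` function are hypothetical names chosen for illustration. The sketch reads every `.txt` and `.md` file under a folder and keeps the file name as metadata, so answers can later point back to their source.

```python
import tempfile
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class Document:
    """Hypothetical container: loaded text plus metadata about where it came from."""
    text: str
    metadata: dict = field(default_factory=dict)


def load_documents(directory: str, extensions=(".txt", ".md")) -> list[Document]:
    """Hypothetical minimal 'connector': read every matching text file under a folder."""
    docs = []
    for path in sorted(Path(directory).rglob("*")):
        if path.is_file() and path.suffix.lower() in extensions:
            docs.append(Document(
                text=path.read_text(encoding="utf-8"),
                metadata={"source": path.name},  # remember the origin for citations later
            ))
    return docs


# Demo: build a throwaway folder with a few files and load it.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "refunds.md").write_text("Refunds are issued within 14 days.", encoding="utf-8")
    Path(tmp, "shipping.txt").write_text("We ship to the EU and the US.", encoding="utf-8")
    Path(tmp, "logo.png").write_bytes(b"\x89PNG")  # skipped: not a text extension
    docs = load_documents(tmp)

print([d.metadata["source"] for d in docs])  # ['refunds.md', 'shipping.txt']
```

Real connectors do the same job against APIs and databases, which is why each new source tends to need its own code and upkeep.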

While having options is great, this is also where the first challenge pops up. You need a developer who's comfortable with Python to set up and maintain these connections. Depending on how messy your data sources are, this step alone can turn into a pretty time-consuming engineering project.

Step 2: Indexing and storing it all with LlamaIndex

Once the data is in, you can't just throw it at the LLM. It needs to be structured properly. This is the "indexing" part of the process.

LlamaIndex splits your documents into smaller, bite-sized "chunks." Each chunk is then run through an embedding model, which converts the text into a list of numbers (a "vector embedding") that captures the meaning of the text. These vectors are then stored, either in memory or in a dedicated "vector database," so they can be searched by meaning rather than by exact keywords.
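The indexing step can be sketched in a few lines of standard-library Python. This is an illustration under loud assumptions, not how LlamaIndex implements it: chunking is done by word count with a small overlap, and the "embedding" is a toy bag-of-words vector, so it captures shared words rather than real meaning. A real pipeline would call an embedding model at that point and write to a proper vector store. `chunk_text`, `embed`, and `index_document` are hypothetical helper names.

```python
import math
import re
from collections import Counter

vector_store = []  # toy in-memory "vector database": a list of (vector, chunk_text) pairs


def chunk_text(text: str, chunk_size: int = 8, overlap: int = 2) -> list[str]:
    """Split text into windows of `chunk_size` words; neighbouring windows share `overlap` words."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):  # this window already reached the end
            break
    return chunks


def embed(text: str) -> dict[str, float]:
    """Toy stand-in for an embedding model: a sparse word-count vector scaled to unit length."""
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {token: c / norm for token, c in counts.items()}


def index_document(text: str) -> None:
    """Chunk a document, embed each chunk, and store the pair for later search."""
    for chunk in chunk_text(text):
        vector_store.append((embed(chunk), chunk))


index_document(
    "Refunds are issued within 14 days of purchase. "
    "Shipping is free for orders over 50 euros in the EU."
)
print(len(vector_store))  # 3 overlapping chunks
```

The overlap is a common design choice: it reduces the chance that a sentence answering a question is cut in half at a chunk boundary.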

Step 3: How LlamaIndex understands the user's question

Now we get to the good part. When a user asks a question, their query is converted into a vector embedding using the same embedding model that was applied to the document chunks.

The system then zips over to the vector database and looks for the data chunks with embeddings that are most similar to the question's embedding. In plain English, it finds the snippets from your original documents that are most likely to contain the answer.

Step 4: Generating the answer with LlamaIndex

These relevant chunks of information are then bundled up and sent to the LLM along with the user's original question. This gives the model all the context it needs to craft an answer that's actually based on your company's data.
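The query side of the pipeline (embed the question, find the closest chunks, and hand them to the LLM with the question) looks roughly like this. Same caveats as for indexing: the bag-of-words `embed` function is a toy stand-in for a real embedding model, the three chunks are made-up examples, and `retrieve` and `build_prompt` are hypothetical helpers. The sketch stops at the prompt, because the final LLM call depends on which model and client you use. In LlamaIndex itself, `VectorStoreIndex` and the query engine returned by its `as_query_engine()` method take care of this retrieve-then-prompt loop for you.

```python
import math
import re
from collections import Counter


def embed(text: str) -> dict[str, float]:
    """Toy stand-in for an embedding model: a sparse word-count vector scaled to unit length."""
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {token: c / norm for token, c in counts.items()}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    # Both vectors are unit length, so their dot product is the cosine similarity.
    return sum(weight * b.get(token, 0.0) for token, weight in a.items())


# Made-up chunks, embedded ahead of time as in the indexing step.
chunks = [
    "Refunds are issued within 14 days of purchase.",
    "Shipping is free for orders over 50 euros.",
    "Our office is closed on public holidays.",
]
store = [(embed(c), c) for c in chunks]


def retrieve(question: str, top_k: int = 2) -> list[str]:
    """Embed the question with the SAME function and return the most similar chunks."""
    q = embed(question)
    ranked = sorted(store, key=lambda item: cosine(q, item[0]), reverse=True)
    return [text for _, text in ranked[:top_k]]


def build_prompt(question: str, top_k: int = 2) -> str:
    """Bundle the retrieved chunks and the original question into one prompt for the LLM."""
    context = "\n".join(f"- {c}" for c in retrieve(question, top_k))
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\n"
        "Answer:"
    )


print(build_prompt("How many days do refunds take?"))
```

Using the same embedding function for questions and chunks matters: similarity scores are only meaningful when both sides live in the same vector space.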

Key features and capabilities of LlamaIndex

Beyond that core RAG pipeline, LlamaIndex offers a few more advanced tools for developers looking to build more complex apps.

LlamaIndex query engines and chatbots

Query engines are the components that handle the whole retrieve-and-answer process. A step up from that are "chat engines," which are built for more natural, back-and-forth conversations. They can remember the chat history, so the user can ask follow-up questions without the bot losing track of the conversation. This is how you build a custom chatbot that feels less like a robot and more like a helpful assistant.
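The difference between a query engine and a chat engine mostly comes down to memory. Below is a minimal, framework-free sketch of that idea: a `ChatSession` (a hypothetical name, not a LlamaIndex class) keeps the last few exchanges and passes them, together with the new message, to a stateless answering function that stands in for a query engine. LlamaIndex's real chat engines offer several strategies for this (for example, some modes condense the conversation into a standalone question before retrieval); this sketch simply prepends the recent transcript.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ChatSession:
    """Hypothetical chat wrapper that adds memory on top of a stateless answer function."""
    answer_fn: Callable[[str], str]   # stand-in for a query engine (retrieve + LLM)
    max_turns: int = 3                # how many past exchanges to carry forward
    history: list[tuple[str, str]] = field(default_factory=list)

    def ask(self, message: str) -> str:
        # Note: history[-0:] would return everything, so handle max_turns == 0 explicitly.
        recent = self.history[-self.max_turns:] if self.max_turns > 0 else []
        transcript = "\n".join(f"User: {u}\nAssistant: {a}" for u, a in recent)
        # Send the recent conversation plus the new message, so a follow-up
        # such as "does that apply to sale items?" can be resolved.
        query = f"{transcript}\nUser: {message}" if transcript else f"User: {message}"
        reply = self.answer_fn(query)
        self.history.append((message, reply))
        return reply


# Demo: a fake engine that only reports how many lines of context it received.
session = ChatSession(answer_fn=lambda q: f"context lines: {len(q.splitlines())}")
first = session.ask("What is your refund window?")
second = session.ask("Does that apply to sale items?")
print(first, "|", second)  # context lines: 1 | context lines: 3
```

Capping the history with `max_turns` keeps prompts from growing without bound, at the cost of forgetting older parts of long conversations.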

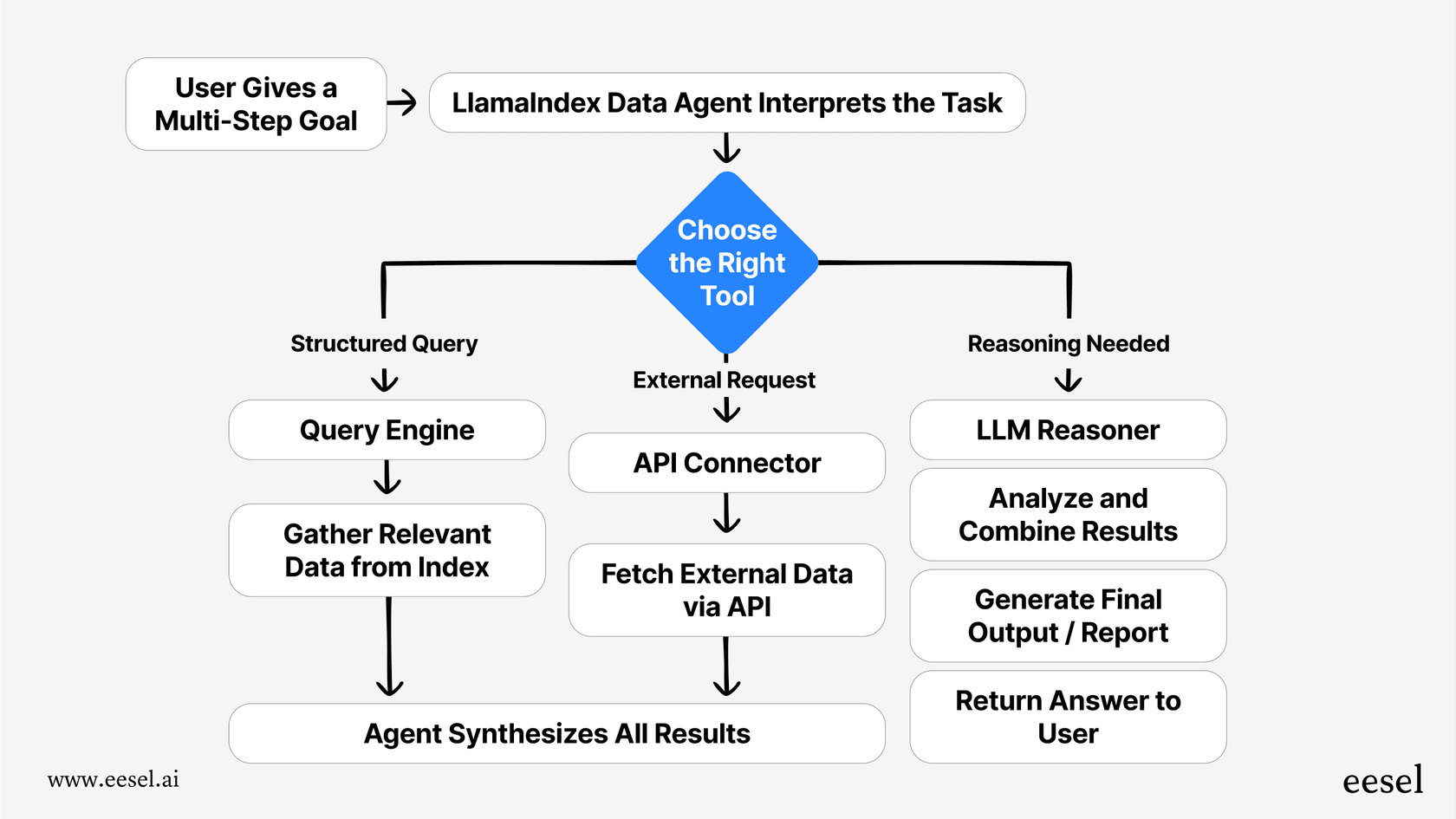

LlamaIndex data agents

Data agents are where things get really interesting, and really complicated. Think of an agent as an LLM-powered worker that can handle multi-step tasks. You can give it a high-level goal and access to a set of "tools" (like a query engine or an API), and it will figure out the steps needed to get the job done.

For example, you could tell an agent: "Find our latest sales report, summarize the key takeaways, and draft an email to the sales team." The agent would first use one tool to find the report, a second tool to analyze and summarize it, and a third to compose the email. It’s an incredibly powerful idea, but making these agents reliable is a serious engineering lift that requires a lot of expertise in system design.
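The agent idea can be sketched as a loop that runs the tools a planner picks, feeding each tool's output into the next. Everything here is hypothetical and deliberately simplified: the three tools mirror the sales-report example using a made-up placeholder report, and `plan` is a hard-coded stand-in for the LLM that would normally choose the tools. It is not LlamaIndex's agent API.

```python
from typing import Callable


# Hypothetical tools; in a real agent these might wrap a query engine or an external API.
def find_report(_: str) -> str:
    return "Q3 sales report: revenue up, churn down, EMEA lagging."  # placeholder text


def summarize(report: str) -> str:
    # Stand-in for an LLM summary: keep the first point after the colon.
    return report.split(":", 1)[1].split(",")[0].strip()


def draft_email(summary: str) -> str:
    return f"Hi team,\n\nKey takeaway from the latest sales report: {summary}.\n"


TOOLS: dict[str, Callable[[str], str]] = {
    "find_report": find_report,
    "summarize": summarize,
    "draft_email": draft_email,
}


def plan(goal: str) -> list[str]:
    """Hard-coded stand-in for the LLM planner, which would pick tools based on the goal."""
    return ["find_report", "summarize", "draft_email"]


def run_agent(goal: str, max_steps: int = 5) -> tuple[str, list[str]]:
    result, trace = goal, []
    for tool_name in plan(goal)[:max_steps]:   # cap the number of steps as a basic guardrail
        if tool_name not in TOOLS:
            raise ValueError(f"planner chose an unknown tool: {tool_name}")
        result = TOOLS[tool_name](result)       # each step consumes the previous step's output
        trace.append(tool_name)
    return result, trace


email, trace = run_agent("Find our latest sales report, summarize it, and email the sales team.")
print(trace)  # ['find_report', 'summarize', 'draft_email']
print(email)
```

A production agent usually decides one step at a time, looking at each tool's result before picking the next action, and needs guardrails such as step limits, validation, and error recovery. That is where much of the engineering effort goes.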

Common use cases and limitations of LlamaIndex

LlamaIndex gives your developers a powerful box of LEGOs, but it's completely up to them to design and build the final product.

What can you build with LlamaIndex?

- Question-answering over your docs: This is the most common use case. Let employees or customers ask normal questions and get answers from your internal wikis (Confluence, Google Docs), technical manuals, or company reports.
- Custom chatbots: You can build internal bots to help with IT or HR questions, or customer-facing bots that are true experts on your products.
- Structured data extraction: Use an LLM to read through messy, unstructured text like emails or support tickets, pull out key details (names, dates, order numbers), and save them in a clean, structured format for analysis.
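Structured extraction boils down to filling a fixed schema from free text. The sketch below shows the shape of that task with hypothetical names (`TicketFields`, `extract_fields`). It uses regular expressions as a stand-in for the LLM, so it only handles the specific phrasings it was written for, which is exactly the brittleness an LLM-based extractor is meant to avoid. The ticket text is made up.

```python
import re
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class TicketFields:
    """Target schema: the clean record we want to pull out of a messy ticket."""
    customer_name: Optional[str]
    order_number: Optional[str]
    date: Optional[str]


def _first_match(pattern: str, text: str) -> Optional[str]:
    match = re.search(pattern, text)
    return match.group(1) if match else None


def extract_fields(ticket: str) -> TicketFields:
    # Rule-based stand-in for an LLM: each field gets one hand-written pattern.
    return TicketFields(
        customer_name=_first_match(r"(?i:my name is)\s+([A-Z][a-z]+(?: [A-Z][a-z]+)?)", ticket),
        order_number=_first_match(r"(?i:order)\s*#?\s*(\d{4,})", ticket),
        date=_first_match(r"\b(\d{4}-\d{2}-\d{2})\b", ticket),
    )


ticket = "Hi, my name is Ana Lopez. Order #48213 arrived broken on 2024-03-05, please help!"
record = asdict(extract_fields(ticket))
print(record)  # {'customer_name': 'Ana Lopez', 'order_number': '48213', 'date': '2024-03-05'}
```

Missing fields come back as `None` rather than guesses, which makes gaps easy to spot when the records are loaded for analysis.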

What are the business limitations of LlamaIndex?

While LlamaIndex is an exciting tool for developers, it comes with some very real business limitations that are easy to gloss over at the start.

- It demands serious technical skill: Let's be clear: LlamaIndex is a framework for developers. This isn't a tool your support team can just pick up and start using. You need a team of Python engineers, ideally with some AI experience, to build, launch, and maintain anything you create with it.
- The total cost is much more than "free": The open-source framework doesn't cost anything, but that's just the tip of the iceberg. The real costs are the salaries for the developers building it, the monthly bills for servers and vector databases, and the constant engineering time needed for bug fixes and updates.
- You have to build everything around it: LlamaIndex provides the engine, but you have to build the entire car yourself. That means creating the user interface, an admin panel for your team, reporting dashboards, and, crucially, integrating it with the tools you already use, like Zendesk or Slack. All that custom development can take months, which means you're waiting a long time to see any value.

LlamaCloud pricing

The company behind the LlamaIndex framework does offer a commercial product called LlamaCloud. It’s designed to take some of the grunt work off your plate, specifically the document parsing, ingestion, and indexing parts.

But it’s important to understand what LlamaCloud doesn't do. It doesn't build the final application for you. It's a managed service that makes the first two steps of the RAG pipeline easier. Your engineering team still has to build the query engines, agents, UI, and all the business logic. Its pricing is credit-based, with 1,000 credits costing $1.

| Plan | Free | Starter | Pro | Enterprise |
|---|---|---|---|---|
| Included credits | 10K | 50K | 500K | Custom |
| Pay-as-you-go credits | 0 | up to 500K ($500) | up to 5,000K ($5K) | Custom |
| Users | 1 | 5 | 10 | Unlimited |
| Data Sources | 0 | 50 | 100 | Unlimited |
| Support | Basic | Basic | Basic | Dedicated |
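The credit model makes the arithmetic straightforward: at 1,000 credits per dollar, the Starter plan's 50K included credits are worth $50, and its 500K pay-as-you-go cap works out to the $500 shown in the table. The sketch below turns that into a quick estimator. It assumes, beyond what the table states, that usage past the included credits is billed as pay-as-you-go at the same rate up to the listed cap. It also ignores any base plan fee (none is listed here) and leaves out Enterprise because its figures are custom. `overage_cost_usd` is a hypothetical helper, not part of any LlamaCloud API.

```python
CREDITS_PER_USD = 1_000  # from the pricing above: 1,000 credits cost $1

# plan: (included credits, pay-as-you-go cap in credits), taken from the table
PLANS = {
    "Free": (10_000, 0),
    "Starter": (50_000, 500_000),
    "Pro": (500_000, 5_000_000),
}


def overage_cost_usd(plan: str, credits_used: int) -> float:
    """Estimated pay-as-you-go spend beyond the included credits (assumed billing model)."""
    included, cap = PLANS[plan]
    extra = max(0, credits_used - included)
    if extra > cap:
        raise ValueError(f"{plan}: {extra:,} extra credits exceeds the {cap:,} pay-as-you-go cap")
    return extra / CREDITS_PER_USD


print(overage_cost_usd("Starter", 120_000))  # 70,000 extra credits -> 70.0
```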

Even with LlamaCloud, the heaviest lifting, like building the actual workflows and user interface, is still on your team.

The alternative to LlamaIndex: A managed AI platform

The choice to use LlamaIndex really boils down to the classic "build vs. buy" debate. If you're a tech company with a deep bench of engineering talent and you want to build a completely custom AI app from scratch, LlamaIndex is a fantastic option.

But what if you're a business that just wants to use AI to solve a problem, like, today? For most teams, the better alternative is a fully managed, end-to-end AI platform. This is where a tool like eesel AI comes into the picture. It handles all the complicated stuff under the hood (data ingestion, indexing, RAG pipelines) and delivers a solution you can start using in minutes, not months.

| Feature | LlamaIndex (DIY Approach) | eesel AI (Managed Platform) |
|---|---|---|
| Setup Time | Weeks to months | Go live in minutes |
| Required Skills | Python developers, AI/ML engineers | Non-technical users |
| Integrations | Requires coding for each connection | 100+ one-click integrations (Zendesk, Slack, etc.) |
| Workflows | Must be built from scratch | Fully customizable workflow engine |
| Testing | Manual testing, custom scripts required | Built-in simulation over historical tickets |
| Maintenance | Ongoing engineering effort | Fully managed and maintained by eesel AI |

Here's what really makes a managed platform like eesel AI different:

- It’s truly self-serve: You can sign up, connect your helpdesk like Freshdesk or Intercom, point it to your knowledge sources, and have a working AI agent without ever talking to a salesperson.
- You get full control without writing code: A simple, visual interface lets you decide exactly which tickets the AI should handle, tweak its personality, and set up custom actions (like looking up order details from Shopify). No developers needed.
- You can test it with confidence: Before your AI ever speaks to a real customer, you can run a simulation on thousands of your past support tickets. You'll see exactly how it would have responded and get a solid forecast of your automation rate. You can launch knowing exactly what to expect.

Get started with a purpose-built AI solution today

LlamaIndex is an excellent, flexible framework for development teams that have the time, budget, and specific expertise to build custom LLM applications from the ground up. It gives you complete control, which is great if you need it.

However, for most businesses, the goal isn't to build an AI framework; it's to solve problems and make things more efficient right now. For that, a managed platform is almost always the more practical and cost-effective route.

With eesel AI, you get all the power of a custom-built RAG system with the ease of a self-serve tool. It connects to your knowledge, plugs into the tools you already use, and gives you the controls you need to automate safely.

Instead of spending the next few months building the plumbing, you could be automating support tickets this afternoon. Start your free trial or book a demo to see how it can work for your team.

Frequently asked questions

LlamaIndex is an open-source framework designed to connect Large Language Models (LLMs) with your private data. It creates a pipeline that feeds your company-specific information to an LLM, allowing it to generate answers based on your internal knowledge rather than just public internet data.

With LlamaIndex, the RAG pipeline starts by loading your data using connectors, then indexing it by converting text into numerical embeddings stored in a vector database. When a user queries, the question is also embedded, matched to relevant data chunks, and then these chunks are sent to the LLM for generating a context-aware answer.

You can build robust question-answering systems over your internal documents, create custom chatbots for customer support or internal HR, and even use it for structured data extraction from unstructured text like emails or support tickets.

LlamaIndex requires significant technical skill from Python and AI engineers for setup and maintenance. While the framework is free, the total cost involves developer salaries, infrastructure, and ongoing engineering time. You also need to build all surrounding components like UI, admin panels, and integrations from scratch.

While the core LlamaIndex framework is free, businesses incur substantial costs from developer salaries for building and maintaining the application, monthly bills for servers and vector databases, and continuous engineering effort for updates and bug fixes. The "free" aspect only refers to the framework itself, not the total solution.

LlamaCloud is a commercial product offered by the company behind the LlamaIndex framework. It provides a managed service specifically for the data ingestion, parsing, and indexing parts of the RAG pipeline, simplifying the initial steps. However, your engineering team is still responsible for building the query engines, agents, UI, and business logic for the final application.

Using LlamaIndex is ideal if your business has a dedicated team of AI/ML engineers, ample time, and budget to build a highly customized LLM application from the ground up, requiring complete control. For most businesses aiming to solve problems quickly and efficiently without extensive custom development, a fully managed, end-to-end AI platform is often a more practical and faster solution.


Article by

Stevia Putri

Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.