AI has moved from experimental to essential in customer support. What used to be a nice-to-have is now a competitive necessity. Support teams face exploding ticket volumes, rising customer expectations for instant responses, and pressure to control costs. AI offers a path forward, but here's the problem: most implementation guides treat AI like software you configure, not a teammate you onboard.

The result? Teams deploy AI too quickly, discover it doesn't handle their specific issues well, and either roll it back or limp along with frustrated customers and agents. There's a better way.

This guide covers a progressive implementation approach that treats AI like a new hire: start with guidance, prove performance, then level up to autonomy. You'll learn how to connect AI to your existing systems, test it before customers see it, and expand its role based on actual results rather than hope.

What you'll need before you start

Before diving in, make sure you have these basics covered:

- Access to your help desk. Whether you use Zendesk, Freshdesk, Gorgias, or another platform, you'll need admin access to connect AI tools.

- Historical support data. Past tickets, help center articles, macros, and saved replies. This is what trains the AI on your business, tone, and common issues.

- Understanding of your ticket types. Know which issues are frequent and straightforward versus rare and complex. This determines what to automate first.

- Escalation criteria. Clear rules for when AI should hand off to humans. Billing disputes? VIP customers? Technical issues beyond tier 1? Define these upfront.

- An internal champion. Someone who understands both support operations and AI capabilities. They'll drive the implementation and troubleshoot issues.

Step 1: Connect your AI teammate to existing systems

The first decision shapes everything that follows: will AI plug into your existing stack, or will you need to migrate to a new platform?

Integration-first approaches win here. Modern AI tools connect directly to your help desk without requiring you to move tickets, retrain agents on new interfaces, or disrupt workflows. The AI learns from your existing data and operates within the systems your team already uses.

Here's what the connection process looks like:

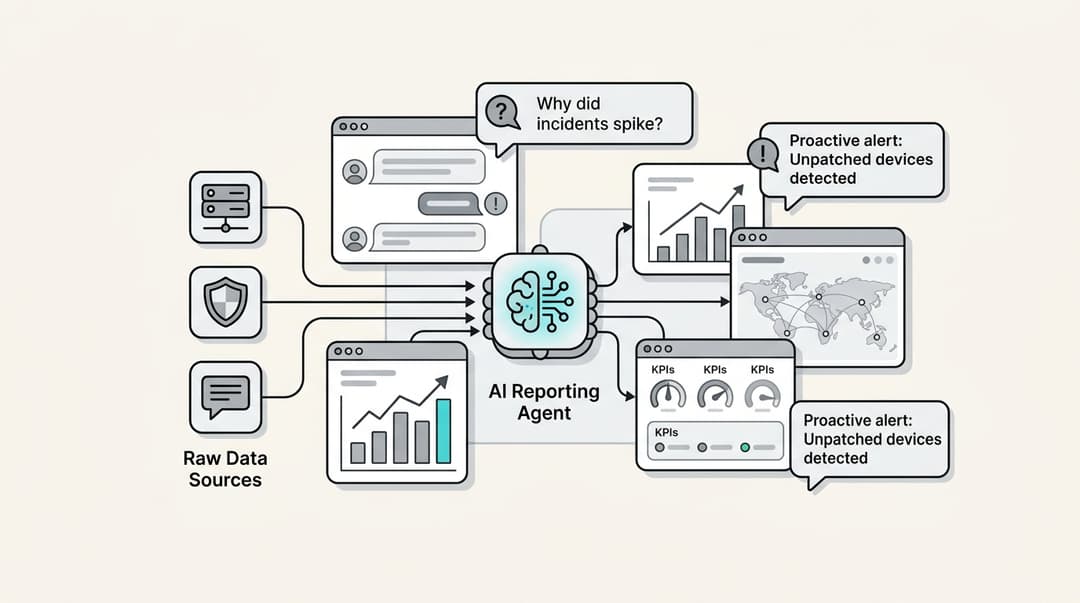

Connect your help desk. Authorize the AI to access your ticket history, help center articles, macros, and saved replies. This gives the AI its foundational training data: how your team actually writes, what issues come up most, and how you've solved them in the past.

Link knowledge sources. Most businesses have documentation scattered across Confluence, Google Docs, Notion, PDFs, and internal wikis. Connect these so the AI can reference them when responding. The more comprehensive the knowledge base, the better the AI performs.

Set up action integrations. If you handle e-commerce support, connect Shopify, WooCommerce, or your order management system. This lets AI look up orders, process refunds, and check inventory. For technical support, connect tools like Jira or ServiceNow so AI can create issues and track bugs.

The goal is simple: AI should learn your business context, tone, and common issues from day one. Not from generic training data, but from your actual support history.

Time estimate: Minutes, not weeks. Modern AI tools (including eesel AI) complete this initial connection in under an hour.

Step 2: Run simulations on past tickets before going live

Here's where most AI implementations go wrong: they skip testing. Teams configure the AI, turn it on for real customers, and hope for the best. When the AI responds poorly (and it will, at first), customers suffer and confidence plummets.

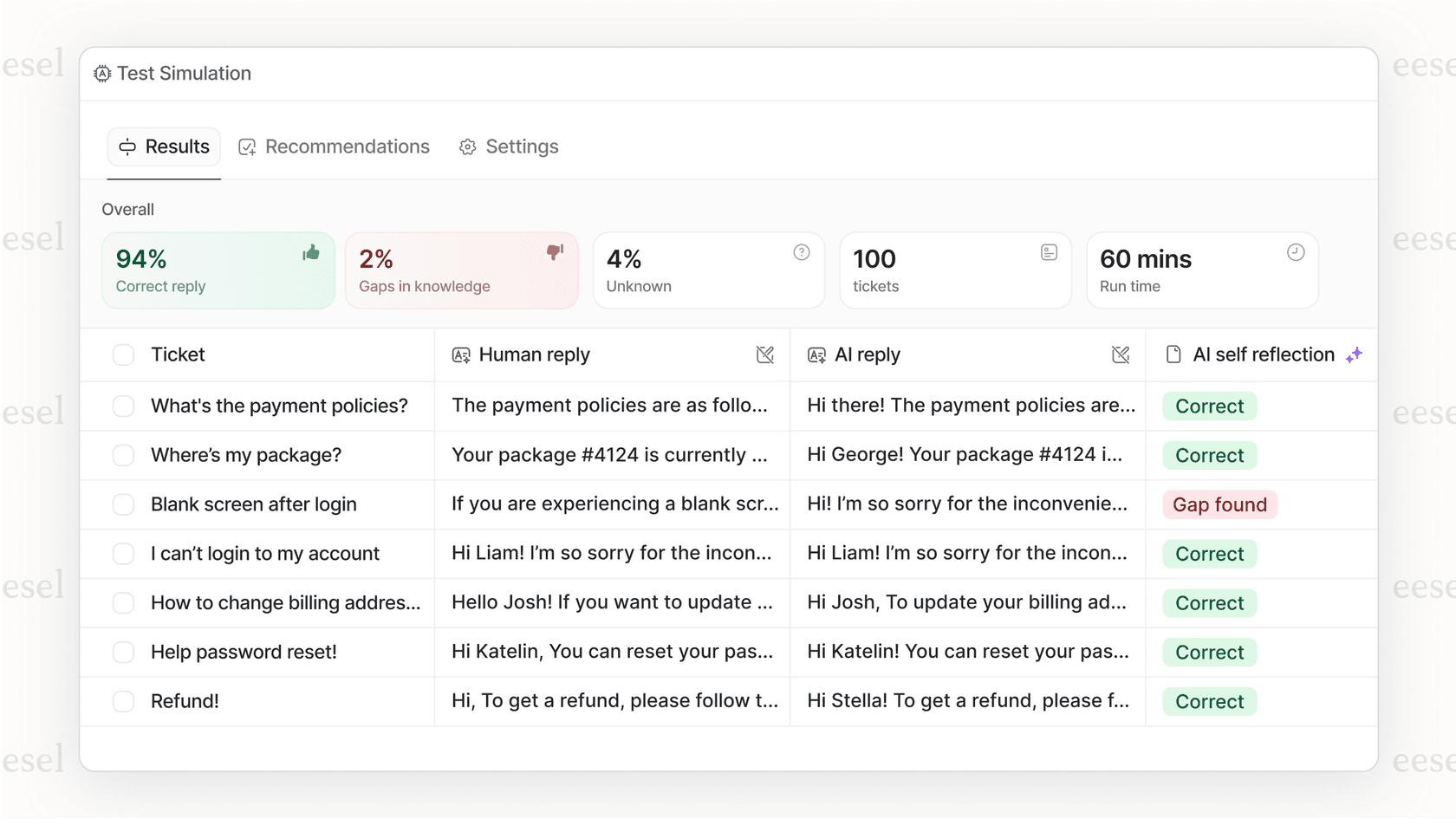

There's a better approach: run simulations on historical tickets before going live.

Simulations work like this: the AI generates responses to past tickets that your team already resolved. You review these responses for accuracy, tone, and escalation appropriateness. No customers see the AI's answers. You're simply testing how it would have performed.

Measure what matters:

- Resolution rate: What percentage of tickets would the AI have resolved correctly without human intervention?

- Tone accuracy: Does the AI match your team's voice? Is it too formal? Too casual?

- Escalation judgment: Does the AI know when to hand off? Complex issues, frustrated customers, and VIPs should go to humans.
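One way to turn a simulation review into these three numbers is to record the reviewer's verdict for each past ticket and aggregate the results. The sketch below is a minimal illustration, not a feature of any particular tool: `SimulatedTicket`, its fields, and `simulation_report` are hypothetical names, and it assumes a human reviewer has already labeled each AI response.

```python
from dataclasses import dataclass


@dataclass
class SimulatedTicket:
    ticket_id: str
    answer_correct: bool   # reviewer judged the AI answer accurate
    tone_ok: bool          # reviewer judged the tone on-brand
    ai_escalated: bool     # the AI chose to hand off
    should_escalate: bool  # reviewer says a human was needed


def simulation_report(results):
    """Aggregate reviewer labels into the three simulation metrics."""
    total = len(results)
    if total == 0:
        raise ValueError("no simulated tickets to score")
    # Resolved = correct answer on a ticket that neither needed nor got a handoff.
    resolved = sum(
        r.answer_correct and not r.ai_escalated and not r.should_escalate
        for r in results
    )
    tone = sum(r.tone_ok for r in results)
    # Escalation judgment = the AI's handoff decision matched the reviewer's.
    escalation = sum(r.ai_escalated == r.should_escalate for r in results)
    return {
        "resolution_rate": resolved / total,
        "tone_accuracy": tone / total,
        "escalation_judgment": escalation / total,
    }


results = [
    SimulatedTicket("T-1", True, True, False, False),
    SimulatedTicket("T-2", True, False, False, False),
    SimulatedTicket("T-3", False, True, False, True),
    SimulatedTicket("T-4", False, True, True, True),
]
report = simulation_report(results)
# report == {"resolution_rate": 0.5, "tone_accuracy": 0.75, "escalation_judgment": 0.75}
```

Tickets like T-3, where the AI answered but should have handed off, are the ones worth reading closely before launch.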

Identify knowledge gaps. When the AI responds poorly, ask why. Is the answer in your help center but the AI missed it? Is the policy undocumented tribal knowledge? Use these gaps to improve your knowledge base before launch.

Tune prompts and rules. Simulations reveal where your escalation rules need refinement. Maybe "billing disputes" needs more specific criteria. Maybe certain product lines require human touch. Adjust these settings based on actual data.

The value is confidence. You see exactly how the AI performs before customers do. You fix issues in private rather than recovering from public mistakes.

Time estimate: 1-2 days of review, depending on ticket volume and complexity.

Step 3: Start with guidance drafts for review

With simulations complete and quality verified, it's time to begin the progressive rollout. Start with AI Copilot mode: AI drafts replies, and human agents review and edit them before sending.

This phase serves multiple purposes. Agents learn to work alongside AI. You gather feedback on where AI excels and struggles. And you maintain quality control while building confidence.

Set boundaries for the initial rollout:

- Business hours only. Let AI draft during business hours when agents are available to review. Save after-hours automation for later.

- Specific ticket types. Start with FAQs, order status checks, and password resets. Save complex technical issues and billing disputes for when AI has proven itself.

- Clear review expectations. Agents should check AI drafts for accuracy, tone, and completeness. Over time, they'll recognize when they can trust the AI and when they need to intervene.

Gather structured feedback. Ask agents to flag specific issues:

- Where did AI get the answer wrong?

- Where was the tone off?

- Where should AI have escalated but didn't?

- Where did AI miss context from previous tickets?
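If agents record each flag with a ticket type and one of these four categories, a simple tally shows where to focus first. This is a rough sketch under that assumption: the category names and the `tally_flags` helper are made up for illustration, and in practice the flags would come from wherever your team logs feedback.

```python
from collections import Counter

# Hypothetical category names mirroring the four questions above.
FLAG_CATEGORIES = ("wrong_answer", "tone_off", "missed_escalation", "missed_context")


def tally_flags(flags):
    """Count agent flags per (ticket_type, category) pair."""
    counts = Counter()
    for ticket_type, category in flags:
        if category not in FLAG_CATEGORIES:
            raise ValueError(f"unknown flag category: {category}")
        counts[(ticket_type, category)] += 1
    return counts


flags = [
    ("order_status", "missed_context"),
    ("password_reset", "tone_off"),
    ("order_status", "missed_context"),
    ("faq", "wrong_answer"),
]
top_issue, count = tally_flags(flags).most_common(1)[0]
# top_issue == ("order_status", "missed_context"), count == 2
```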

This feedback loop improves the AI. Most systems learn from agent edits, so corrections today produce better drafts tomorrow.

Timeline: This phase typically lasts 1-2 weeks depending on ticket volume. The goal is enough interactions to identify patterns, not perfection before moving forward.

Step 4: Level up to autonomous responses

Once AI demonstrates consistent quality in Copilot mode, it's time to expand its role. This is the "level up" phase: AI sends replies directly for ticket types where it showed high accuracy.

Expand scope based on data, not hope:

- Ticket types: If AI handled 95% of order status tickets correctly in Copilot mode, let it send those replies directly. Keep billing disputes in review mode until performance improves.

- Coverage hours: Extend from business hours to evenings, then to full 24/7 coverage as confidence grows.

- Complexity: Gradually allow AI to handle more complex issues as it demonstrates capability.
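The "data, not hope" rule can be written down as a simple threshold check per ticket type. The sketch below assumes you count how many Copilot drafts agents judged correct. The 95% threshold comes from the order status example above, while the `min_drafts` guard and the function name are assumptions added for illustration.

```python
def choose_mode(correct_drafts, total_drafts, threshold=0.95, min_drafts=50):
    """Pick 'autonomous' or 'copilot' for one ticket type from Copilot results."""
    # Too few reviewed drafts to trust the accuracy figure: stay in review mode.
    if total_drafts < min_drafts:
        return "copilot"
    accuracy = correct_drafts / total_drafts
    return "autonomous" if accuracy >= threshold else "copilot"


modes = {
    "order_status": choose_mode(192, 200),     # 96% correct
    "billing_dispute": choose_mode(170, 200),  # 85% correct
    "warranty_claim": choose_mode(10, 10),     # perfect, but only 10 drafts
}
# modes == {"order_status": "autonomous", "billing_dispute": "copilot", "warranty_claim": "copilot"}
```

The sample-size guard matters because a ticket type with ten perfect drafts has not yet shown it can hold that accuracy at volume.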

Monitor the metrics that matter:

| Metric | What it tells you | Target |
|---|---|---|
| Resolution rate | % of tickets AI handles without human intervention | 60-80% for mature deployments |
| CSAT | Customer satisfaction for AI-handled tickets | Match or exceed human-handled |
| Escalation rate | How often AI correctly hands off | Low, with escalated tickets being genuinely complex |
| Response time | Speed of first response | Under 1 minute for AI |

Adjust based on performance. If CSAT drops for a particular ticket type, move it back to Copilot mode. If escalation rate spikes, review your escalation rules. This is continuous optimization, not set-and-forget.
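As a rough illustration of the CSAT check, the sketch below compares AI-handled and human-handled CSAT per ticket type and lists the types to move back to Copilot mode. The function name and the two-point `tolerance` are assumptions chosen for the example, and scores are expressed as fractions between 0 and 1.

```python
def ticket_types_to_roll_back(ai_csat, human_csat, tolerance=0.02):
    """Return ticket types whose AI CSAT trails the human baseline by more than `tolerance`."""
    return sorted(
        ticket_type
        for ticket_type, score in ai_csat.items()
        if ticket_type in human_csat and score < human_csat[ticket_type] - tolerance
    )


ai_csat = {"order_status": 0.92, "returns": 0.80, "faq": 0.90}
human_csat = {"order_status": 0.90, "returns": 0.88, "faq": 0.91}
rollback = ticket_types_to_roll_back(ai_csat, human_csat)
# rollback == ["returns"]
```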

Research from IBM shows that mature AI adopters achieve 17% higher customer satisfaction than those without AI. But maturity takes time. The progressive approach gets you there faster than the "turn it on and hope" alternative.

Timeline: Most teams reach significant autonomy within 2-4 weeks. Full maturity (up to 81% autonomous resolution) typically takes 2-3 months of continuous learning.

Step 5: Define escalation and scope in plain English

One of the most powerful aspects of modern AI is plain-English control. You don't need to write code or configure complex decision trees. You simply tell the AI what to do in natural language.

Examples of effective escalation rules:

- "Always escalate billing disputes over $500 to the finance team."

- "If a customer mentions 'lawsuit' or 'lawyer,' immediately escalate to the legal team."

- "For VIP customers (tier Gold and above), CC their account manager on all responses."

- "If a refund request is over 30 days old, politely decline and offer store credit instead."

- "Technical issues involving integrations should go to the engineering queue."
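The AI interprets rules like these from the plain-English text, so nothing here needs to be coded by hand. Still, when checking the rules in simulation, it can help to spell out the outcome you expect for a few sample tickets. The sketch below does that for four of the rules. The `Ticket` fields, the route labels, and the order of precedence are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    text: str
    category: str              # e.g. "billing_dispute", "refund", "integration"
    amount: float = 0.0        # disputed amount in dollars
    request_age_days: int = 0  # age of the refund request in days


def expected_route(ticket):
    """Return the outcome the example rules imply for a ticket."""
    text = ticket.text.lower()
    # Legal mentions take priority over every other rule (an assumption).
    if "lawsuit" in text or "lawyer" in text:
        return "legal"
    if ticket.category == "billing_dispute" and ticket.amount > 500:
        return "finance"
    if ticket.category == "integration":
        return "engineering"
    if ticket.category == "refund" and ticket.request_age_days > 30:
        return "decline_and_offer_store_credit"
    return "ai"


samples = [
    Ticket("I was double charged $750", "billing_dispute", amount=750),
    Ticket("My lawyer will hear about this charge", "billing_dispute", amount=40),
    Ticket("Please refund my order", "refund", request_age_days=45),
    Ticket("Where is my order?", "order_status"),
]
routes = [expected_route(t) for t in samples]
# routes == ["finance", "legal", "decline_and_offer_store_credit", "ai"]
```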

Set up VIP handling:

Some customers should never interact with AI, or should have AI responses reviewed before sending. Define these segments clearly:

- Enterprise accounts above a certain contract value

- Customers with open escalation history

- Strategic accounts with named account managers

- Customers who explicitly request human agents

Create time-based policies:

Business hours and after-hours can have different rules:

- Business hours: AI handles tier 1, escalates tier 2+ to humans

- After hours: AI handles everything it can, queues complex issues for morning review

- Weekends: AI-only for simple requests, human queue for Monday
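To make the three time windows concrete, here is a minimal sketch of the same policy as a lookup. It assumes business hours of 09:00 to 17:00 on weekdays in the team's local time and treats tier 1 as the "simple" requests. The function name, the hours, and the outcome labels are illustrative choices, not settings from any specific product.

```python
from datetime import datetime


def handling_policy(when, tier, open_hour=9, close_hour=17):
    """Return how a ticket of the given tier is handled at time `when`."""
    # Tier 1 is handled by AI in every window.
    if tier == 1:
        return "ai"
    is_weekend = when.weekday() >= 5  # Saturday is 5, Sunday is 6
    if is_weekend:
        return "queue_for_monday"
    if open_hour <= when.hour < close_hour:
        return "escalate_to_human"
    return "queue_for_morning_review"


wednesday_morning = datetime(2024, 1, 3, 10, 0)
wednesday_night = datetime(2024, 1, 3, 22, 0)
saturday_morning = datetime(2024, 1, 6, 10, 0)

outcomes = [
    handling_policy(wednesday_morning, tier=2),
    handling_policy(wednesday_night, tier=2),
    handling_policy(saturday_morning, tier=2),
]
# outcomes == ["escalate_to_human", "queue_for_morning_review", "queue_for_monday"]
```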

The power of plain-English control is accessibility. Anyone on your team can adjust AI behavior without technical expertise. Support managers can refine policies. Team leads can update escalation rules. The AI adapts to your business rather than forcing your business to adapt to the AI.

Common use cases and expected results

Different support scenarios yield different automation potential. Here's what to expect:

FAQ automation (70-80% resolution rate). Common questions about hours, policies, and basic product features are ideal for AI. Customers get instant answers, and agents focus on harder issues.

Order status and tracking (high automation potential). "Where's my order?" questions are straightforward when AI connects to your e-commerce platform. Customers get real-time updates without waiting for an agent.

Password resets and account issues (straightforward for AI). These follow predictable patterns. AI can verify identity, trigger reset emails, and guide customers through recovery flows.

Returns and refunds (moderate complexity). Good for guided automation when policies are clear. AI can check eligibility, initiate returns, and process refunds within defined limits.

Technical troubleshooting (escalate complex issues). Tier 1 issues ("Have you tried restarting?") work well for AI. Complex debugging should escalate to technical staff.

Triage and routing (high automation value). AI can read incoming tickets, tag them by topic and urgency, and route to the right team before humans even see them.

Real metrics from deployments show what's possible: up to 81% autonomous resolution at maturity, with typical payback periods under two months. The key is matching the right use cases to your AI's capabilities and expanding from there.

Measuring success: KPIs and ROI for AI in customer support

You can't improve what you don't measure. Track these metrics from day one:

Resolution rate. The percentage of tickets AI handles without human intervention. Start small (20-30%) and grow to 60-80% as the AI learns.

CSAT impact. Compare customer satisfaction scores for AI-handled versus human-handled tickets. The goal is parity or improvement, not just efficiency at the cost of experience.

Response time. AI should deliver sub-minute first responses. Measure this separately from resolution time.

Agent productivity. With AI handling routine tickets, agents should resolve more complex issues per hour. Track tickets per agent and time spent on high-value work.

Cost per interaction. Industry data shows AI-handled interactions cost $1 or less, compared to $8-15 for human-handled tickets. Calculate your own numbers based on AI costs and volume.

Escalation rate. How often does AI hand off to a human, and were those handoffs warranted? Too high means AI is being too cautious. Too low means AI is missing complexity it should catch.

Set baseline metrics before implementation. Without "before" numbers, you can't prove improvement. Most teams see measurable gains within the first month of progressive rollout.
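For the cost-per-interaction comparison, a back-of-the-envelope calculation is usually enough to start. The sketch below uses the figures quoted above ($1 per AI-handled interaction, $8 at the low end for human-handled tickets) as defaults. The ticket volume and the 60% AI share are made-up inputs, and the model ignores platform fees and the cost of tickets the AI touches before escalating, so treat it as a first estimate and substitute your own numbers.

```python
def monthly_cost(tickets, ai_share, ai_cost=1.0, human_cost=8.0):
    """Estimate monthly support cost when `ai_share` of tickets is resolved by AI."""
    if not 0.0 <= ai_share <= 1.0:
        raise ValueError("ai_share must be between 0 and 1")
    ai_tickets = tickets * ai_share
    human_tickets = tickets - ai_tickets
    return ai_tickets * ai_cost + human_tickets * human_cost


baseline = monthly_cost(5000, ai_share=0.0)  # all 5,000 tickets handled by humans
with_ai = monthly_cost(5000, ai_share=0.6)   # 3,000 by AI, 2,000 by humans
savings = baseline - with_ai
# baseline is $40,000, with_ai is $19,000, so savings is $21,000 per month
```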

Tips for successful AI implementation

Based on hundreds of deployments, here are the practices that separate successful implementations from failed ones:

- Start small, expand fast. Don't try to automate everything at once. Pick 2-3 high-volume, low-risk ticket types for your initial rollout. Expand scope as the AI proves itself.

- Keep humans in the loop. AI augments agents; it doesn't replace them. Complex issues, emotional situations, and VIP customers need human judgment and empathy.

- Update continuously. AI learns from corrections, new documentation, and policy changes. When you update your help center or change a policy, make sure the AI knows.

- Be transparent. Let customers know when they're interacting with AI. Most don't mind, and it builds trust. Provide easy paths to human agents for those who prefer them.

- Focus on data quality. AI is only as good as the knowledge it learns from. Outdated help articles, conflicting policies, and undocumented tribal knowledge will produce poor AI responses.

- Plan for edge cases. Define clear escalation paths for unusual situations. What happens when AI encounters a ticket it doesn't understand? Where does it go? Who reviews it?

Start implementing AI in your support team today

The progressive approach to AI implementation is simple: Connect your systems, run simulations to verify quality, start with AI drafting for review, then level up to autonomy based on proven performance. Define escalation rules in plain English and expand scope as the AI demonstrates capability.

This teammate model treats AI as a new hire who learns your business and grows with you. It's fundamentally different from the "configure and deploy" approach that leads to disappointing results.

At eesel AI, we've built our entire platform around this philosophy. Our AI Agent handles autonomous responses. Our AI Copilot drafts replies for review. Our AI Triage routes and tags tickets automatically. All three are included in every plan because most teams use all three capabilities at different stages of their AI journey.

Our pricing scales by interactions, not seats. The Team plan starts at $299 per month for up to 1,000 AI interactions. Business plans at $799 include unlimited bots and 3,000 monthly interactions. You can start small, prove value, and scale as your AI handles more volume.

The best part? You can see results in days, not months. Connect your help desk, run simulations on past tickets, and know exactly how AI will perform before it touches a real customer conversation.

Ready to invite an AI teammate to your support team? Start your free trial and see what progressive AI implementation looks like in practice.

Article by

Stevia Putri

Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.