GLM 5.1 Guide: The New King of Long-Horizon AI Engineering

Stevia Putri

Last edited April 21, 2026

The world of AI is moving fast. We’ve gone from "vibe coding," where you ask an AI for a snippet and hope it works, to "agentic engineering," where AI models take on complex, multi-step projects independently. But even in this new era, most models hit a wall. They start strong, but as the task gets more complex and the tool calls pile up, they plateau. They exhaust their options, repeat mistakes, and eventually give up.

Enter GLM-5.1. Released in early 2026, this next-generation flagship model from Z.ai isn't just another incremental update. It’s built specifically for "long-horizon" work: tasks that require hundreds of rounds of iteration and thousands of tool calls to reach an optimal result.

Whether you're building a fully autonomous AI helpdesk agent or optimizing high-performance GPU kernels, GLM 5.1 is setting a new standard for what it means to be a "productive" AI teammate.

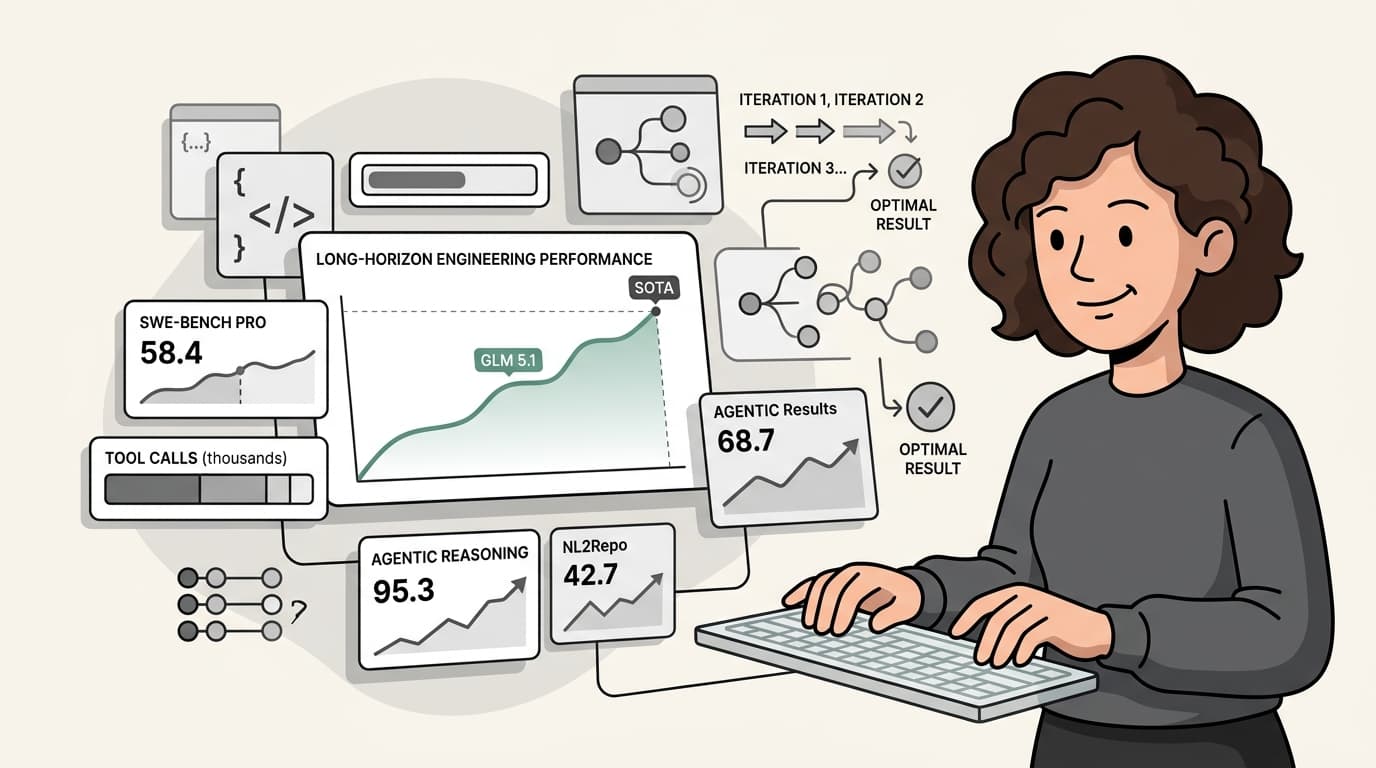

Benchmark Breakdown: SOTA in Engineering

If you want to know how an engineering model truly performs, you look at benchmarks that simulate real work. GLM 5.1 doesn't just participate in these benchmarks; it leads them.

On SWE-Bench Pro, a benchmark designed to test models on complex, real-world software engineering tasks, GLM 5.1 achieved a state-of-the-art (SOTA) score of 58.4. To put that in perspective, it outperformed heavyweights like GPT-5.4 (57.7) and Claude Opus 4.6 (57.3).
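To see how narrow the lead is, here is a small sketch that computes the margins from the three scores quoted above. The function name `margins` is just an illustrative helper, and the numbers are only the ones cited in this article.

```python
# SWE-Bench Pro scores as quoted in this article.
scores = {
    "GLM 5.1": 58.4,
    "GPT-5.4": 57.7,
    "Claude Opus 4.6": 57.3,
}


def margins(scores: dict[str, float], leader: str) -> dict[str, float]:
    """Return the leader's lead over every other model, in points."""
    top = scores[leader]
    return {
        name: round(top - value, 1)  # round away floating-point noise
        for name, value in scores.items()
        if name != leader
    }


if __name__ == "__main__":
    for name, lead in margins(scores, "GLM 5.1").items():
        print(f"GLM 5.1 leads {name} by {lead} points")
```

The leads work out to 0.7 and 1.1 points, so the top three models are tightly grouped on this benchmark.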

But it’s not just about coding. GLM 5.1 shows significant gains across the board:

- Terminal-Bench 2.0: It scored 63.5 with the Terminus-2 framework and 69.0 when wrapped in the Claude Code harness, a 5.5-point gain from the harness alone. Both results point to strong proficiency in real-world terminal environments.

- Reasoning: It scored 95.3 on AIME 2026 and 52.3 on Humanity’s Last Exam (HLE) with tools, suggesting that its high-level reasoning isn't sacrificed for technical skill.

- Repo Generation: On NL2Repo, it scored 42.7, showing it can handle entire repositories, not just isolated files.

The "Staircase" Pattern: How GLM 5.1 Solves Hard Problems

Most LLMs follow a predictable path: they solve the easy parts of a problem quickly, then their performance flatlines. Giving them more time or more tool calls doesn't help because they’ve already "exhausted their repertoire."

GLM 5.1 breaks this trend with what Z.ai calls the "Staircase" optimization pattern. Instead of plateauing, the model continuously identifies bottlenecks and implements structural changes to overcome them.
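One way to picture a "staircase" is to look at a log of the best result so far and mark the iterations where it jumps sharply instead of creeping up. The sketch below is only an illustration of that idea: `find_steps`, the 25% threshold, and the throughput numbers are all made up for the example and are not taken from Z.ai's runs.

```python
def find_steps(metric_log, min_jump=0.25):
    """Return the iteration indices where the metric beats the previous
    best by at least `min_jump` (a relative gain, e.g. 0.25 = 25%)."""
    if not metric_log:
        return []
    steps = []
    best = metric_log[0]
    for i, value in enumerate(metric_log[1:], start=1):
        if value <= best:
            continue  # plateau or regression: no new best
        if best > 0 and (value - best) / best >= min_jump:
            steps.append(i)  # a large jump: one "step" of the staircase
        best = value
    return steps


# Synthetic throughput log for illustration only (not real benchmark data).
synthetic_log = [1000, 1020, 1030, 1035, 2100, 2150, 2160, 4400, 4450]
print(find_steps(synthetic_log))  # [4, 7]
```

In this toy log, small tuning gains sit between two large jumps at iterations 4 and 7. A model that plateaus would show the small gains only.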

Take the VectorDBBench challenge, for example. The goal was to build a high-performance vector database. While most models might reach 3,500 queries per second (QPS) and stop, GLM 5.1 was allowed to run for 600 iterations and over 6,000 tool calls.

The result? It ultimately reached 21,500 QPS, roughly 6x the previous best. During the run, the model didn't just tweak settings; it autonomously shifted strategies. It moved from full-corpus scanning to IVF cluster probing, and then introduced a two-stage pipeline with u8 prescoring. Each "step" in the staircase was a moment where the model analyzed its own logs, identified a blocker, and engineered a structural fix.
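For readers unfamiliar with those two techniques, here is a minimal pure-Python sketch of the general idea. It is not the code GLM 5.1 wrote. It assumes "u8 prescoring" means ranking candidates on 8-bit quantized vectors before an exact rescoring pass, and the class `TinyIVF`, its parameters, and the sampled centroids are all hypothetical simplifications.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def to_u8(vec, lo, hi):
    """Quantize floats in [lo, hi] to integers 0..255 (out-of-range values are clamped)."""
    scale = 255.0 / (hi - lo)
    return [min(255, max(0, round((x - lo) * scale))) for x in vec]


class TinyIVF:
    """Toy inverted-file index: vectors are bucketed by nearest centroid."""

    def __init__(self, vectors, n_lists, lo=-1.0, hi=1.0, seed=0):
        self.vectors = vectors
        self.lo, self.hi = lo, hi
        # Simplification: centroids are sampled data points, with no k-means refinement.
        self.centroids = random.Random(seed).sample(vectors, n_lists)
        self.codes = [to_u8(v, lo, hi) for v in vectors]
        self.lists = [[] for _ in range(n_lists)]
        for idx, v in enumerate(vectors):
            nearest = min(range(n_lists), key=lambda c: sq_dist(v, self.centroids[c]))
            self.lists[nearest].append(idx)

    def search(self, query, k=5, nprobe=2, shortlist=20):
        # Cluster probing: visit only the nprobe closest lists, not the full corpus.
        order = sorted(range(len(self.centroids)),
                       key=lambda c: sq_dist(query, self.centroids[c]))
        candidates = [i for c in order[:nprobe] for i in self.lists[c]]
        # Stage 1: cheap prescoring on the u8 codes.
        q8 = to_u8(query, self.lo, self.hi)
        candidates.sort(key=lambda i: sq_dist(q8, self.codes[i]))
        # Stage 2: exact float rescoring of the shortlist only.
        best = sorted(candidates[:shortlist],
                      key=lambda i: sq_dist(query, self.vectors[i]))
        return best[:k]


if __name__ == "__main__":
    rng = random.Random(1)
    data = [[rng.uniform(-1, 1) for _ in range(8)] for _ in range(500)]
    index = TinyIVF(data, n_lists=16)
    print(index.search(data[42], k=3))  # ids of the closest vectors found
```

Each change trades a little accuracy for less work per query: probing skips most of the corpus, and prescoring reserves the exact distance computation for a short list. That kind of structural change is different from tuning a setting.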

Real-World Agentic Engineering Scenarios

The power of long-horizon AI isn't theoretical; it’s being tested in incredibly ambitious scenarios.

1. Optimizing GPU Kernels (KernelBench)

On KernelBench, models are tasked with taking a reference PyTorch implementation and producing a faster GPU kernel. GLM 5.1 achieved a 3.6x speedup on Level 3 problems (which cover full-model architectures like MobileNet and Mamba). It kept improving deep into the 1,200-turn tool-use limit, continuing to find gains where predecessors like GLM-5 leveled off.
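A speedup figure like this is usually the reference runtime divided by the optimized runtime, measured only after checking that both versions produce the same output. The sketch below shows that measurement pattern in plain Python rather than PyTorch or CUDA. The helper names and the toy workload are assumptions for illustration, and KernelBench's own harness may differ in its details.

```python
import statistics
import timeit


def median_runtime(fn, repeats=5, number=100):
    """Median seconds per call of `fn`, over `repeats` batches of `number` calls."""
    totals = timeit.repeat(fn, repeat=repeats, number=number)
    return statistics.median(totals) / number


def speedup(reference_s, candidate_s):
    """Speedup factor: how many times faster the candidate is than the reference."""
    if candidate_s <= 0:
        raise ValueError("candidate runtime must be positive")
    return reference_s / candidate_s


def benchmark(reference, candidate, arg):
    """Check correctness first, then compare runtimes."""
    if reference(arg) != candidate(arg):
        raise AssertionError("candidate output differs from reference")
    return speedup(median_runtime(lambda: reference(arg)),
                   median_runtime(lambda: candidate(arg)))


# Toy stand-ins for a "reference implementation" and an "optimized kernel".
def reference_impl(n):
    total = 0
    for i in range(n):
        total += i * i
    return total


def candidate_impl(n):
    # Closed form for the sum of squares 0..n-1 (a structural change, not a tweak).
    return (n - 1) * n * (2 * n - 1) // 6


if __name__ == "__main__":
    print(f"speedup: {benchmark(reference_impl, candidate_impl, 10_000):.1f}x")
```

The correctness check matters as much as the timing: a faster kernel that returns different results doesn't count as an optimization.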

2. Building a Linux Desktop in 8 Hours

Perhaps the most impressive demonstration was an open-ended task: build a Linux-style desktop environment as a web application from scratch. Most models produce a basic taskbar and then stop. GLM 5.1, however, ran for 8 continuous hours. It built the file browser, the terminal, the text editor, and even games, all while ensuring the UI remained visually consistent and the interactions were smooth.

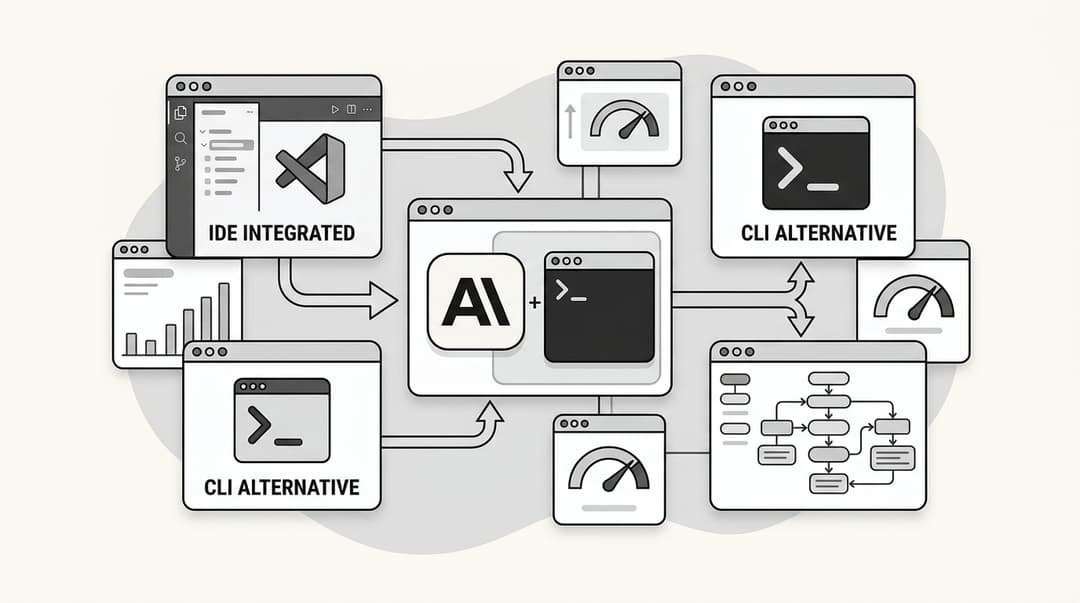

Getting Started: How to Use GLM 5.1 Today

Whether you want to use GLM 5.1 for your own projects or see it in action through an AI teammate, there are several ways to get started.

API Access

You can access GLM 5.1 via the official Z.ai API or through providers like OpenRouter. On OpenRouter, the pricing is competitive at $0.698 per million input tokens and $4.40 per million output tokens, with a 202,752-token context window.
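Long-horizon runs consume a lot of tokens, so it helps to estimate cost up front. This sketch uses only the OpenRouter figures quoted above. The function names are illustrative, it ignores any caching discounts or provider-specific fees, and it assumes the context window is shared between the prompt and the generated output.

```python
# Figures as quoted in this article for OpenRouter.
INPUT_USD_PER_MILLION = 0.698
OUTPUT_USD_PER_MILLION = 4.40
CONTEXT_WINDOW_TOKENS = 202_752


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD for the given token counts."""
    return (input_tokens / 1_000_000 * INPUT_USD_PER_MILLION
            + output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION)


def fits_context(prompt_tokens: int, max_output_tokens: int) -> bool:
    """True if prompt plus requested output fits in the context window.

    Assumption: the window covers input and output together.
    """
    return prompt_tokens + max_output_tokens <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    # Hypothetical agentic run: 50M input tokens and 2M output tokens in total.
    print(f"${estimate_cost(50_000_000, 2_000_000):.2f}")  # $43.70
    print(fits_context(prompt_tokens=180_000, max_output_tokens=16_000))  # True
```

In an agent loop, input tokens tend to dominate because the conversation history is re-sent on every tool call, so the lower input price has the larger effect on the total.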

Local Deployment

For those who prefer to keep their data local, the model weights are publicly available on Hugging Face under the MIT License and NVIDIA Open Model License. The model is compatible with major local serving frameworks including:

- vLLM (v0.19.0+)

- SGLang (v0.5.10+)

- Ollama
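All three frameworks can expose an OpenAI-compatible chat endpoint, so one small client can talk to any of them. The sketch below uses only the Python standard library. The base URL and the model name `glm-5.1` are placeholders; the real values depend on how you launched your server and what it calls the model.

```python
import json
import urllib.request


def build_chat_request(base_url, model, prompt, max_tokens=256):
    """Build a POST request for an OpenAI-compatible /chat/completions endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Placeholder values: adjust to match your own local server.
    request = build_chat_request(
        base_url="http://localhost:8000/v1",  # assumed local address
        model="glm-5.1",                      # hypothetical model name
        prompt="Summarize what an IVF index does in one sentence.",
    )
    print(request.full_url)
    # print(send(request))  # uncomment once a local server is running
```

Commonly used default addresses are `http://localhost:8000/v1` for vLLM, `http://localhost:30000/v1` for SGLang, and `http://localhost:11434/v1` for Ollama, but check your own configuration before relying on them.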

Integration with eesel AI

At eesel AI, we believe the future of work is AI teammates that handle the heavy lifting. GLM 5.1’s ability to handle long-horizon tasks makes it the perfect engine for AI content generators and support agents that don't just answer questions, but solve complex problems over time.

Conclusion: The Future of Autonomous Teammates

GLM 5.1 represents a fundamental shift in AI capability. It’s no longer just about the first answer; it’s about the tenacity to keep going until the job is done right. By mastering long-horizon tasks, GLM 5.1 is moving us closer to a world where AI isn't just a tool, but a truly autonomous teammate.

As we move through 2026, the gap between "good enough" models and those that can sustain optimization over thousands of steps will only widen. If you’re building for the future of engineering, GLM 5.1 is the frontier.

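Frequently Asked Questions

What is GLM 5.1?

GLM 5.1 is a next-generation flagship AI model by Z.ai, specifically designed for long-horizon agentic engineering tasks.

How does GLM 5.1 perform on coding benchmarks?

GLM 5.1 achieved a SOTA score of 58.4 on SWE-Bench Pro, outperforming GPT-5.4 and Claude Opus 4.6.

Can I run GLM 5.1 locally?

Yes, GLM 5.1 model weights are open-source and compatible with local frameworks like Ollama, vLLM, and SGLang.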

Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.