GLM 5.1: The End of the AI Plateau?

Stevia Putri

Last edited April 21, 2026

If you’ve spent any time working with AI coding assistants lately, you’ve probably hit the "plateau." You start a session, the model gives you a brilliant first few lines, and then... it just sort of gives up. It starts repeating the same buggy code, ignores your feedback, or completely loses the plot after a few rounds of debugging.

It’s frustrating because we know the intelligence is there, but the stamina isn't.

Enter GLM 5.1.

Released by Z.AI (Zhipu AI) in April 2026, GLM 5.1 isn't just another incremental update to the LLM leaderboard. It’s a model specifically built to tackle what researchers call "long-horizon" tasks (the kind of complex, multi-step engineering projects that usually cause AI to crash and burn).

Whether you’re a dev lead looking for more reliable autonomous agents or an engineer tired of babysitting your AI, GLM 5.1 represents a massive shift in how we think about "agentic engineering."

The "Plateau" Problem: Why Other Models Fail at Long Tasks

Most LLMs (even the heavy hitters like GPT-5.4 and Claude Opus 4.6) are optimized for "one-shot" or short-session performance. They’re built to give you the right answer immediately. And for most things, that’s great.

But engineering isn't a one-shot game. It’s an iterative process of experimentation, failure, and refinement. When you ask a traditional model to solve a complex repo-level bug, it often exhausts its repertoire early. It applies the most obvious fixes first, and if those don't work, it hits a performance ceiling. Giving it more time or more tool calls usually doesn't help; it just spins its wheels.

This "plateau" happens because most models lack the internal judgment to realize when a strategy isn't working. They can't effectively "backtrack" or rethink their entire approach. Instead, they keep digging the same hole, getting deeper and deeper into a logical dead-end.

Breakthrough: How GLM 5.1 Handles Long-Horizon Tasks

GLM 5.1 was built with a different philosophy: The longer it runs, the better the result should be.

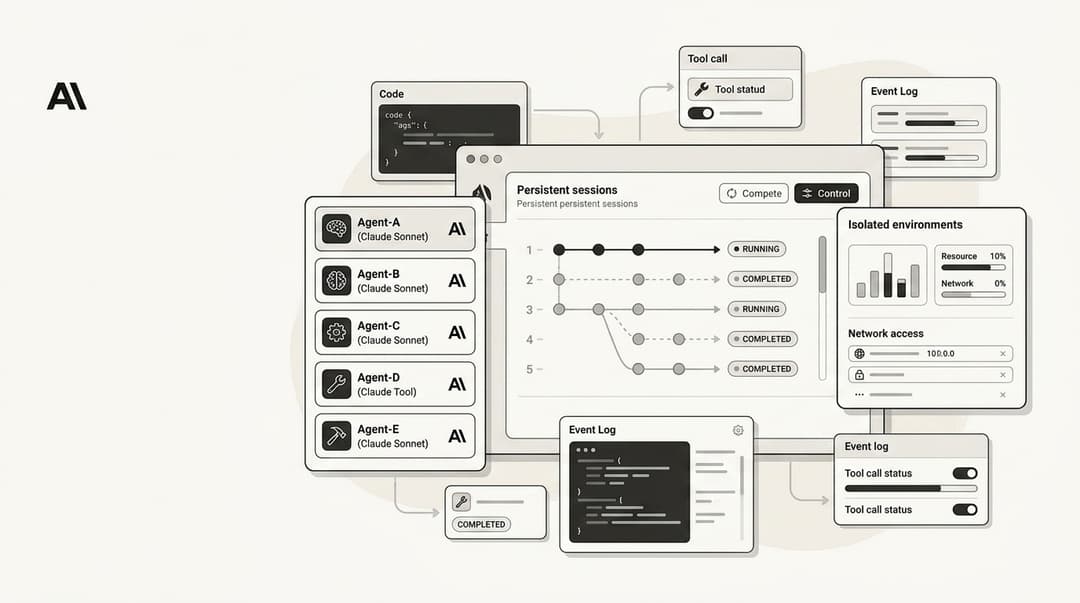

This isn't just marketing fluff. Z.AI has optimized the model for sessions that last over a thousand tool calls. The secret sauce is something they call "Reflection-based Reasoning."

Instead of just barreling forward, GLM 5.1 uses an internal reflection loop to evaluate its intermediate steps. If a command fails or a test suite doesn't pass, the model has the judgment to say, "Wait, this whole strategy is wrong," and backtrack to a previous decision point.
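To make the idea concrete, here is a minimal sketch of a reflect-and-backtrack loop. It is a toy illustration of the general pattern, not Z.AI's implementation: the function `run_with_reflection`, the `patience` parameter, and the strategy names are all hypothetical.

```python
from typing import Callable, List, Optional, Tuple

# A strategy is a name plus a function that takes the attempt number
# and reports whether the check (for example, the test suite) passed.
Strategy = Tuple[str, Callable[[int], bool]]


def run_with_reflection(
    strategies: List[Strategy], patience: int = 2
) -> Tuple[Optional[str], List[str]]:
    """Try strategies in order; abandon one after `patience` failed attempts."""
    log: List[str] = []
    for name, attempt_fn in strategies:
        for attempt in range(1, patience + 1):
            passed = attempt_fn(attempt)
            log.append(f"{name}#{attempt}:{'pass' if passed else 'fail'}")
            if passed:
                return name, log
        # Reflection step: repeated failure suggests the strategy itself is
        # wrong, so go back to the decision point and pick the next option.
        log.append(f"backtrack-from:{name}")
    return None, log


# Toy strategies: the obvious fix never works, the second works on a retry.
strategies = [
    ("patch-call-site", lambda attempt: False),
    ("fix-root-cause", lambda attempt: attempt == 2),
]
winner, log = run_with_reflection(strategies)
print(winner)
print(log)
```

The key design choice is the explicit `patience` limit: without it, the loop would keep retrying the first strategy, which is exactly the "digging the same hole" behavior described earlier.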

This "long-horizon" capability means the model can handle ambiguous problems with much better judgment. It stays productive over hours-long sessions, breaking down complex problems, running experiments, and identifying blockers with real precision. It’s the difference between a junior dev who needs constant supervision and a senior dev who can be left alone to solve a ticket from start to finish.

Benchmark Showdown: Leading the Leaderboards

The numbers back up the hype. On SWE-Bench Pro (a widely used benchmark for evaluating how AI agents solve real-world GitHub issues), GLM 5.1 achieved a 58.4% success rate.

For context, that edges out GPT-5.4 (57.7%) and Claude Opus 4.6 (57.3%). The margins are small, roughly one percentage point or less, but for autonomous agents working through large issue backlogs, each point means more bugs resolved without a human stepping in.
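To put those margins in perspective, the short calculation below converts the score gaps into extra issues resolved per 1,000 attempted. The scores are the ones quoted above; the 1,000-issue backlog is just an illustrative assumption.

```python
# Success rates (percent) as quoted in this article.
scores = {"GLM 5.1": 58.4, "GPT-5.4": 57.7, "Claude Opus 4.6": 57.3}


def extra_resolved_per_1000(score_a: float, score_b: float) -> float:
    """Extra issues resolved per 1,000 attempts when A beats B by the given scores."""
    # One percentage point equals 10 issues per 1,000.
    return round((score_a - score_b) * 10, 1)


vs_gpt = extra_resolved_per_1000(scores["GLM 5.1"], scores["GPT-5.4"])
vs_claude = extra_resolved_per_1000(scores["GLM 5.1"], scores["Claude Opus 4.6"])
print(vs_gpt, vs_claude)
```

In other words, the lead works out to about 7 and 11 additional resolved issues per 1,000, respectively.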

But it’s not just about one benchmark. GLM 5.1 also leads on:

- Terminal-Bench 2.0: A benchmark for real-world terminal tasks like environment setup and log analysis.

- NL2Repo: A test for generating entire, architecturally consistent repositories from scratch.

These results suggest that GLM 5.1 is more than just a chatbot; it’s a strong foundation for autonomous systems.

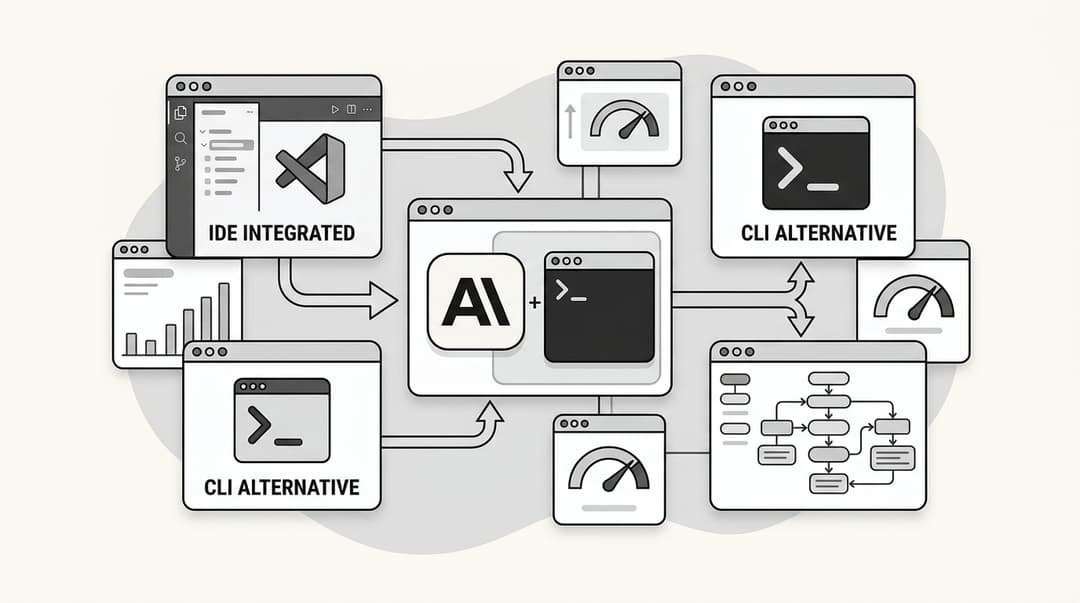

Practical Guide: How to Deploy and Use GLM 5.1 Today

The good news is that you don't need a massive server farm to start playing with this. GLM 5.1 is surprisingly accessible.

API Access

For most production use cases, you’ll want to go the API route. As of April 2026, OpenRouter and NVIDIA Build are the primary providers.

- OpenRouter Pricing: Around $2.00 per 1M input tokens and $6.00 per 1M output tokens.

- NVIDIA Build: Offers slightly more competitive rates and free credits for developers to test the model.
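For budgeting, here is a rough cost estimator based on the approximate OpenRouter rates listed above. The session shape (1,000 tool calls averaging 4,000 input and 500 output tokens each) is a made-up assumption for illustration; real agent sessions usually grow their context over time, so treat the result as a ballpark.

```python
# Approximate OpenRouter rates quoted above, in USD per 1M tokens.
INPUT_USD_PER_M = 2.00
OUTPUT_USD_PER_M = 6.00


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the API cost of a session from its total token counts."""
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_M
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_M
    return input_cost + output_cost


# Hypothetical long-horizon session: 1,000 tool calls with assumed averages.
calls = 1_000
cost = estimate_cost_usd(calls * 4_000, calls * 500)
print(f"${cost:.2f}")
```

Under these assumptions the session costs about $11: 4M input tokens at $2.00 per million plus 0.5M output tokens at $6.00 per million.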

Local Deployment

If you’re privacy-conscious or just like running things on your own hardware, Ollama has already added support for GLM 5.1. You can run it locally in GGUF or FP16 formats, though you’ll want a decent GPU setup to get the most out of the flagship version.
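If you go the local route, a request to Ollama's chat endpoint might look like the sketch below. The URL is Ollama's default local address; the model tag `glm-5.1` is an assumption, so check the Ollama model library for the actual name. The code only builds the request, which means it runs even without a server.

```python
import json
import urllib.request

OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"  # Ollama's default local address
MODEL_TAG = "glm-5.1"  # assumption: confirm the real tag in the Ollama model library


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) a non-streaming chat request for a local Ollama server."""
    body = {
        "model": MODEL_TAG,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON response instead of a token stream
    }
    return urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request("Summarize the failing test in one sentence.")
print(req.full_url, req.get_method())

# To send it once the server is running and the model has been pulled:
#   with urllib.request.urlopen(req) as resp:
#       print(json.loads(resp.read())["message"]["content"])
```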

Pro Tip for Prompting

Because GLM 5.1 is built for iteration, don't be afraid to give it open-ended, complex tasks. Instead of breaking things into tiny steps for the model, give it the goal and the tools, and let its internal reflection loop handle the heavy lifting.
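In practice, "give it the goal and the tools" can be as simple as one open-ended user message plus a tool list. The sketch below builds such a payload in the OpenAI-compatible chat format that OpenRouter accepts. The model slug `z-ai/glm-5.1`, the `run_tests` tool, and the system prompt wording are all assumptions for illustration.

```python
import json
from typing import Any, Dict, List

MODEL_SLUG = "z-ai/glm-5.1"  # assumption: check your provider's model list for the real ID


def build_request(goal: str, tools: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Build a chat payload that states the goal once and exposes the tools."""
    return {
        "model": MODEL_SLUG,
        "messages": [
            {
                "role": "system",
                "content": "Work autonomously. Re-plan when an approach keeps failing.",
            },
            {"role": "user", "content": goal},
        ],
        "tools": tools,
    }


# Hypothetical tool definition in the OpenAI-compatible function-calling format.
run_tests_tool = {
    "type": "function",
    "function": {
        "name": "run_tests",
        "description": "Run the project's test suite and return the output.",
        "parameters": {
            "type": "object",
            "properties": {
                "path": {"type": "string", "description": "Directory containing the tests."}
            },
            "required": ["path"],
        },
    },
}

payload = build_request(
    "Make the failing tests in tests/ pass without changing the public API.",
    [run_tests_tool],
)
print(json.dumps(payload, indent=2))
```

Note what is missing: there is no step-by-step plan in the prompt. The goal states the success condition, and the tool gives the model a way to check its own progress.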

The eesel AI Perspective: LLMs for Autonomous Support

At eesel AI, we’re constantly looking for ways to make our autonomous support agents even more reliable. For us, the "long-horizon" breakthrough is a game-changer.

Customer support tickets often require multi-step debugging (checking a customer's account, looking at log files, verifying a technical integration, and then formulating a fix). If an AI agent "plateaus" halfway through that process, the customer gets a bad experience.

GLM 5.1’s ability to persist through hundreds of tool calls and self-correct when it hits a wall is exactly what’s needed for truly autonomous support. It allows us to run more reliable "simulation modes" where we test the AI on thousands of past tickets to catch reasoning dead-ends before they reach a customer.
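As a rough illustration of what replaying past tickets could look like, here is a toy harness. It is not eesel AI's actual simulation mode: the `simulate` function, the `step_budget` parameter, and the stand-in agent are hypothetical.

```python
from typing import Callable, Dict, List, Optional

# An agent takes a ticket and a step budget, and returns the number of steps
# it used, or None if it ran out of budget without resolving the ticket.
Agent = Callable[[str, int], Optional[int]]


def simulate(tickets: List[str], agent: Agent, step_budget: int) -> Dict[str, object]:
    """Replay past tickets and report which ones the agent failed to resolve."""
    stalled = [t for t in tickets if agent(t, step_budget) is None]
    resolved = len(tickets) - len(stalled)
    rate = resolved / len(tickets) if tickets else 0.0
    return {"resolved": resolved, "stalled": stalled, "resolution_rate": rate}


def toy_agent(ticket: str, step_budget: int) -> Optional[int]:
    """Stand-in agent: pretends longer tickets need more steps."""
    steps_needed = len(ticket)
    return steps_needed if steps_needed <= step_budget else None


past_tickets = ["reset password", "webhook returns 500 after plan upgrade"]
report = simulate(past_tickets, toy_agent, step_budget=20)
print(report)
```

The list of stalled tickets is the useful output here: those are the cases to inspect before letting an agent handle similar tickets live.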

Check out how eesel AI builds autonomous agents

Conclusion

The release of GLM 5.1 marks the end of the "AI plateau" era. We’re moving away from models that just answer questions and toward agentic teammates that can solve problems.

The ability to keep trying, to backtrack, and to maintain productivity over long horizons is what will define the next generation of AI tools. If you haven't tried it yet, GLM 5.1 is a great place to start.

Article by

Stevia Putri

Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.