Zendesk API incremental export: A complete developer guide

Stevia Putri

Last edited March 2, 2026

Keeping your external systems in sync with Zendesk data can feel like a never-ending task. Whether you're building a data warehouse, running analytics, or syncing ticket information to your CRM, you need a reliable way to fetch only what's changed since your last update. The Zendesk API incremental export is built exactly for this purpose.

Unlike the standard API endpoints designed for real-time queries, incremental exports are built specifically for bulk data synchronization. They let you fetch records that were created or updated since a specific point in time, making them ideal for ETL pipelines and regular data syncs.

In this guide, you'll learn how to use the Zendesk incremental export API effectively. We'll cover the two pagination approaches (cursor-based and time-based), walk through a complete Python implementation, and share best practices for production data pipelines. If you're looking for a way to get Zendesk analytics without building custom infrastructure, eesel AI offers native Zendesk integrations that handle the data sync automatically.

What is the Zendesk incremental export API?

The incremental export API is a set of endpoints designed for bulk data export rather than individual record lookups. While the standard Tickets API is great for fetching a specific ticket or searching with filters, it's not optimized for syncing large datasets efficiently.

Here's how incremental exports work: you provide a start time (as a Unix epoch timestamp), and the API returns all records created or updated since that time. On your next sync, you use the end time or cursor from the previous response as your new start point. This creates an efficient sync loop where you only fetch changed data.
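As a small illustration of the start time input, the sketch below converts a timezone-aware datetime into a Unix epoch timestamp in seconds. The helper name `to_epoch_seconds` and the fixed reference instant are illustrative choices, not part of the Zendesk API.

```python
from datetime import datetime, timedelta, timezone


def to_epoch_seconds(moment: datetime) -> int:
    """Convert a timezone-aware datetime to whole Unix epoch seconds."""
    if moment.tzinfo is None:
        # Naive datetimes are interpreted as local time, which is easy to get wrong.
        raise ValueError("Pass a timezone-aware datetime")
    return int(moment.timestamp())


# Example: a start time 24 hours before a fixed reference instant (UTC).
reference = datetime(2026, 3, 2, 12, 0, 0, tzinfo=timezone.utc)
start_time = to_epoch_seconds(reference - timedelta(days=1))
```

Working in UTC with timezone-aware values avoids surprises when the sync job runs on machines with different local time zones.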

The API supports two pagination methods:

- Cursor-based pagination uses an opaque cursor pointer to track position. It's more consistent, eliminates duplicates, and is the recommended approach when available.

- Time-based pagination uses start and end timestamps. It's supported by all incremental endpoints but can return duplicate records when multiple items share the same timestamp.

You can incrementally export tickets, users, organizations, ticket events, Talk call data, Chat conversations, Help Center articles, and custom object records. For teams building comprehensive analytics, our guide on the Zendesk ticket API covers additional endpoints that complement incremental exports.

When to use incremental exports vs Search API

A common question is whether to use incremental exports or the Search API for fetching ticket data. The key difference is purpose: incremental exports are designed for data synchronization, while Search is designed for querying.

Use incremental exports when:

- You're syncing data to a data warehouse or external system

- You need to process every ticket change reliably

- You're building analytics or reporting pipelines

- You want to minimize API calls by fetching only changes

Use Search API when:

- You need to find specific tickets matching complex criteria

- You're building a search interface for agents

- You need real-time results with advanced filtering

Cursor-based vs time-based pagination: Which should you use?

Zendesk offers both pagination methods, but cursor-based is the clear winner when available. Here's how they differ.

Cursor-based pagination (recommended)

Cursor-based exports use an opaque pointer (the cursor) to track your position in the dataset. After your initial request with a start_time, subsequent requests use the cursor parameter.

Key benefits:

- No duplicate records, even when items share timestamps

- More consistent response times and payload sizes

- Better performance for large datasets

- Equal or higher rate limits (10 requests per minute for tickets, 20 for users, or 60 with the High Volume API add-on)

Supported resources:

- Tickets

- Users

- Custom object records

How it works:

- Make the initial request with the start_time parameter

- Extract after_cursor from the response

- Use the cursor parameter for the next request

- Repeat until end_of_stream is true
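The cursor flow described above can be sketched as a small generator. To keep it runnable offline, the HTTP call is injected as a `fetch` callable; `iter_cursor_pages`, `fake_fetch`, and the sample pages are hypothetical names and data made up for illustration, while `start_time`, `cursor`, `after_cursor`, and `end_of_stream` are the fields named in this guide.

```python
from typing import Callable, Dict, Iterator


def iter_cursor_pages(fetch: Callable[[Dict[str, object]], dict],
                      start_time: int,
                      max_pages: int = 10_000) -> Iterator[dict]:
    """Yield pages until end_of_stream, following after_cursor between requests."""
    params: Dict[str, object] = {"start_time": start_time}  # first request only
    for _ in range(max_pages):
        page = fetch(params)
        yield page
        if page.get("end_of_stream"):
            return
        cursor = page.get("after_cursor")
        if not cursor:
            raise RuntimeError("Response had neither end_of_stream nor after_cursor")
        params = {"cursor": cursor}  # later requests send the cursor instead
    raise RuntimeError("max_pages reached before end_of_stream")


# Stand-in for the real HTTP call: two canned pages keyed by cursor value.
_FAKE_PAGES = {
    "start": {"tickets": [{"id": 1}], "after_cursor": "abc", "end_of_stream": False},
    "abc": {"tickets": [{"id": 2}], "after_cursor": "def", "end_of_stream": True},
}


def fake_fetch(params):
    return _FAKE_PAGES[params.get("cursor", "start")]


pages = list(iter_cursor_pages(fake_fetch, start_time=1700000000))
ticket_ids = [t["id"] for page in pages for t in page["tickets"]]
```

In a real pipeline, `fetch` would wrap the authenticated HTTP request, and the `max_pages` guard is only a safety net against an unexpected loop.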

Time-based pagination

Time-based exports use Unix epoch timestamps to define your query window. Each response includes an end_time that you use as the start_time for your next request.

Limitations:

- Can return duplicate records when multiple items share the same timestamp

- Less consistent performance

- Rate limits capped at 10 requests per minute, with no higher tier for users

When to use it:

- When cursor-based isn't available (ticket events, organizations, Talk data)

- For simple scripts where duplicate handling isn't critical
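A minimal sketch of the time-based loop follows, again with the HTTP call injected so it runs offline. It feeds each response's end_time back in as the next start_time. The stop condition (an `end_of_stream` flag, or an end_time that fails to advance) is an assumption of this sketch, and `iter_time_based_pages`, `fake_fetch`, and the sample records are hypothetical.

```python
def iter_time_based_pages(fetch, start_time, max_pages=10_000):
    """Yield pages, using each response's end_time as the next start_time."""
    for _ in range(max_pages):
        page = fetch({"start_time": start_time})
        yield page
        next_start = page.get("end_time")
        # Assumed stop condition: explicit end_of_stream, or no forward progress.
        if page.get("end_of_stream") or not next_start or next_start <= start_time:
            return
        start_time = next_start
    raise RuntimeError("max_pages reached before the export finished")


# Canned pages keyed by start_time. Ticket 2 appears on both pages because it
# shares the boundary timestamp, which is the duplicate case described above.
_FAKE_PAGES = {
    100: {"tickets": [{"id": 1, "updated_at": "t1"}, {"id": 2, "updated_at": "t2"}],
          "end_time": 200, "end_of_stream": False},
    200: {"tickets": [{"id": 2, "updated_at": "t2"}, {"id": 3, "updated_at": "t3"}],
          "end_time": 300, "end_of_stream": True},
}


def fake_fetch(params):
    return _FAKE_PAGES[params["start_time"]]


raw = [t for page in iter_time_based_pages(fake_fetch, 100) for t in page["tickets"]]

# Drop repeats of the same (id, updated_at) pair while keeping order.
seen = set()
unique = []
for ticket in raw:
    key = (ticket["id"], ticket["updated_at"])
    if key not in seen:
        seen.add(key)
        unique.append(ticket)
```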

Decision matrix

| Factor | Cursor-based | Time-based |
|---|---|---|
| Duplicate handling | None (guaranteed unique) | Must deduplicate manually |
| Performance | Consistent | Variable |
| Rate limit | 10-20/min (60 with add-on) | 10/min |
| Available for | Tickets, users, custom objects | All resources |

Use cursor-based whenever possible. The only time you should use time-based is when cursor-based isn't available for your resource type.

Getting started: Authentication and prerequisites

Before you start coding, you'll need a few things set up.

Required:

- A Zendesk account with admin privileges

- An API token (generate one in Admin Center > Apps and integrations > APIs > Zendesk API)

- Python 3.7+ installed

Python packages:

pip install requests python-dotenv

Environment setup:

Create a .env file to store your credentials securely:

ZENDESK_SUBDOMAIN=your-subdomain

ZENDESK_EMAIL=your-email@company.com

ZENDESK_API_TOKEN=your-api-token

The incremental export API uses Basic authentication. You'll pass your email address combined with /token as the username, and your API token as the password.
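To make the credential format concrete, here is a sketch that builds the Authorization header by hand using only the standard library. The helper name `basic_auth_header` and the sample credentials are placeholders; in practice an HTTP client's built-in Basic auth support does the same thing.

```python
import base64


def basic_auth_header(email: str, api_token: str) -> dict:
    """Build a Basic auth header where the username is '<email>/token'."""
    credentials = f"{email}/token:{api_token}".encode("utf-8")
    encoded = base64.b64encode(credentials).decode("ascii")
    return {"Authorization": f"Basic {encoded}"}


# Placeholder credentials for illustration only.
header = basic_auth_header("jdoe@example.com", "abc123")
```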

Step-by-step: Exporting tickets with cursor-based pagination

Let's walk through a complete implementation for exporting tickets using cursor-based pagination. This pattern handles pagination, rate limiting, and error recovery.

The code

import datetime
import os
import time

import requests
from dotenv import load_dotenv
from requests.auth import HTTPBasicAuth

load_dotenv()


class ZendeskIncrementalExport:
    def __init__(self):
        self.subdomain = os.getenv('ZENDESK_SUBDOMAIN')
        self.email = os.getenv('ZENDESK_EMAIL')
        self.api_token = os.getenv('ZENDESK_API_TOKEN')
        self.base_url = f"https://{self.subdomain}.zendesk.com/api/v2"
        # API token auth: the username is "<email>/token", the password is the token
        self.auth = HTTPBasicAuth(f"{self.email}/token", self.api_token)

    def export_tickets(self, start_time=None, cursor=None, max_retries=5):
        """
        Export one page of tickets using cursor-based pagination.

        Args:
            start_time: Unix timestamp for initial export (required for first call)
            cursor: Cursor from previous response (for subsequent calls)
            max_retries: Maximum number of retries after a 429 response
        """
        url = f"{self.base_url}/incremental/tickets/cursor.json"
        params = {}
        if cursor:
            params['cursor'] = cursor
        elif start_time is not None:
            params['start_time'] = start_time
        else:
            raise ValueError("Either start_time or cursor must be provided")

        # Retry in a bounded loop instead of recursing without a limit
        for attempt in range(max_retries + 1):
            try:
                response = requests.get(url, auth=self.auth, params=params, timeout=30)
                if response.status_code == 429 and attempt < max_retries:
                    # Rate limited - wait for the period given in Retry-After
                    retry_after = int(response.headers.get('Retry-After', 60))
                    print(f"Rate limited. Waiting {retry_after} seconds...")
                    time.sleep(retry_after)
                    continue
                response.raise_for_status()
                return response.json()
            except requests.exceptions.RequestException as e:
                print(f"Request failed: {e}")
                raise

    def full_export(self, start_time):
        """
        Perform a complete export, handling pagination automatically.
        """
        all_tickets = []
        cursor = None
        page_count = 0

        while True:
            data = self.export_tickets(
                start_time=start_time if cursor is None else None,
                cursor=cursor
            )
            tickets = data.get('tickets', [])
            all_tickets.extend(tickets)
            page_count += 1
            print(f"Fetched page {page_count}: {len(tickets)} tickets")

            # Check if we've reached the end
            if data.get('end_of_stream'):
                print(f"Export complete. Total tickets: {len(all_tickets)}")
                return {
                    'tickets': all_tickets,
                    'final_cursor': data.get('after_cursor'),
                    'pages': page_count
                }

            cursor = data.get('after_cursor')

            # Respect rate limits - sleep between requests
            time.sleep(6)  # 10 requests/min for tickets = 1 request per 6 seconds


if __name__ == "__main__":
    exporter = ZendeskIncrementalExport()

    # Start from 24 hours ago
    start_time = int((datetime.datetime.now() - datetime.timedelta(days=1)).timestamp())
    result = exporter.full_export(start_time)

    # Save the final cursor for next sync
    print(f"Save this cursor for next run: {result['final_cursor']}")

Key implementation details

The 1-minute exclusion window: The API excludes data from the most recent minute to prevent race conditions. Your end_time will never be more recent than one minute ago. Plan your sync schedule accordingly.

Rate limit handling: When the code receives a 429 response, it waits and then retries the same request. The Retry-After header tells you exactly how long to wait, so no guessing is needed here. Exponential backoff is covered later in this guide.

Cursor persistence: Always save the final cursor atomically with your data writes. If your script crashes after writing data but before saving the cursor, you'll process duplicate records on the next run.
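One way to get that atomicity is to write the page of tickets and the cursor inside a single database transaction. The sketch below uses SQLite from the standard library as a stand-in for a real warehouse; the table names, column names, and helper functions are all hypothetical.

```python
import json
import sqlite3


def init_schema(conn):
    conn.execute(
        "CREATE TABLE IF NOT EXISTS tickets (id INTEGER PRIMARY KEY, payload TEXT NOT NULL)"
    )
    # A single-row table: the CHECK constraint pins the row id to 1.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sync_state ("
        "id INTEGER PRIMARY KEY CHECK (id = 1), after_cursor TEXT NOT NULL)"
    )


def write_page_atomically(conn, tickets, after_cursor):
    """Write a page of tickets and its cursor in one transaction."""
    with conn:  # commits on success, rolls back if anything raises
        conn.executemany(
            "INSERT OR REPLACE INTO tickets (id, payload) VALUES (?, ?)",
            [(t["id"], json.dumps(t)) for t in tickets],
        )
        conn.execute(
            "INSERT OR REPLACE INTO sync_state (id, after_cursor) VALUES (1, ?)",
            (after_cursor,),
        )


def load_cursor(conn):
    row = conn.execute("SELECT after_cursor FROM sync_state WHERE id = 1").fetchone()
    return row[0] if row else None


conn = sqlite3.connect(":memory:")
init_schema(conn)
write_page_atomically(conn, [{"id": 1, "status": "open"}], "cursor-1")
```

If the cursor write fails, the ticket rows from the same page are rolled back too, so the stored cursor never points past data that was not saved.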

Excluding deleted tickets: Add exclude_deleted=true to your request parameters if you don't want deleted tickets in your export. Deleted tickets are retained for 90 days after permanent deletion, so they'll appear in exports unless excluded.
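As a rough sketch, the request parameters could be assembled by a small helper like the one below. The function name `build_ticket_export_params` is hypothetical, and sending exclude_deleted on cursor requests as well as on the initial request is an assumption of this sketch.

```python
def build_ticket_export_params(start_time=None, cursor=None, exclude_deleted=False):
    """Build query parameters for one ticket export request."""
    if cursor:
        params = {"cursor": cursor}
    elif start_time is not None:
        params = {"start_time": int(start_time)}
    else:
        raise ValueError("Either start_time or cursor must be provided")
    if exclude_deleted:
        # Assumption: the flag is repeated on every request, not only the first.
        params["exclude_deleted"] = "true"
    return params


first_request = build_ticket_export_params(start_time=1700000000, exclude_deleted=True)
next_request = build_ticket_export_params(cursor="abc", exclude_deleted=True)
```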

Working with other incremental export endpoints

The same patterns apply to other Zendesk resources, though endpoint URLs and available pagination methods vary.

Users and organizations

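Users follow the same cursor-based pattern as tickets. Organizations are only available through time-based pagination, so use start_time and end_time for them:

# Users: cursor-based
url = f"{base_url}/incremental/users/cursor.json"

# Organizations: time-based
url = f"{base_url}/incremental/organizations.json"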

Ticket events

Ticket events are time-based only and include a record of every change made to tickets:

url = f"{base_url}/incremental/ticket_events.json"

params = {'start_time': start_time, 'include': 'comment_events'}

The comment_events sideload is particularly useful if you need the actual comment text, not just metadata about changes.

Talk call data

Export call records and call legs for voice analytics:

url = f"{base_url}/channels/voice/stats/incremental/calls.json"

url = f"{base_url}/channels/voice/stats/incremental/legs.json"

Rate limit: 10 requests per minute for Talk endpoints.

Chat data

Chat exports use microseconds instead of seconds for timestamps:

url = f"{base_url}/chat/incremental/chats.json"

start_time_micro = start_time * 1000000

url = f"{base_url}/chat/incremental/agent_timeline.json?start_time={start_time_micro}"

Note: Chat API requires the start time to be at least 5 minutes in the past.
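A small helper can apply both rules at once: the 5-minute minimum age and the conversion to microseconds used in the snippet above. The name `chat_start_time_micros` and the `now_seconds` parameter are illustrative, not part of the Chat API.

```python
import time

MICROS_PER_SECOND = 1_000_000
MIN_AGE_SECONDS = 5 * 60  # the 5-minute rule from the note above


def chat_start_time_micros(start_time_seconds, now_seconds=None):
    """Validate the start time's age and convert it to microseconds."""
    now = time.time() if now_seconds is None else now_seconds
    if start_time_seconds > now - MIN_AGE_SECONDS:
        raise ValueError("Start time must be at least 5 minutes in the past")
    return int(start_time_seconds) * MICROS_PER_SECOND


# now_seconds is passed explicitly here so the example is deterministic.
start_micro = chat_start_time_micros(1_700_000_000, now_seconds=1_700_000_600)
```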

Help Center articles

Export article metadata changes:

url = f"{base_url}/help_center/incremental/articles.json"

Returns up to 1,000 articles per page. The next_page URL contains a new start time based on the last article's update timestamp.

Handling rate limits and errors

Production data pipelines need robust error handling. Here's what to watch for.

Rate limit headers

Cursor-based endpoints return detailed rate limit information:

Zendesk-RateLimit-incremental-exports-cursor: total=20; remaining=15; resets=45

Parse these headers to proactively throttle your requests rather than waiting for 429 responses.
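A minimal parsing sketch, assuming the header value always follows the `name=number; name=number` shape of the example above. The function names and the `reserve` threshold are hypothetical choices.

```python
def parse_rate_limit_header(value):
    """Parse 'total=20; remaining=15; resets=45' into a dict of integers."""
    fields = {}
    for part in value.split(";"):
        name, sep, number = part.strip().partition("=")
        number = number.strip()
        if sep and number.isdigit():
            fields[name.strip()] = int(number)
    return fields


def seconds_to_wait(fields, reserve=1):
    """Return how long to pause when the remaining budget is nearly used up."""
    if fields.get("remaining", reserve + 1) <= reserve:
        return fields.get("resets", 0)
    return 0


limits = parse_rate_limit_header("total=20; remaining=15; resets=45")
```

Keeping a small reserve rather than running the budget to zero leaves room for retries and for other jobs that share the same limit.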

Exponential backoff strategy

When you hit a rate limit, use exponential backoff with jitter:

import random
import time

import requests

def backoff_with_jitter(attempt, base_delay=3):
    """Calculate delay with exponential backoff and jitter."""
    delay = min(base_delay * (2 ** attempt), 60)  # Cap at 60 seconds
    jitter = random.uniform(0, delay * 0.1)  # Add 0-10% jitter
    return delay + jitter

# Assumes url and auth are already defined, as in the exporter class earlier
max_attempts = 5
for attempt in range(max_attempts):
    try:
        response = requests.get(url, auth=auth, timeout=30)
        if response.status_code == 429:
            delay = backoff_with_jitter(attempt)
            time.sleep(delay)
            continue
        response.raise_for_status()
        break
    except requests.exceptions.RequestException:
        if attempt == max_attempts - 1:  # Last attempt
            raise
        delay = backoff_with_jitter(attempt)
        time.sleep(delay)
else:
    # The loop never hit break: every attempt was rate limited
    raise RuntimeError("Still rate limited after all retry attempts")

Common errors and solutions

| Error | Cause | Solution |
|---|---|---|
| 401 Unauthorized | Invalid credentials | Check email format (must include /token) and API token |
| 403 Forbidden | Insufficient permissions | Ensure account has admin access |
| 422 Unprocessable | Invalid start_time | Verify timestamp is at least 60 seconds in the past |
| 429 Too Many Requests | Rate limit exceeded | Implement backoff and respect Retry-After header |
| 500/502/503 | Zendesk server error | Retry with exponential backoff |

Testing with sample exports

Zendesk provides a sample export endpoint with stricter limits (10 requests per 20 minutes) but smaller responses. Use this for development and testing:

url = f"{base_url}/incremental/tickets/sample.json?start_time={start_time}"

Building a production data pipeline

For production use, you'll want a more robust architecture than a simple script.

Recommended architecture

Scheduled Job (Airflow/Lambda/Cron)

↓

Incremental Export API

↓

Data Validation & Transform

↓

Data Warehouse (Snowflake/BigQuery/Redshift)

↓

Analytics Dashboard

Storing cursor state

Never rely on local files for cursor storage in production. Use a persistent store:

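import os

import psycopg2

def save_cursor(cursor, last_sync_time):
    # DATABASE_URL is an assumed environment variable holding the connection string
    conn = psycopg2.connect(os.environ["DATABASE_URL"])
    try:
        # "with conn" commits on success and rolls back on error
        with conn, conn.cursor() as cur:
            # A fixed id keeps a single state row, so ON CONFLICT (id) actually fires
            cur.execute("""
                INSERT INTO zendesk_sync_state (id, cursor, last_sync_time, updated_at)
                VALUES (1, %s, %s, NOW())
                ON CONFLICT (id) DO UPDATE SET
                    cursor = EXCLUDED.cursor,
                    last_sync_time = EXCLUDED.last_sync_time,
                    updated_at = EXCLUDED.updated_at
            """, (cursor, last_sync_time))
    finally:
        conn.close()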

Deduplication for time-based exports

If you're using time-based pagination, implement deduplication:

def deduplicate_records(records, key_fields):
    """
    Remove duplicates based on composite key.

    For tickets: (id, updated_at)
    For ticket events: (id, created_at)
    """
    seen = set()
    unique = []
    for record in records:
        key = tuple(record.get(f) for f in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique

tickets = deduplicate_records(tickets, ['id', 'updated_at'])

Monitoring and alerting

Track these metrics in your pipeline:

- Sync duration and record counts

- Rate limit hits and retry counts

- Failed requests and error rates

- Data freshness (time since last successful sync)

Set up alerts for:

- Sync failures or excessive retries

- Unusually low record counts (possible API issues)

- Data freshness exceeding your SLA
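As a rough sketch of the freshness and record-count checks, the function below returns a list of alert messages for one sync run. The function name, parameters, and thresholds are all hypothetical; a real pipeline would send these to whatever alerting tool it already uses.

```python
def sync_alerts(last_success_epoch, now_epoch, sla_seconds,
                record_count, min_expected_records):
    """Return human-readable alerts for a stale or suspiciously small sync."""
    alerts = []
    age = now_epoch - last_success_epoch
    if age > sla_seconds:
        alerts.append(f"stale: last successful sync was {age}s ago (SLA {sla_seconds}s)")
    if record_count < min_expected_records:
        alerts.append(
            f"low volume: {record_count} records (expected at least {min_expected_records})"
        )
    return alerts


# Example values: last success two hours ago against a one-hour SLA.
alerts = sync_alerts(
    last_success_epoch=1_700_000_000,
    now_epoch=1_700_007_200,
    sla_seconds=3600,
    record_count=3,
    min_expected_records=10,
)
```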

Alternative: Managed solutions

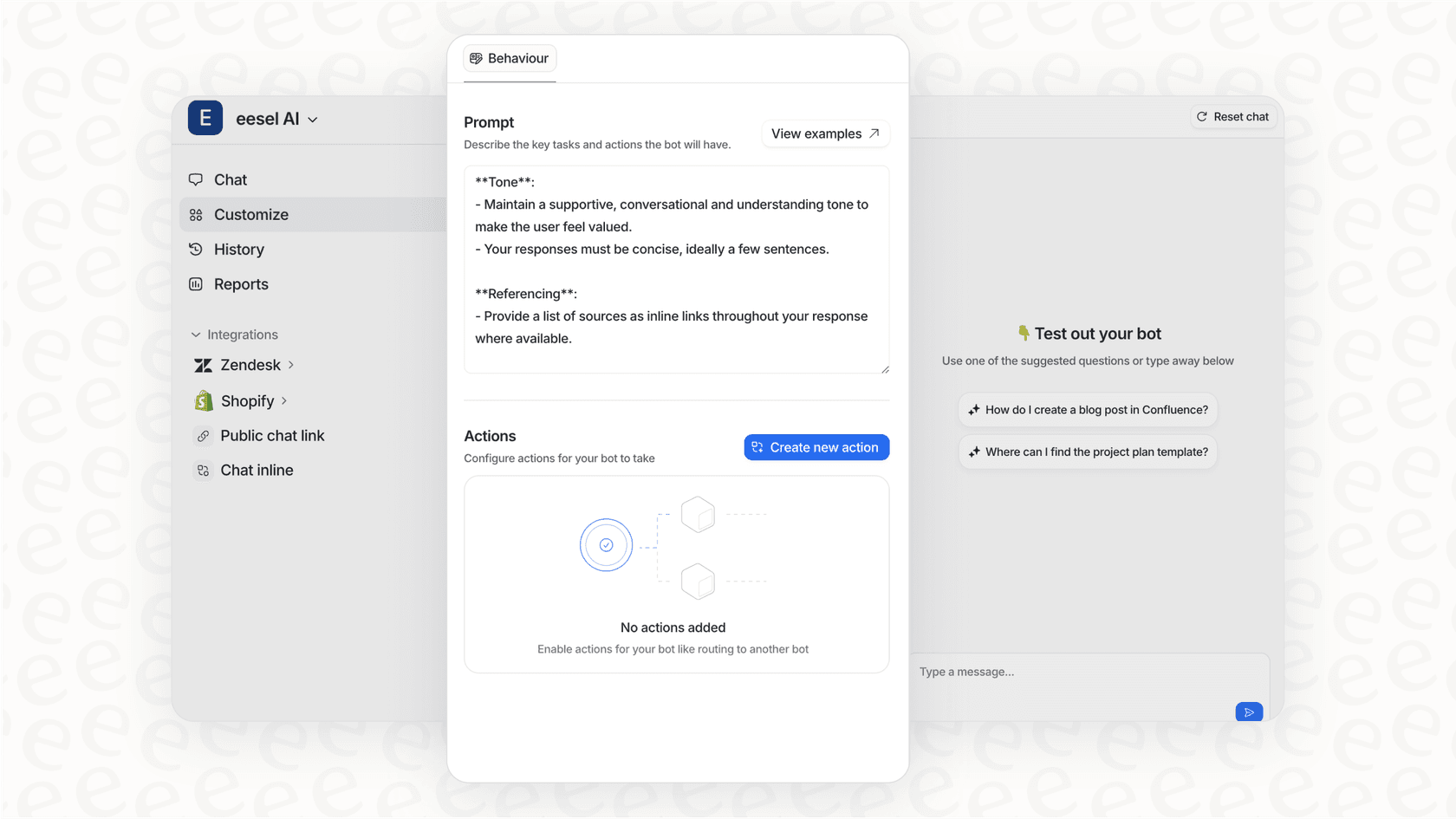

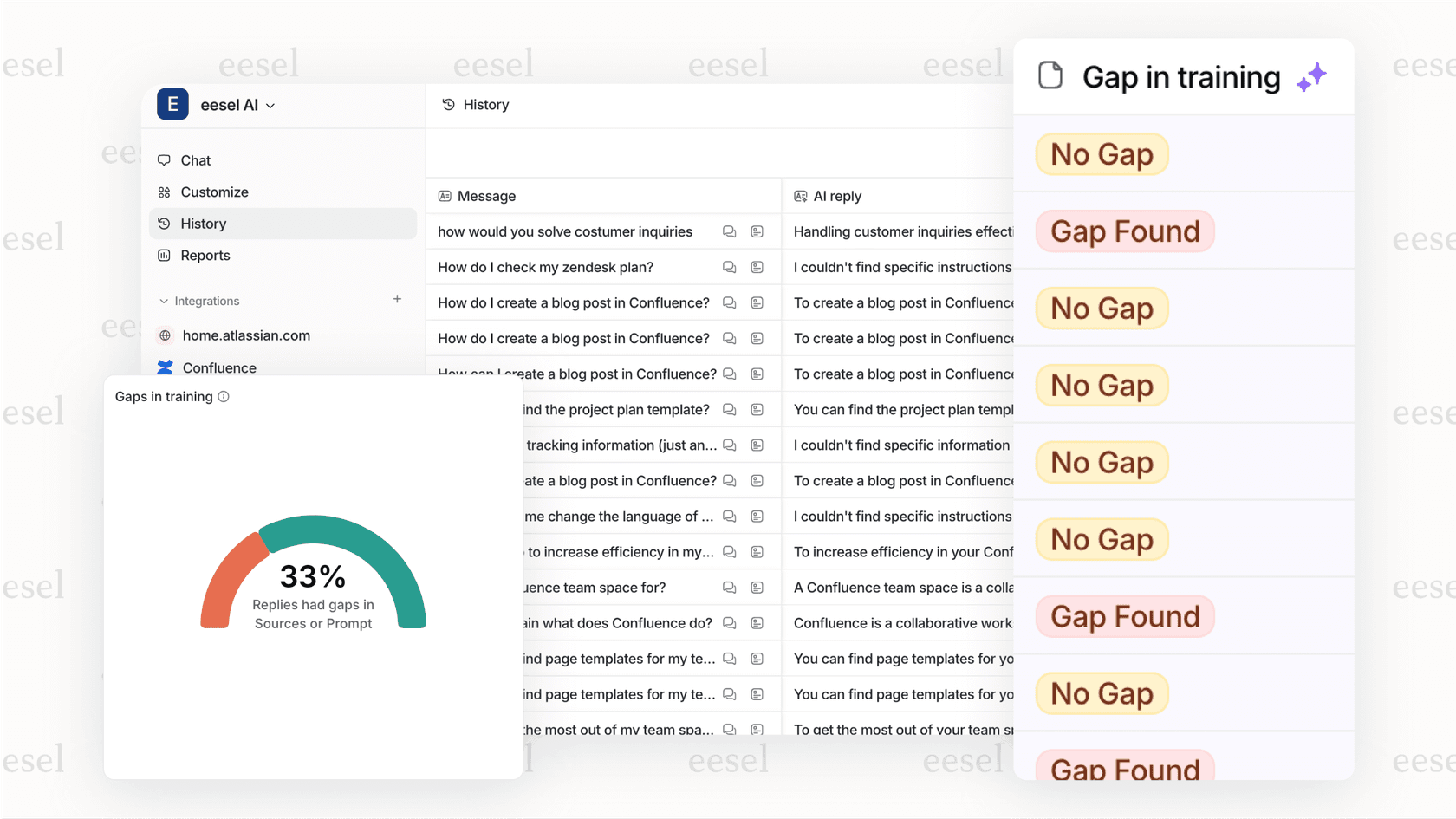

Building and maintaining a data pipeline takes engineering resources. If your team needs Zendesk analytics without the infrastructure overhead, eesel AI's AI Agent provides automated ticket analysis, reporting, and insights directly from your Zendesk data, no custom pipeline required.

Key best practices and limitations

Before you deploy to production, keep these points in mind.

Best practices

- Always use cursor-based pagination when available. The performance and consistency benefits are worth it.

- Store cursors atomically with data writes. Use a transaction to ensure cursor and data are always in sync.

- Handle the 1-minute exclusion window. Don't expect data newer than one minute ago.

- Implement idempotent writes. Design your destination system to handle duplicate records gracefully.

- Monitor rate limit headers. Proactive throttling beats reactive backoff.
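To illustrate the idempotent-write practice, here is an in-memory sketch of an upsert keyed by ticket id that keeps only the newest version. The `upsert_tickets` helper and the dict-based store are hypothetical stand-ins for a warehouse MERGE or upsert, and the sketch assumes updated_at values are ISO 8601 UTC strings in a single format.

```python
def upsert_tickets(store, tickets):
    """Apply tickets to a dict keyed by id, keeping the newest updated_at."""
    changed = 0
    for ticket in tickets:
        existing = store.get(ticket["id"])
        # ISO 8601 UTC strings in one format sort correctly as plain text.
        if existing is None or ticket["updated_at"] > existing["updated_at"]:
            store[ticket["id"]] = ticket
            changed += 1
    return changed


store = {}
page = [
    {"id": 1, "updated_at": "2026-03-01T10:00:00Z", "status": "open"},
    {"id": 2, "updated_at": "2026-03-01T11:00:00Z", "status": "new"},
]
first_run = upsert_tickets(store, page)
second_run = upsert_tickets(store, page)  # replaying the same page changes nothing
```

Because replaying a page is a no-op, a crash between the data write and the cursor save costs some repeated work but does not corrupt the destination.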

Limitations to know

- Deleted ticket retention: Deleted tickets remain in exports for approximately 120 days total (30 days until permanent deletion, then 90 days after scrubbing).

- Archived tickets: Tickets archived by Zendesk are not included in incremental exports.

- Scrubbed data: After 30 days, deleted tickets have their content scrubbed (replaced with "SCRUBBED" or "X").

- No real-time guarantees: The API is designed for batch syncs, not real-time streaming.

Start syncing your Zendesk data efficiently

The Zendesk API incremental export gives you a reliable way to keep external systems synchronized with your support data. By using cursor-based pagination, handling rate limits gracefully, and storing state properly, you can build robust data pipelines that scale with your ticket volume.

Key takeaways:

- Use cursor-based pagination for tickets and users when possible

- Implement exponential backoff for rate limit handling

- Store cursors atomically with your data writes

- Account for the 1-minute data exclusion window in your sync schedule

Building a custom pipeline makes sense when you have specific data transformation needs or are integrating with proprietary systems. But if you're primarily looking for analytics, reporting, and AI-powered insights from your Zendesk data, consider whether a managed solution like eesel AI might save your team months of engineering effort. Our Zendesk integration handles the data sync automatically, giving you immediate access to ticket analytics without writing a single line of API code.

Article by

Stevia Putri

Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.