Kimi K2.6: The New Standard for Agentic AI in 2026

Stevia Putri

Last edited April 20, 2026

The AI arms race in 2026 is no longer just about which model can summarize a PDF or write a clever poem. We've moved into the era of "agentic" orchestration—where models don't just answer questions, they execute entire projects.

Moonshot AI has just thrown a massive wrench into the gears of the current hierarchy with the release of Kimi K2.6. This isn't just another incremental update; it's a native multimodal agentic model designed to handle complex, autonomous work that would typically require a team of human developers. Even more disruptive is the price point: Kimi K2.6 is entering the market at a fraction of the cost of heavyweights like Claude 4.6 and GPT-5.4.

If you’ve been looking for an AI teammate that can actually get things done without a $500 monthly API bill, Kimi K2.6 might be the breakthrough you’ve been waiting for.

What’s New in Kimi K2.6?

Kimi K2.6 is built on a massive Mixture-of-Experts (MoE) architecture, boasting 1 trillion total parameters with 32 billion active parameters per forward pass. While those numbers are impressive, the real magic lies in its specialized capabilities.

- Long-Horizon Coding: K2.6 is a beast at end-to-end coding tasks. Whether you're working in Rust, Go, or Python, it generalizes across domains—from front-end design to complex DevOps performance optimization.

- Native Multimodal Power: Unlike models that rely on external vision encoders, Kimi K2.6 uses its native MoonViT vision encoder. This allows it to "see" UI screenshots and visual prompts and immediately transform them into production-ready full-stack code.

- Sequential Reasoning: One of the biggest hurdles for AI agents is losing the thread during long tasks. Kimi K2.6 can execute 200–300 sequential tool calls without human intervention, maintaining logic and coherence across hundreds of steps.
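To make the "sequential tool calls" idea concrete, here is a minimal sketch of an agent loop with a step budget. It is an illustration only, not Moonshot's implementation: the tool names, the `plan_next_call` stand-in for the model, and the `max_steps` cap are all hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tool registry; a real agent would expose shell, file, or HTTP tools.
TOOLS: Dict[str, Callable[[int], int]] = {
    "increment": lambda x: x + 1,
    "double": lambda x: x * 2,
}


def plan_next_call(state: int, target: int) -> Optional[str]:
    """Stand-in for the model: choose the next tool, or None when the goal is met."""
    if state >= target:
        return None
    # Prefer doubling while it does not overshoot the target.
    return "double" if 0 < state * 2 <= target else "increment"


def run_agent(start: int, target: int, max_steps: int = 300) -> Tuple[int, List[str]]:
    """Call tools one after another until the planner stops or the budget runs out."""
    state = start
    history: List[str] = []
    for _ in range(max_steps):
        tool = plan_next_call(state, target)
        if tool is None:
            break
        state = TOOLS[tool](state)  # each result feeds the next decision
        history.append(tool)
    return state, history


if __name__ == "__main__":
    final_state, calls = run_agent(start=1, target=10)
    print(final_state, calls)
```

The step budget is the important design choice here: it bounds how long the loop can run unattended, which is what a figure like "200–300 tool calls" describes.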

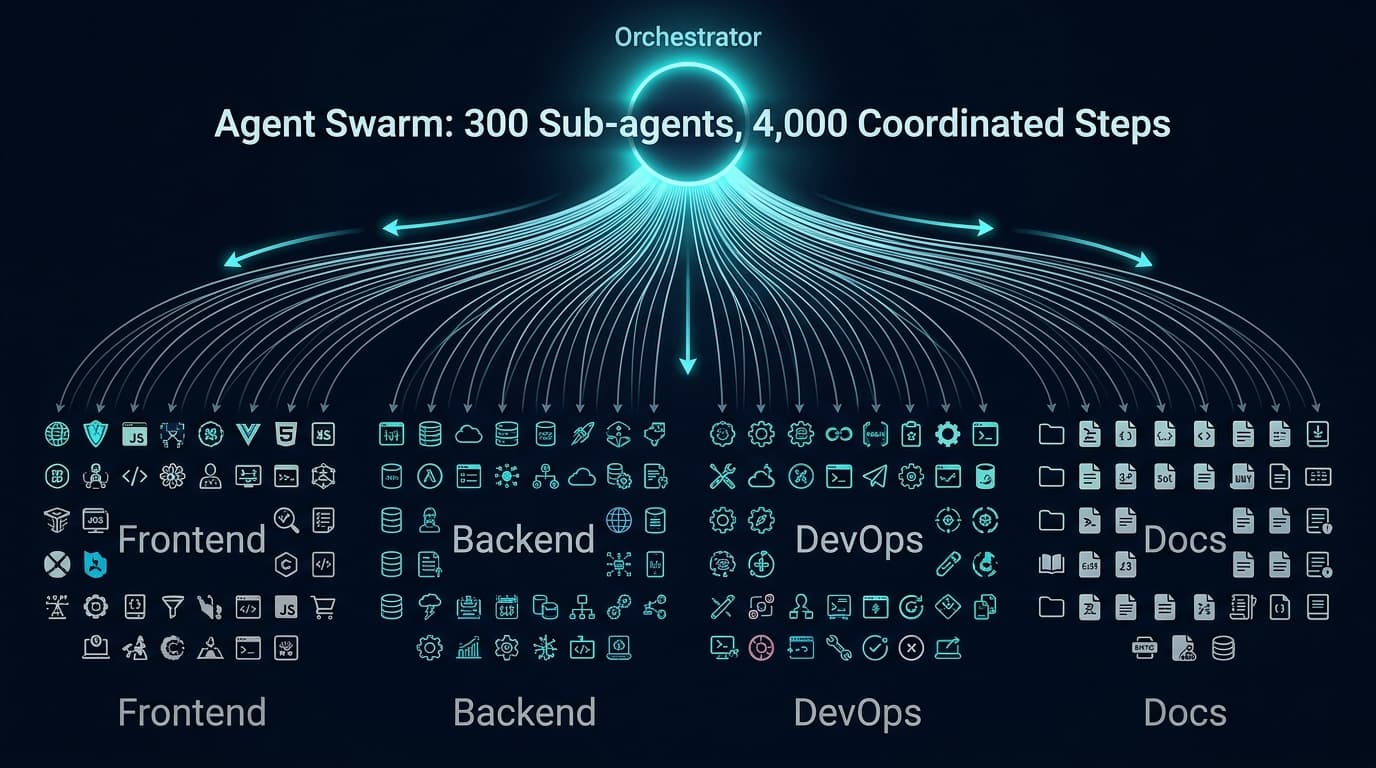

The "Agent Swarm" Breakthrough

The standout feature of Kimi K2.6 is its Agent Swarm orchestration. Most AI agents today are solo acts, but Kimi can scale horizontally to 300 specialized sub-agents.

In a single autonomous run, these sub-agents can execute up to 4,000 coordinated steps. Imagine asking an AI to build a full-stack web application. Instead of one model struggling to remember the database schema while writing the CSS, Kimi’s orchestrator dynamically decomposes the task into parallel sub-tasks. One sub-agent handles the backend, another the frontend, and a third manages the documentation, all coordinated by a central orchestrator.

This dynamic decomposition prevents the model from collapsing into slow, serial execution loops. It’s the difference between hiring one overwhelmed freelancer and an entire coordinated agency.
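The decomposition pattern described above can be sketched with Python's `asyncio`. This is a toy illustration under stated assumptions, not how Kimi's orchestrator actually works: the `decompose` rules, the `sub_agent` body, and the `max_parallel` cap are hypothetical, and a real sub-agent would call a model instead of returning a string.

```python
import asyncio
from typing import Dict, List


async def sub_agent(role: str, task: str) -> str:
    """Hypothetical sub-agent; a real one would call a model and tools here."""
    await asyncio.sleep(0)  # yield control so other sub-agents can run
    return f"[{role}] done: {task}"


def decompose(goal: str) -> Dict[str, str]:
    """Hard-coded split for illustration; an orchestrator would plan this dynamically."""
    return {
        "backend": f"design API and schema for {goal}",
        "frontend": f"build UI for {goal}",
        "docs": f"write documentation for {goal}",
    }


async def orchestrate(goal: str, max_parallel: int = 300) -> List[str]:
    """Fan sub-tasks out in parallel, capped at max_parallel, then collect results."""
    limit = asyncio.Semaphore(max_parallel)

    async def guarded(role: str, task: str) -> str:
        async with limit:
            return await sub_agent(role, task)

    subtasks = decompose(goal)
    # gather() returns results in the same order the sub-tasks were submitted.
    return await asyncio.gather(*(guarded(r, t) for r, t in subtasks.items()))


def run_swarm(goal: str) -> List[str]:
    return asyncio.run(orchestrate(goal))


if __name__ == "__main__":
    for line in run_swarm("a todo app"):
        print(line)
```

The semaphore mirrors the idea of a ceiling on concurrent sub-agents, while `gather` plays the role of the central coordinator that waits for every branch before merging the results.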

Benchmarks: How Kimi K2.6 Stacks Up

Moonshot isn't just making claims; the benchmarks back them up. In agentic reasoning tasks (specifically HLE-Full with tools), Kimi K2.6 scored 54.0%, edging out both Claude Opus 4.6 (53.0%) and GPT-5.4 (52.1%).

In coding benchmarks like SWE-Bench Verified, Kimi K2.6 hit an 80.2% success rate, a 3.4-point jump from the K2.5 baseline of 76.8%. But beyond the numbers, there’s the "vibe." Early testers on Reddit and YouTube have described K2.6’s reasoning as "Opus-flavored," noting its verbose, structured "Thinking Mode" that provides deep reasoning traces similar to Claude’s flagship models.

As AICodeKing noted on YouTube, "Kimi may be the best overall value if you care about performance, speed, and cost."

Pricing & Developer Accessibility

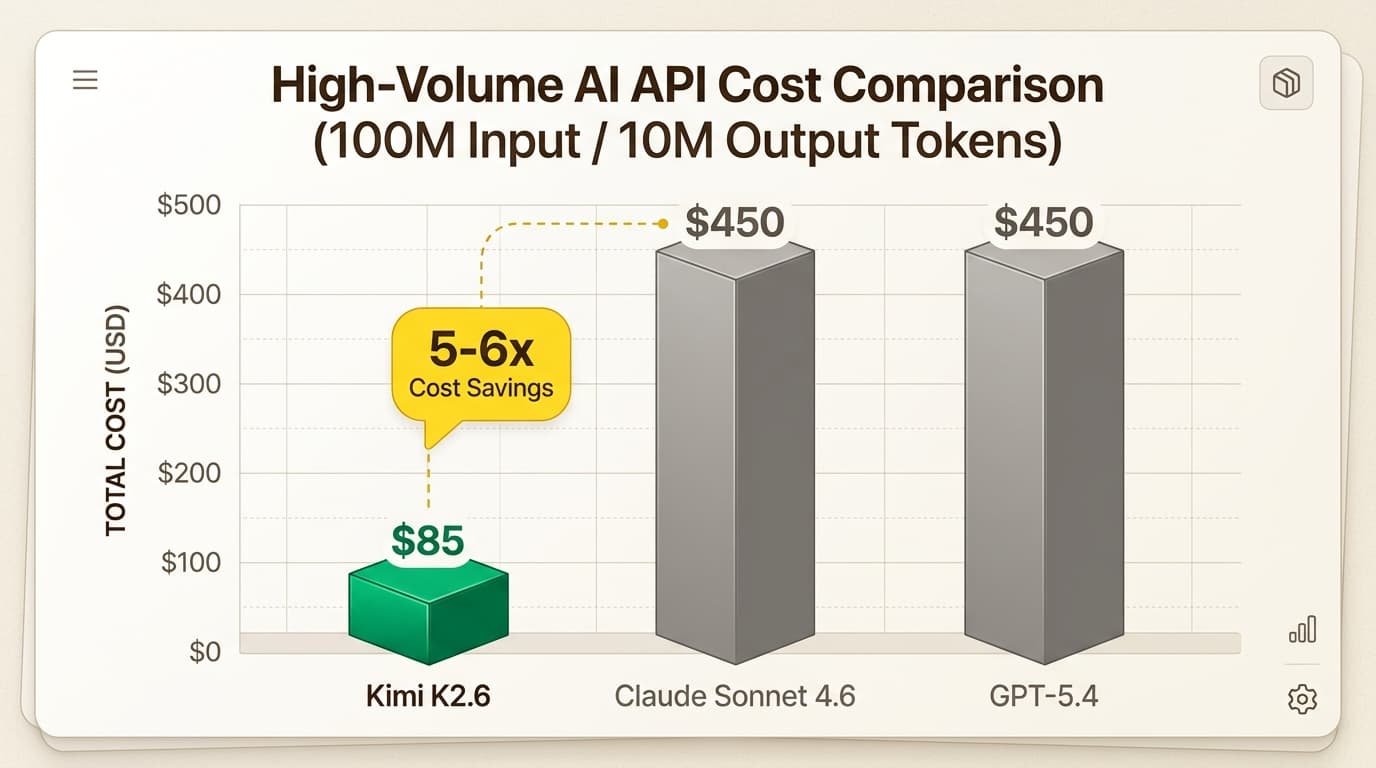

This is where Kimi K2.6 truly disrupts the market. Moonshot has priced the API at $0.60 per 1 million input tokens and $2.50 per 1 million output tokens.

To put that in perspective, that’s roughly 5-6x cheaper than Claude Sonnet 4.6 or comparable GPT models. For a developer or a startup running high-volume agents, this isn't just a marginal saving; it's a massive reduction in operational overhead.
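For a rough sense of what those rates mean in practice, here is a small cost estimator. The two rates come from the pricing quoted above; the monthly token volumes in the usage example are made-up figures for illustration.

```python
# Rates quoted in this article, in USD per 1 million tokens.
INPUT_USD_PER_MTOK = 0.60
OUTPUT_USD_PER_MTOK = 2.50


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate API spend in USD for a given number of input and output tokens."""
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MTOK
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MTOK
    return input_cost + output_cost


if __name__ == "__main__":
    # Hypothetical monthly volume: 50M input tokens and 10M output tokens.
    monthly = estimate_cost(input_tokens=50_000_000, output_tokens=10_000_000)
    print(f"Estimated monthly cost: ${monthly:.2f}")
```

At that hypothetical volume, the input side costs 50 × $0.60 = $30 and the output side costs 10 × $2.50 = $25, for about $55 in total.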

You can access Kimi K2.6 through:

- Kimi Code CLI: A terminal-first agent that plugs directly into your dev workflow.

- Moonshot API: Fully compatible with OpenAI and Anthropic SDKs for easy migration.

- Open-Source Weights: The weights are available on Hugging Face under a Modified MIT License for teams that want to self-host.
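Because the API is described as OpenAI-compatible, a request should follow the familiar chat-completions shape. The sketch below builds such a request with only the standard library. Treat the specifics as assumptions: the base URL and the `kimi-k2.6` model identifier are placeholders, so check Moonshot's documentation for the real values before using them.

```python
import json
import urllib.request

# Both values are assumptions for illustration; confirm them in Moonshot's docs.
BASE_URL = "https://api.moonshot.ai/v1"
MODEL_ID = "kimi-k2.6"  # hypothetical model identifier


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build an OpenAI-style chat-completions request without sending it."""
    body = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response (needs network and a valid key)."""
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping the request builder separate from `send` makes it easy to inspect or log the payload before spending any tokens. Teams already using an OpenAI-compatible SDK would typically change only the base URL, API key, and model name instead of hand-building requests like this.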

Use Cases: Beyond Just Chatting

Kimi K2.6 is designed for heavy lifting. It’s already powering persistent, 24/7 background agents that manage schedules, execute code, and orchestrate cross-platform operations without oversight.

For businesses, the potential is huge. You can take a UI screenshot of a dashboard you like and have Kimi K2.6 build a functional version of it in minutes.

At eesel AI, we’re particularly excited about how these agentic models can supercharge autonomous teammates. Whether it's an AI Helpdesk Agent drafting complex technical responses or an AI Triage Agent routing thousands of tickets based on deep reasoning, Kimi K2.6 provides the "brain" needed for truly autonomous operations.

Final Verdict: Should You Switch to Kimi K2.6?

If you are running high-volume AI agents and your API bills are starting to look like a second mortgage, the switch to Kimi K2.6 is a no-brainer. The combination of Agent Swarm orchestration and top-tier coding performance, at roughly 5-6x lower cost, is a winning formula for 2026.

There are minor hurdles: the English documentation is still catching up to the Chinese version, and the unified model identifiers in the API can be a bit tricky for strict CI/CD pipelines. However, for teams that need massive parallel task execution and reliable reasoning, Kimi K2.6 is currently the model to beat.

Article by

Stevia Putri

Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.