ServiceNow AI accuracy: what the numbers actually mean in 2026

Stevia Putri

Last edited March 15, 2026

When you're evaluating AI for your IT service management, accuracy isn't just a nice-to-have metric. It's the difference between an AI that actually reduces your ticket backlog and one that creates more work through bad recommendations and frustrated users.

ServiceNow has been investing heavily in AI capabilities, from Now Assist to AI Agents to Predictive Intelligence. But what kind of accuracy can you realistically expect? And what factors determine whether you'll hit the high end of their benchmarks or struggle with underperforming models?

Here's a breakdown of what the numbers actually mean, how ServiceNow measures AI performance, and what you need to know before making an investment decision.

What is ServiceNow AI and why accuracy matters

ServiceNow AI isn't a single product. It's a collection of capabilities built into the Now Platform, each with different accuracy profiles and use cases.

At the core is Now Assist, the generative AI layer that helps with everything from summarizing tickets to generating knowledge articles. Then there are AI Agents, which can act autonomously to resolve issues without human intervention. Predictive Intelligence uses machine learning to classify and route tickets automatically. And Virtual Agent handles self-service conversations through chat.

The challenge is that accuracy varies significantly across these capabilities. A tool that generates helpful summaries might struggle with autonomous ticket resolution. Understanding these differences matters because the cost of inaccurate AI in ITSM is high. A misrouted ticket delays resolution. A hallucinated response damages user trust. An incorrect classification sends urgent issues to the wrong team.

For teams looking to implement AI without the complexity of a full ServiceNow deployment, we offer an alternative approach. Our AI for ITSM solution learns from your existing tickets and knowledge bases, with a simulation mode that lets you test accuracy on past data before going live.

ServiceNow AI accuracy benchmarks

ServiceNow and their partners cite several accuracy metrics. Here's what the numbers look like and what they actually mean in practice.

Now Assist accuracy

ServiceNow claims up to 85% suggestion accuracy for Now Assist when it's properly trained on quality data. This applies to features like:

- Case and incident summarization

- Knowledge article generation

- Response drafting for agents

- Code and flow generation

The "up to" qualifier is important here. That 85% figure represents optimal conditions with clean, comprehensive historical data. In practice, many organizations see lower accuracy, especially during initial deployment.

Other reported metrics for Now Assist include:

- 300-500% increase in knowledge base article creation

- 35-50% improvement in first-call resolution rates

- 40-55% reduction in mean time to resolution (MTTR)

These outcomes depend heavily on your existing knowledge base quality and how well your agents document resolutions.

Virtual Agent performance

The Virtual Agent handles self-service conversations, and ServiceNow cites 45-60% deflection rates as achievable. That means roughly half to three-fifths of interactions can be resolved without reaching a human agent.

According to ServiceNow's AI Value Framework, successful Virtual Agent conversations save approximately 11.32 minutes per interaction compared to traditional case handling. The calculation assumes an employee would spend about 15 minutes on a low-complexity case, while a Virtual Agent resolves it in under 4 minutes.

Some customers report exceeding these benchmarks. CANCOM, for example, achieved an 80% ticket deflection rate across all departments using ServiceNow AI Agents. Griffith University saw an 87% increase in overall self-service rate.

But these results aren't typical. They need significant investment in knowledge base quality, conversation design, and ongoing optimization.
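The deflection and time-saving figures above come down to simple arithmetic. Here is a minimal sketch, assuming you can count conversations that ended without a handoff to a human agent. The function names and the sample month are hypothetical. Only the 15-minute baseline and the 11.32-minute saving come from the framework as described above.

```python
# Figures quoted from ServiceNow's AI Value Framework, as described above.
BASELINE_CASE_MINUTES = 15.0  # employee time on a low-complexity case
VA_SAVING_MINUTES = 11.32     # saved per successful Virtual Agent conversation


def deflection_rate(resolved_without_agent: int, total_conversations: int) -> float:
    """Share of conversations that ended without a handoff to a human agent."""
    if total_conversations <= 0:
        raise ValueError("total_conversations must be positive")
    if not 0 <= resolved_without_agent <= total_conversations:
        raise ValueError("resolved_without_agent must be between 0 and the total")
    return resolved_without_agent / total_conversations


def implied_va_minutes() -> float:
    """Virtual Agent handling time implied by the baseline and the quoted saving."""
    return BASELINE_CASE_MINUTES - VA_SAVING_MINUTES


if __name__ == "__main__":
    # Hypothetical month: 1,000 conversations, 520 resolved through self-service.
    rate = deflection_rate(520, 1000)
    print(f"Deflection rate: {rate:.0%}")
    print(f"Implied Virtual Agent handling time: {implied_va_minutes():.2f} min")
    print(f"Minutes saved this month: {520 * VA_SAVING_MINUTES:,.0f}")
```

A 52% rate would fall inside the 45-60% band. The implied handling time of about 3.68 minutes is consistent with the "under 4 minutes" claim.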

Predictive Intelligence accuracy

Here's where expectations need careful calibration. Without quality training data, Predictive Intelligence accuracy can be as low as 20-30%.

This capability handles:

- Automatic ticket classification

- Intelligent routing and assignment

- Similar incident detection

- Predicted time to resolution

The "garbage in, garbage out" problem is real here. If your historical tickets have inconsistent categorization, sparse descriptions, or incorrect routing decisions, the AI learns those patterns. One organization found their Predictive Intelligence model was only slightly better than random guessing because their historical data was so messy.
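A quick sanity check for this situation is to score the model against baselines that ignore ticket content entirely. Here is a minimal sketch, assuming you can export predicted and actual labels (for example, assignment groups) as plain lists. This is a generic evaluation technique, not a Predictive Intelligence API, and the sample labels are made up.

```python
from collections import Counter


def accuracy(predicted: list[str], actual: list[str]) -> float:
    """Fraction of tickets where the predicted label matches the recorded one."""
    if not actual or len(predicted) != len(actual):
        raise ValueError("need two non-empty lists of equal length")
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


def baselines(actual: list[str]) -> dict[str, float]:
    """Accuracy of two naive strategies that never look at the ticket text."""
    if not actual:
        raise ValueError("need at least one label")
    counts = Counter(actual)
    return {
        # Always predict the most frequent label in the history.
        "majority_class": counts.most_common(1)[0][1] / len(actual),
        # Expected accuracy of guessing uniformly among the labels seen.
        "uniform_random": 1 / len(counts),
    }


if __name__ == "__main__":
    # Made-up sample: four tickets, three assignment groups.
    actual = ["network", "network", "email", "access"]
    predicted = ["network", "email", "email", "network"]
    print("model:", accuracy(predicted, actual))
    print("baselines:", baselines(actual))
```

In this sample the model scores 0.5. "Always answer network" achieves exactly the same score, which is the kind of result messy historical data tends to produce.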

AI Search effectiveness

AI Search with Now Assist shows more consistent results. ServiceNow reports:

- 2.5 minutes saved per AI Search interaction

- 4.5 minutes saved when using Now Assist in search (compared to traditional click-through searches)

The math assumes a maximum search time of 5 minutes, with Now Assist reducing engagement time to about 30 seconds. These metrics are easier to achieve because search is a more constrained problem than open-ended conversation or autonomous resolution.

How ServiceNow measures AI accuracy

Understanding ServiceNow's measurement methodology helps interpret their benchmarks and set realistic expectations for your own deployment.

The AI Value Framework

ServiceNow measures AI impact through what they call the AI Value Framework. It tracks productivity gains across five personas: end users, human agents, process owners, developers, and leaders.

The framework expresses value as time saved, rolled up into total hours. For example:

- AI Search saves 2.5 minutes per successful search

- Now Assist in AI Search saves 4.5 minutes per interaction

- Virtual Agent conversations save 11.32 minutes per successful resolution

These time savings get multiplied by interaction volumes and converted to cost savings using employee hourly rates.
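The roll-up from minutes to hours to cost takes only a few lines to reproduce. Here is a minimal sketch. The per-interaction figures are the ones quoted above, including 4.5 minutes as the 5-minute search ceiling minus roughly 30 seconds of engagement. The monthly volumes and the $50 hourly rate are hypothetical placeholders.

```python
# Minutes saved per successful interaction, as quoted in this article.
MINUTES_SAVED = {
    "ai_search": 2.5,
    "now_assist_in_search": 5.0 - 0.5,  # 5-minute ceiling minus ~30 s engagement
    "virtual_agent": 11.32,
}


def value_estimate(volumes: dict[str, int], hourly_rate: float) -> tuple[float, float]:
    """Return (hours_saved, cost_saved) for counts of successful interactions."""
    unknown = set(volumes) - set(MINUTES_SAVED)
    if unknown:
        raise KeyError(f"no time-saving figure for: {sorted(unknown)}")
    minutes = sum(MINUTES_SAVED[kind] * count for kind, count in volumes.items())
    hours = minutes / 60
    return hours, hours * hourly_rate


if __name__ == "__main__":
    # Hypothetical monthly volumes and loaded hourly rate.
    monthly = {"ai_search": 1200, "now_assist_in_search": 600, "virtual_agent": 500}
    hours, cost = value_estimate(monthly, hourly_rate=50.0)
    print(f"{hours:.1f} hours saved, about ${cost:,.0f}")
```

The counts must be *successful* interactions. Multiplying by raw interaction volumes would overstate the savings.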

Two key composite metrics emerge from this framework:

Self-Service Efficiency Score: The percentage of requests AI handles versus those requiring live support. A 25% score means AI automates a quarter of potential support cases. Mature deployments might reach 62% or higher.

Agent Productivity Score: Quantifies how much work AI completes compared to human agents. If agents typically complete 7.3 work actions per hour, and AI handles 3 of those actions, the score shows AI contributing about 41% of the workload (3 / 7.3 ≈ 0.41).
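Both composite scores are simple ratios. Here is a minimal sketch, assuming the Self-Service Efficiency Score divides AI-handled requests by all requests (AI plus live support). ServiceNow's exact definitions may differ, and the function names are hypothetical. Worked through, the 3-of-7.3 example comes to about 41%.

```python
def self_service_efficiency(ai_handled: int, live_support: int) -> float:
    """Share of all requests resolved by AI rather than live support."""
    total = ai_handled + live_support
    if total <= 0:
        raise ValueError("no requests recorded")
    return ai_handled / total


def agent_productivity(ai_actions_per_hour: float, agent_actions_per_hour: float) -> float:
    """AI work actions relative to a human agent's hourly throughput."""
    if agent_actions_per_hour <= 0:
        raise ValueError("agent_actions_per_hour must be positive")
    return ai_actions_per_hour / agent_actions_per_hour


if __name__ == "__main__":
    print(f"{self_service_efficiency(250, 750):.0%}")  # a quarter of requests automated
    print(f"{agent_productivity(3, 7.3):.1%}")         # the 3-of-7.3 example
```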

Virtual Agent evaluation metrics

For Virtual Agent specifically, ServiceNow uses nine evaluation metrics (as documented in their AI Control Tower evaluation guide):

| Metric | What It Measures |
|---|---|
| Request Completion | Ability to fulfill user requests accurately |
| Intent Accuracy | Comprehension of user requests |
| Slot Filling | Extracting structured answers from responses |
| Smooth Flowing Conversation | Moving conversation forward without repetition |
| Context Retention | Using information provided during the conversation |
| Truthfulness | Avoiding hallucinations and fabrications |
| Conciseness | Avoiding verbose or generic responses |
| Coherence | Logical flow and clarity of responses |
| User Satisfaction | Weighted average of all other metrics |

Each metric is rated on a 3-point or 5-point scale and then normalized to a 5-point scale. ServiceNow calculates upper and lower deviations by comparing auto-evaluation scores with human judgments, then adjusts scores over time to align with human expectations.
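The rescaling and weighting step can be sketched as follows. This sketch makes three assumptions. First, ratings are rescaled proportionally (score × 5 / scale maximum). Second, the weights are placeholders, because the text doesn't say how ServiceNow weights each metric. Third, "deviation" is read as the largest gap above and below human scores. None of these function names are ServiceNow APIs.

```python
def to_five_point(score: float, scale_max: int) -> float:
    """Proportionally rescale a rating on a 3- or 5-point scale to a 5-point scale."""
    if scale_max not in (3, 5):
        raise ValueError("expected a 3- or 5-point scale")
    if not 0 < score <= scale_max:
        raise ValueError("score out of range")
    return score * 5 / scale_max


def weighted_satisfaction(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-metric scores already on a 5-point scale."""
    total_weight = sum(weights[m] for m in scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[m] * weights[m] for m in scores) / total_weight


def deviations(auto: list[float], human: list[float]) -> tuple[float, float]:
    """Largest amount auto-evaluation scored above (upper) and below (lower) humans."""
    if not auto or len(auto) != len(human):
        raise ValueError("need paired, non-empty score lists")
    gaps = [a - h for a, h in zip(auto, human)]
    return max(max(gaps), 0.0), min(min(gaps), 0.0)


if __name__ == "__main__":
    # Hypothetical ratings: Truthfulness on a 3-point scale, Conciseness on 5.
    scores = {
        "truthfulness": to_five_point(3, 3),
        "conciseness": to_five_point(4, 5),
    }
    weights = {"truthfulness": 2.0, "conciseness": 1.0}  # placeholder weights
    print("User Satisfaction:", round(weighted_satisfaction(scores, weights), 2))
    print("Deviations:", deviations([4.0, 3.0, 5.0], [3.5, 3.5, 5.0]))
```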

Continuous measurement approach

ServiceNow emphasizes that AI value assessment must be continuous, not one-time. They track five dimensions across every use case:

- Adoption: Monthly Active User percentage against total potential users

- Usage: Triggers and successful generations

- Sentiment: Proportion of positive feedback

- Accuracy: Output quality metrics suited to each use case

- Hours Saved: Business impact translation
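Tracking these five dimensions month over month can be as simple as a small scorecard. Here is a minimal sketch. The field names are hypothetical, and "usage" is expressed as the share of triggers that produced a successful generation. What counts as an "accurate" output is left to each use case, as the framework suggests.

```python
from dataclasses import dataclass


@dataclass
class MonthlyUseCaseStats:
    """Hypothetical monthly counts for one AI use case."""
    active_users: int
    potential_users: int
    triggers: int
    successful_generations: int
    positive_feedback: int
    total_feedback: int
    accurate_outputs: int        # "accurate" is defined per use case
    evaluated_outputs: int
    minutes_saved_per_success: float


def scorecard(s: MonthlyUseCaseStats) -> dict[str, float]:
    """Roll the five dimensions into one dictionary for trend tracking."""
    return {
        "adoption": s.active_users / s.potential_users,        # MAU share
        "usage": s.successful_generations / s.triggers,        # success per trigger
        "sentiment": s.positive_feedback / s.total_feedback,   # positive share
        "accuracy": s.accurate_outputs / s.evaluated_outputs,
        "hours_saved": s.successful_generations * s.minutes_saved_per_success / 60,
    }


if __name__ == "__main__":
    # Made-up month for a single use case.
    stats = MonthlyUseCaseStats(400, 1000, 2000, 1500, 90, 120, 170, 200, 4.0)
    for dimension, value in scorecard(stats).items():
        print(f"{dimension}: {value:.2f}")
```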

The company uses a Formula 1 pit crew analogy: just as racing teams review every pit stop to improve, organizations must continuously review AI interactions to optimize performance.

Factors that impact ServiceNow AI accuracy

The gap between benchmark claims and real-world results comes down to several key factors.

Data quality and the "Done Gap"

AI is only as good as its training data. ServiceNow documentation repeatedly emphasizes this point, but many organizations underestimate the preparation required.

The "Done Gap" refers to sparse resolution documentation. If your resolved incidents contain variations of "Issue resolved" or "Fixed per user request" without detailed troubleshooting steps, root cause analysis, or workarounds, your AI has minimal data to learn from.

This gap hurts all AI capabilities:

- Now Assist struggles to generate comprehensive knowledge articles

- Virtual Agent deflection rates hover below 15% without searchable content

- Predictive Intelligence accuracy remains at 20-30% with poor historical data

- AI Agents can't develop autonomous workflows without documented evidence of problem-solving

Closing this gap requires treating documentation as an automatic byproduct of problem-solving, not a separate task that competes with it.
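Before training anything, it's worth measuring how wide the Done Gap is in your own history. Here is a minimal sketch of a heuristic audit. The boilerplate phrases and the eight-word threshold are assumptions to tune against your own data, not a ServiceNow rule.

```python
import re

# Assumed boilerplate phrases that carry no troubleshooting detail.
BOILERPLATE = re.compile(r"\b(issue resolved|fixed per user request|resolved|fixed|done|closed)\b")
MIN_WORDS = 8  # assumption: fewer remaining words rarely capture steps or root cause


def is_done_gap(note: str) -> bool:
    """True if little substantive text remains once boilerplate is stripped."""
    remaining = BOILERPLATE.sub(" ", note.lower())
    return len(re.findall(r"[a-z0-9']+", remaining)) < MIN_WORDS


def done_gap_share(notes: list[str]) -> float:
    """Fraction of resolution notes that fall into the Done Gap."""
    return sum(is_done_gap(n) for n in notes) / len(notes) if notes else 0.0


if __name__ == "__main__":
    sample = [
        "Issue resolved",
        "Fixed per user request",
        "Root cause was an expired certificate on the VPN gateway; "
        "renewed the cert and restarted the service.",
    ]
    print(f"Done Gap share: {done_gap_share(sample):.0%}")
```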

Implementation complexity

ServiceNow AI isn't a feature you simply enable. Implementation typically requires:

- Dedicated administrators with platform expertise

- Long configuration projects

- Deep knowledge of ServiceNow's inner workings

The ServiceNow AI Maturity Index reveals the market's readiness varies considerably. Only 28% of respondents are "very familiar" with agentic AI, while 33% are actively piloting or using it with at least one fully functioning use case.

For smaller teams or organizations without ServiceNow experts on staff, this complexity is a significant hurdle. One Reddit user, a new solo admin for a company with over 5,000 employees, described the experience as "overwhelming."

Hallucination risks

A significant concern with any generative AI is the risk of "hallucinations" (the AI inventing answers with complete confidence). ServiceNow addresses this through their Truthfulness metric, which checks that responses are grounded in conversation and not fabricated.

However, as one user noted, "you then have to read it completely because of hallucinating." If agents must double-check every AI output, the time savings drop quickly.
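Review overhead comes straight out of the gross saving. Here is a minimal sketch with hypothetical numbers. The function names are illustrative, and `review_rate` (the share of outputs an agent reads in full) is an assumed parameter.

```python
import math


def net_minutes_saved(gross_saving: float, review_minutes: float,
                      review_rate: float = 1.0) -> float:
    """Per-interaction saving after agents verify a share of AI outputs.

    review_rate is the fraction of outputs read in full (1.0 means every one).
    """
    if not 0.0 <= review_rate <= 1.0:
        raise ValueError("review_rate must be between 0 and 1")
    return gross_saving - review_minutes * review_rate


def break_even_review_minutes(gross_saving: float, review_rate: float = 1.0) -> float:
    """Review time per output at which the AI stops saving any time."""
    if review_rate == 0:
        return math.inf  # nothing is reviewed, so review time never erodes savings
    return gross_saving / review_rate


if __name__ == "__main__":
    # Hypothetical: a summary saves 4.5 min but takes 3 min to check properly.
    print(net_minutes_saved(4.5, 3.0))        # every output reviewed
    print(net_minutes_saved(4.5, 3.0, 0.5))   # half of outputs reviewed
    print(break_even_review_minutes(4.5))
```

Once review time reaches the gross saving, the AI costs time instead of saving it.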

An Avanade study cited by Perspectium reports a significant decline in trust in AI-generated outputs. While more businesses are adopting AI, many are becoming more cautious about relying on it because of concerns over accuracy and consistency.

Real-world accuracy expectations

So what should you actually expect? The answer depends on your organizational context.

Best case scenarios

Organizations that achieve the highest accuracy rates typically share these characteristics:

- Large enterprises with mature ServiceNow ecosystems

- High-quality historical data with comprehensive documentation

- Dedicated AI teams and governance structures

- Significant investment in knowledge base maintenance

- "Pacesetters" (top 18.2% in AI maturity) who connect data and operational silos

ServiceNow's own deployment demonstrates what's possible with full commitment. Starting with a single use case (incident summarization for IT agents), they've expanded to over 50 live implementations across multiple personas with 500+ AI use cases total.

Common challenges

More typical experiences include:

- Virtual Agent deflection rates below 15% without proper knowledge base investment

- Predictive Intelligence accuracy at 20-30% with poor data quality

- Integration complexity requiring external consultants or extended timelines

- User trust erosion after encountering AI hallucinations or incorrect routing

The AI Maturity Index also finds that 43% of organizations are considering adopting agentic AI within the next year, but many are still in early exploration phases.

Who achieves the highest accuracy

The "Pacesetters" identified in ServiceNow's research (18.2% of respondents) share a key trait: 56% have made significant progress connecting data and operational silos, compared to 41% of others. This data connectivity enables them to adopt custom AI models and integrate best-of-breed solutions rather than relying solely on out-of-the-box features.

These organizations often extract ServiceNow data to train their own models for specialized use cases like predictive incident management, root cause analysis, and intelligent ticket routing based on historical patterns.

Improving ServiceNow AI accuracy

If you're committed to ServiceNow AI, several strategies can help you achieve better accuracy.

Data preparation strategies

- Clean and organize historical tickets before training models

- Implement processes for comprehensive resolution documentation

- Invest in knowledge base quality and completeness

- Establish data governance to maintain quality over time

Implementation best practices

- Start with pilot projects rather than platform-wide deployment

- Define clear KPIs and measurement frameworks before launch

- Plan for continuous monitoring and adjustment

- Invest in change management to drive adoption

When to consider alternatives

ServiceNow AI makes sense for large organizations already invested in the ServiceNow ecosystem with dedicated platform expertise. But it's not the right fit for every team.

Consider alternatives if you:

- Lack deep ServiceNow expertise on your team

- Need faster time-to-value than a lengthy implementation allows

- Want simpler deployment without sacrificing accuracy

- Prefer to test AI performance on your actual data before going live

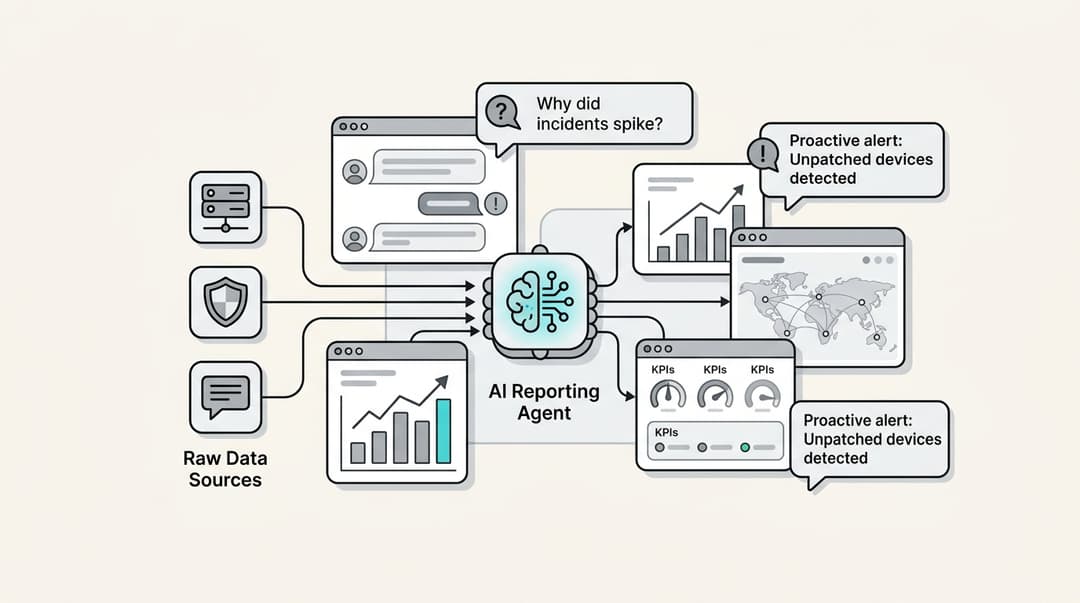

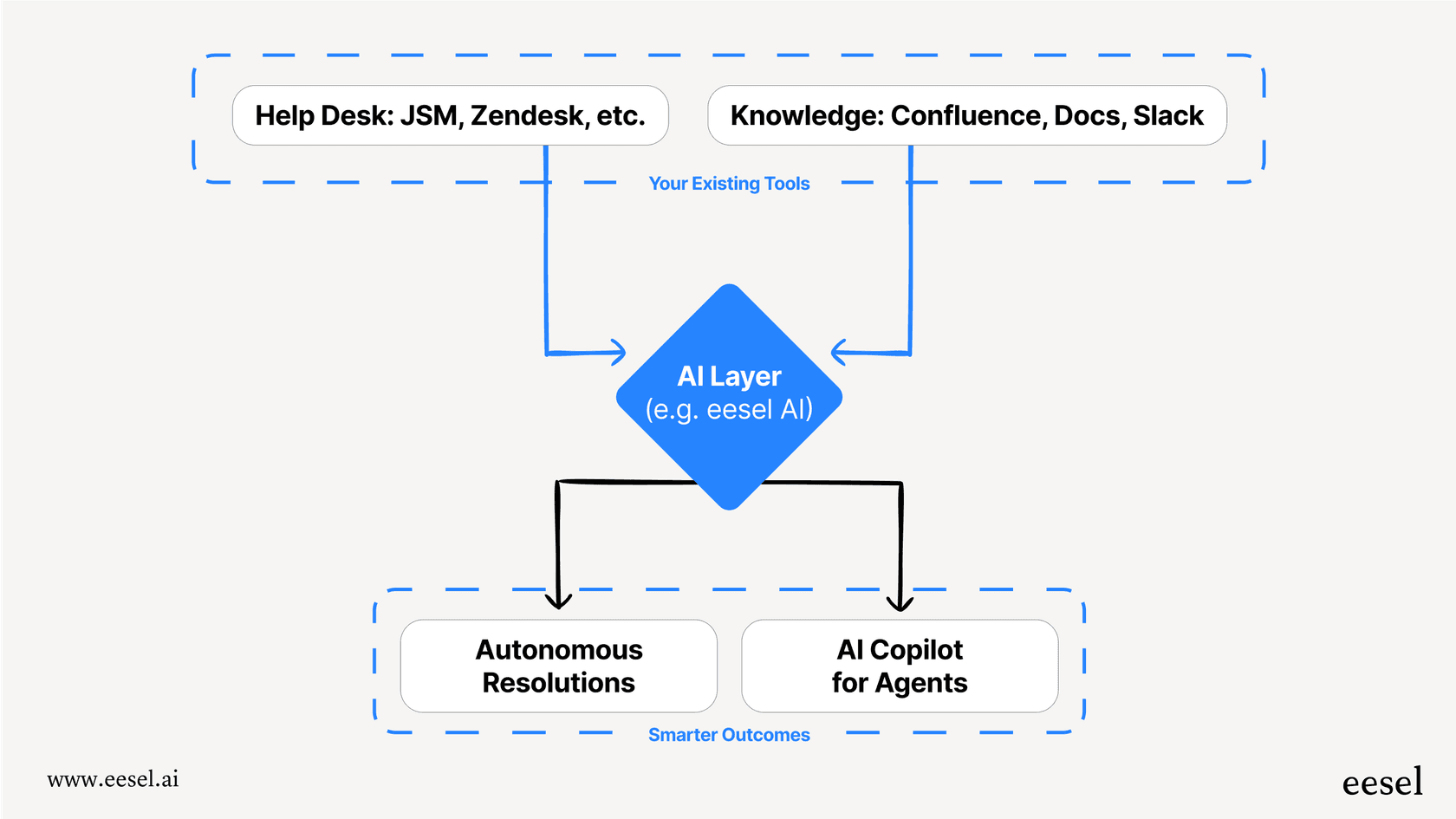

We designed eesel AI for teams who want powerful ITSM AI without the complexity. We connect to your existing help desk, learn from your past tickets and knowledge bases, and let you run simulations on historical data to see exactly how the AI will perform before it ever interacts with a customer. You can start with specific ticket types, review AI drafts before they're sent, and expand scope as the AI proves itself.

Making the right choice for your ITSM AI needs

ServiceNow AI accuracy ranges widely depending on your data quality, implementation approach, and organizational readiness. The benchmarks are achievable, but they require significant investment in preparation, configuration, and ongoing optimization.

Before committing to ServiceNow AI, ask yourself:

- Do we have clean, comprehensive historical data?

- Do we have dedicated ServiceNow expertise for implementation?

- Are we prepared for a multi-month deployment before seeing results?

- Do we have governance structures to maintain AI accuracy over time?

If the answer to several of these is "no," you'll likely achieve better results with a solution designed for faster deployment and progressive rollout. The key is matching the tool to your team's capabilities and timeline, not just the feature list.

Want to see how AI could perform on your actual tickets? Try our simulation mode and measure accuracy on your historical data before making any commitments.



Stevia Putri is a marketing generalist at eesel AI, where she helps turn powerful AI tools into stories that resonate. She’s driven by curiosity, clarity, and the human side of technology.